Key takeaways

- AI search visitors convert at 2x the rate of regular organic visitors, making AI visibility a direct revenue lever, not just a vanity metric

- Most brands now track their AI visibility but stop there -- the gap between monitoring and actually improving is where customers are lost

- Being absent from a single AI-generated answer can eliminate you from a buyer's consideration before they ever visit your site

- The fix isn't more dashboards -- it's a closed loop: find content gaps, create content engineered for AI citation, and track the results

- Tools that only monitor leave you knowing you have a problem but not how to fix it

The dashboard trap

There's a specific kind of frustration that comes from staring at a monitoring dashboard that tells you exactly how invisible you are -- and nothing else.

In 2026, most marketing teams have figured out that AI search is real. ChatGPT has 910 million weekly active users. Google AI Overviews reach 2 billion people monthly across 200+ countries. Perplexity is growing fast. These aren't niche tools anymore -- they're where a meaningful slice of your potential customers start their research.

So teams set up tracking. They watch their brand mention rates across ChatGPT, Perplexity, and Gemini. They see a number -- maybe it's 12% share of voice, maybe it's 3% -- and they feel informed. Then they go back to their regular work.

That's the trap. Monitoring without acting is just expensive anxiety.

The real cost isn't the subscription fee for a tracker. It's the customers who asked an AI assistant "what's the best [your category] tool for [your use case]?" and got back a list that didn't include you. Those customers didn't bounce from your website. They never arrived.

Why AI absence hits harder than a ranking drop

Traditional SEO had a certain forgiveness built in. If you dropped from position 2 to position 5 for a keyword, you still got clicks. Users scanned results, compared options, clicked around. You had multiple chances to appear.

AI search doesn't work that way.

When someone asks ChatGPT or Perplexity a question, they typically get one structured answer. Maybe a short list of 3-4 options. Maybe a single recommendation. The AI doesn't show them ten blue links to browse -- it makes a judgment call and presents it as fact.

If you're not in that answer, you don't exist for that query. Full stop.

This is what makes AI visibility fundamentally different from traditional rankings. According to data from Conductor (2026), AI search visitors buy at twice the rate of regular organic visitors. These are high-intent people who've already had a conversation with an AI, gotten a recommendation, and are now acting on it. Being excluded from that conversation doesn't just cost you traffic -- it costs you your highest-converting traffic.

Meanwhile, Ahrefs data from 2026 shows that AI Overviews have cut top-spot clicks by 58%. So even if you rank #1 in Google, a significant portion of users are getting their answer from the AI Overview above your result and never clicking through. The traffic you thought you had is quietly eroding.

The brands winning in this environment aren't just the ones who know they have an AI visibility problem. They're the ones who've done something about it.

What "monitoring only" actually looks like in practice

Here's a realistic scenario. A B2B SaaS company sets up an AI visibility tracker. They're checking 50 prompts across ChatGPT, Perplexity, and Google AI Overviews. The dashboard shows:

- Brand mention rate: 8%

- Competitor A mention rate: 34%

- Competitor B mention rate: 29%

They know they're losing. But the tool doesn't tell them why Competitor A is at 34%, which specific pages on Competitor A's site are getting cited, what content gaps on their own site are causing the absence, or what to write to close those gaps.
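For context, a mention rate like the ones above is just the share of tracked answers that name a brand. Here is a minimal sketch of that calculation. The `mention_rate` helper, the sample answers, and the brand names are all made up for illustration and do not reflect how any particular tracker works.

```python
import re


def mention_rate(answers: list[str], brand: str) -> float:
    """Return the share of answers that mention `brand` at least once."""
    if not answers:
        return 0.0
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeology".
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in answers if pattern.search(text))
    return hits / len(answers)


# Hypothetical AI answers collected for four tracked prompts.
answers = [
    "Top options include Acme and Globex.",
    "Most teams start with Globex.",
    "Acme, Globex, and Initech are popular choices.",
    "Globex is the usual recommendation.",
]

for brand in ("Acme", "Globex", "Initech"):
    print(f"{brand}: {mention_rate(answers, brand):.0%}")
```

The number tells you how often you show up. It says nothing about which pages earned the competitor's mentions, which is exactly the limitation described above.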

So they do... nothing actionable. Maybe they screenshot the dashboard for a quarterly review. Maybe someone says "we should work on this." Three months later, the numbers are roughly the same.

This is the monitoring-only trap in practice. The data is real. The problem is real. But without a path from insight to action, nothing changes.

Several tools in the market are built primarily around this monitoring layer, Otterly.AI among them. They're good at showing you the problem.

These tools serve a purpose -- knowing you have a problem is better than not knowing. But they leave you on your own to figure out what to do about it.

The three things that actually move the needle

Getting from "we're invisible in AI search" to "we're being cited regularly" requires three things working together.

1. Finding the specific gaps

Not all AI visibility gaps are equal. Some prompts have high search volume and are winnable. Others are dominated by authoritative sources you'll never displace. You need to know which is which.

Answer gap analysis -- looking at which prompts your competitors appear for that you don't -- is the starting point. But you also need prompt volume data (how often is this question actually being asked?) and difficulty scoring (how hard is it to break into this answer?).

Without this, you're guessing at what content to create. With it, you can prioritize the 10 prompts where you're absent but could realistically appear within 60-90 days.
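One way to picture that prioritization is a simple score that rewards volume and penalizes difficulty. The sketch below assumes a 0-to-1 difficulty scale and a made-up scoring formula (`volume * (1 - difficulty)`). The `PromptStats` fields and sample prompts are hypothetical, so treat this as an illustration rather than a recommended model.

```python
from dataclasses import dataclass


@dataclass
class PromptStats:
    prompt: str
    monthly_volume: int       # estimated times the prompt is asked per month
    difficulty: float         # assumed scale: 0.0 (easy) to 1.0 (very hard)
    we_appear: bool           # our brand shows up in the AI answer
    competitors_appear: bool  # at least one competitor shows up


def answer_gaps(stats: list[PromptStats]) -> list[PromptStats]:
    """Prompts where competitors are present and we are absent."""
    return [s for s in stats if s.competitors_appear and not s.we_appear]


def priority(s: PromptStats) -> float:
    """Illustrative score: more volume is better, more difficulty is worse."""
    return s.monthly_volume * (1.0 - s.difficulty)


def top_gaps(stats: list[PromptStats], n: int = 10) -> list[PromptStats]:
    """Return the n highest-priority gaps, best first."""
    return sorted(answer_gaps(stats), key=priority, reverse=True)[:n]


# Hypothetical prompt data for a CRM vendor.
stats = [
    PromptStats("best crm for startups", 5000, 0.9, False, True),
    PromptStats("crm with email sequencing", 1200, 0.3, False, True),
    PromptStats("crm pricing comparison", 800, 0.5, True, True),
    PromptStats("what is a crm", 6000, 0.95, False, True),
]

for s in top_gaps(stats):
    print(f"{priority(s):8.0f}  {s.prompt}")
```

In this made-up data, the lower-volume but easier prompt ranks first, which is the point of scoring difficulty alongside volume.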

2. Creating content that AI models actually cite

This is where most teams get stuck. They know they need content. They write something. It doesn't get cited. They're not sure why.

AI models cite content that directly answers questions, comes from authoritative sources, uses clear structure, and covers the specific angles the model is looking for. Generic blog posts written for keyword rankings often miss these marks entirely.

The content that gets cited tends to be specific, comprehensive on a narrow topic, and structured so an AI can extract a clean answer. Comparisons, listicles with real data, and direct Q&A formats tend to perform well. Content that hedges everything and says nothing tends to get ignored.

3. Tracking whether it worked

This is the part most teams skip. They publish content, assume it's helping, and never close the loop.

Page-level tracking -- seeing exactly which of your pages are being cited, by which AI models, and how often -- tells you whether your content strategy is working. Traffic attribution (connecting AI citations to actual site visits and conversions) tells you whether it's worth it financially.

Without this feedback loop, you're optimizing blind. With it, you can double down on what's working and fix what isn't.
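As a rough illustration of the attribution half of that loop, the sketch below groups visits by referrer and computes a conversion rate per source. The referrer hostnames in `AI_REFERRERS` are assumptions that you should check against your own analytics data, and the visit records are invented.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; verify these against your own analytics.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> str:
    """Map a referrer URL to an AI source name, or "other" if unrecognized."""
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRERS.get(host, "other")


def conversion_rates(visits: list[tuple[str, bool]]) -> dict[str, float]:
    """Conversion rate per source from (referrer, converted) records."""
    totals: Counter[str] = Counter()
    converted: Counter[str] = Counter()
    for referrer, did_convert in visits:
        source = classify_referrer(referrer)
        totals[source] += 1
        converted[source] += int(did_convert)
    return {source: converted[source] / totals[source] for source in totals}


# Hypothetical visit records: (referrer URL, whether the visit converted).
visits = [
    ("https://chatgpt.com/", True),
    ("https://chatgpt.com/", False),
    ("https://www.perplexity.ai/search?q=crm", True),
    ("https://www.google.com/search?q=crm", False),
    ("", False),
]

print(conversion_rates(visits))
```

Adding the landing page to each record would give the page-level view: which pages AI sources send people to, and which of those visits convert.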

The cost of waiting

Let's be concrete about what inaction costs.

If AI search visitors convert at 2x the rate of regular organic visitors, and your competitors are capturing the majority of AI citations in your category, the math is uncomfortable. Every month you're monitoring without acting is another month of high-intent buyers being handed to your competitors by ChatGPT, Perplexity, and Google AI Overviews.
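To put rough numbers on that math, here is a back-of-the-envelope sketch. Every input is a hypothetical placeholder (missed visits, conversion rate, deal value). Only the 2x multiplier comes from the conversion figure cited above, so plug in your own data before drawing conclusions.

```python
def monthly_revenue_gap(
    missed_ai_visits: int,
    organic_conversion_rate: float,
    average_deal_value: float,
    ai_multiplier: float = 2.0,
) -> float:
    """Estimate monthly revenue lost to AI answers that omit the brand.

    Assumes AI-referred visitors convert at `ai_multiplier` times the
    organic conversion rate.
    """
    ai_conversion_rate = organic_conversion_rate * ai_multiplier
    return missed_ai_visits * ai_conversion_rate * average_deal_value


# Hypothetical inputs: 1,000 missed visits, 2% organic conversion, $500 per deal.
gap = monthly_revenue_gap(
    missed_ai_visits=1_000,
    organic_conversion_rate=0.02,
    average_deal_value=500.0,
)
print(f"Estimated monthly revenue going to competitors: ${gap:,.0f}")
```

With these placeholder inputs the estimate comes to about $20,000 a month. The real figure depends entirely on how many AI-referred visits you are actually missing.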

The brands that built strong AI visibility in 2024 and 2025 are now entrenched. AI models have seen their content repeatedly, cited it, and built it into their training and retrieval patterns. Getting cited once tends to lead to getting cited more -- there's a compounding effect to AI visibility that mirrors what happened with domain authority in traditional SEO.

Waiting another quarter to "see how AI search develops" is the same mistake companies made with mobile optimization in 2012 and with Google's featured snippets in 2017. The window to establish early authority is closing.

What a real optimization workflow looks like

The difference between a monitoring tool and an optimization platform is whether it helps you take action.

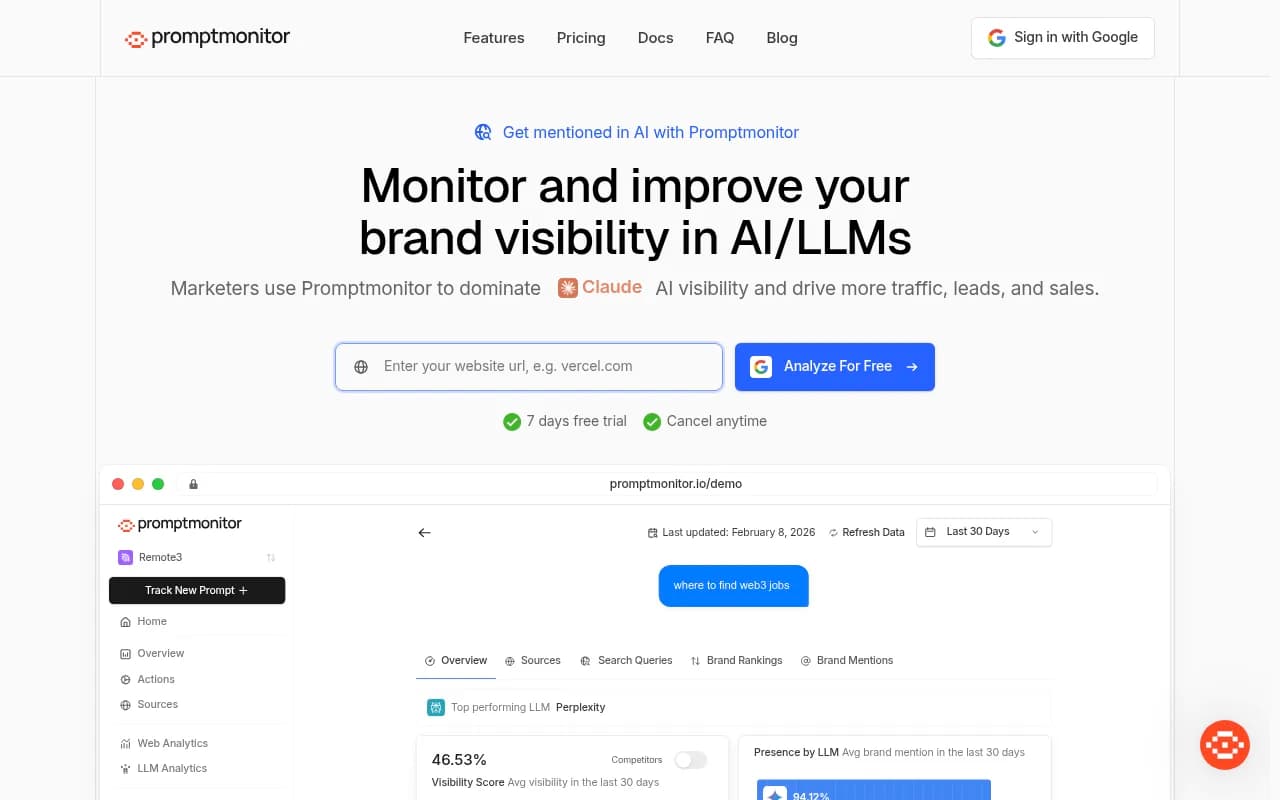

Promptwatch is built around this distinction. Rather than just showing you visibility scores, it runs the full loop: Answer Gap Analysis identifies which prompts competitors rank for that you don't, a built-in AI writing agent generates content engineered for AI citation (grounded in 880M+ citations analyzed), and page-level tracking with traffic attribution closes the loop to show what's actually working.

The crawler log feature is particularly useful -- it shows you in real time which AI crawlers (ChatGPT, Claude, Perplexity, etc.) are hitting your site, which pages they're reading, and what errors they're encountering. If GPTBot is crawling your homepage but never reaching your product comparison pages, that's a fixable problem. Most monitoring tools don't surface this at all.
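If you want a rough version of this from your own server logs, the sketch below counts hits and errors per AI crawler and path. It assumes logs in the common "combined" access log format and matches on user-agent substrings. The sample log lines are invented, and this is not how Promptwatch's crawler log feature is implemented.

```python
import re
from collections import defaultdict

# User-agent substrings for a few AI crawlers; extend as needed.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Pulls the path, status code, and user agent out of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_report(lines: list[str]) -> dict[tuple[str, str], dict[str, int]]:
    """Count hits and 4xx/5xx errors per (crawler, path)."""
    report: dict = defaultdict(lambda: {"hits": 0, "errors": 0})
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        bot = next((b for b in AI_CRAWLERS if b in match["agent"]), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        entry = report[(bot, match["path"])]
        entry["hits"] += 1
        if int(match["status"]) >= 400:
            entry["errors"] += 1
    return dict(report)


# Hypothetical log lines with simplified user-agent strings.
log_lines = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:05 +0000] "GET /compare HTTP/1.1" 404 310 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for (bot, path), counts in crawler_report(log_lines).items():
    print(bot, path, counts)
```

A crawler that hits your homepage but gets errors on comparison pages, as in the invented data here, is the kind of fixable problem worth looking for.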

For teams that want to start with monitoring and build from there, a few other platforms are worth knowing about: Profound, Scrunch, and AthenaHQ.

The honest comparison: Profound and Scrunch have strong feature sets and enterprise-grade tracking. AthenaHQ is solid on monitoring. But none of them close the loop the way a platform with built-in content generation and gap analysis does. You'll still need to take the insights somewhere else to act on them.

A practical starting point for 2026

If you're currently monitoring but not acting, here's a realistic 30-day plan to change that:

Week 1: Audit your current visibility

Run your top 20-30 prompts across at least 3 AI models. Note which prompts you appear in, which you don't, and which competitors appear instead of you. This is your baseline.

Week 2: Identify winnable gaps

From your list of absent prompts, identify the ones where competitors are present but not dominant -- where the AI answer includes 3-4 sources and none of them are the obvious authority. These are your best opportunities.

Week 3: Create targeted content

For each winnable gap, create one piece of content that directly answers the prompt. Be specific. Use clear structure. Include data, comparisons, or direct recommendations where relevant. Don't write for keywords -- write for the question.

Week 4: Publish, track, and iterate

Publish the content, make sure it's crawlable (check your robots.txt, sitemap, and page speed), and set up page-level tracking to see if AI models start citing it. Give it 4-6 weeks before drawing conclusions.
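The robots.txt part of that crawlability check is easy to script. Below is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the example.com URL, and the list of crawler names are illustrative assumptions, so substitute your own file and the user agents you care about.

```python
from urllib.robotparser import RobotFileParser


def blocked_agents(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the user agents that robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that accidentally blocks one AI crawler
# from the comparison pages.
robots_txt = """\
User-agent: GPTBot
Disallow: /compare/

User-agent: *
Allow: /
"""

blocked = blocked_agents(
    robots_txt,
    "https://example.com/compare/acme-vs-globex",
    ["GPTBot", "ClaudeBot", "PerplexityBot"],
)
print("Blocked crawlers:", blocked)
```

Running a check like this against each newly published URL catches the case where a page is live but an AI crawler is not allowed to read it.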

This isn't a one-time project. AI visibility is an ongoing channel that requires the same sustained attention as SEO or content marketing. The brands treating it as a quarterly checkbox will keep losing to the ones treating it as a weekly workflow.

Monitoring vs. optimization: a quick comparison

| Capability | Monitoring-only tools | Optimization platforms |
|---|---|---|
| Brand mention tracking | Yes | Yes |
| Competitor visibility | Yes | Yes |
| Prompt volume & difficulty | Rarely | Yes |
| Answer gap analysis | No | Yes |
| Content generation for AI | No | Yes |
| AI crawler logs | No | Yes (Promptwatch) |
| Page-level citation tracking | Sometimes | Yes |
| Traffic attribution | No | Yes |
| Reddit/YouTube citation tracking | No | Yes (Promptwatch) |
| ChatGPT Shopping tracking | No | Yes (Promptwatch) |

The table makes the gap obvious. Monitoring tools reliably give you the first two rows. Optimization platforms give you everything.

The bottom line

Knowing you're invisible in AI search and doing nothing about it is hardly better than not knowing at all -- you're paying for the anxiety without the solution.

The brands that will win the next two years of AI search aren't the ones with the best dashboards. They're the ones who found their content gaps, created content that AI models actually want to cite, and built a feedback loop to keep improving. That's a workflow, not a subscription.

If your current setup tells you the problem but not how to fix it, that's worth changing now -- not next quarter.