Key takeaways

- Most AI visibility platforms only count mentions -- they don't check whether what AI says about your brand is actually true.

- Hallucinated brand information (wrong pricing, fake features, incorrect comparisons) can actively cost you deals, not just visibility.

- Only a handful of platforms in 2026 offer any meaningful hallucination or accuracy detection; most treat a wrong mention the same as a correct one.

- The best platforms combine detection with action: identifying what AI says wrong, then helping you fix it by creating the right content.

- Promptwatch is one of the few platforms that closes the full loop -- from spotting gaps and inaccuracies to generating content that corrects the record.

There's a scenario that keeps coming up in conversations with SaaS marketers right now. Their brand is getting mentioned in ChatGPT responses. That sounds like a win. Then they actually read what ChatGPT is saying -- and it's wrong. Wrong pricing. Features attributed to a competitor. A product integration that doesn't exist.

The founder behind LLMClicks documented exactly this: prospects were arriving at demo calls citing a price point 60% higher than the actual one. The AI had confidently hallucinated it. The brand ranked #3 organically. None of that mattered.

This is the part of AI visibility that most tools in 2026 still don't address. They're built to answer "are we mentioned?" -- not "is what's being said about us accurate?"

Why hallucination detection matters more than mention counting

When a traditional SEO tool tracks your rankings, accuracy isn't really a variable. Either your page appears at position 3 or it doesn't. The content of the result is your content.

AI search is different. The model synthesizes an answer from multiple sources, its training data, and its own inference. That synthesis can go wrong in ways that are genuinely damaging:

- Outdated pricing from a cached source gets presented as current fact

- A feature from a competitor's product gets attributed to yours

- An integration you deprecated two years ago gets recommended to prospects

- Your product gets suggested for use cases it explicitly doesn't support

Every one of these scenarios gets logged as a "positive mention" by most visibility trackers. The mention happened. The brand appeared. Job done.

But from a business perspective, a hallucinated mention can be worse than no mention at all. It creates false expectations, erodes trust when corrected, and in some cases actively steers prospects toward the wrong decision.

What to look for in a platform that actually detects hallucinations

Before getting into specific tools, it's worth being clear about what "hallucination detection" actually means in this context -- because vendors use the term loosely.

There are roughly three levels of capability:

Level 1: Sentiment scoring. The tool reads AI responses and labels them positive, neutral, or negative. This catches obvious bad press but misses factual errors that are framed positively ("Brand X is a great tool with enterprise-grade SSO" -- when you don't offer SSO).

Level 2: Fact comparison. The platform compares what AI says against a reference dataset -- your pricing page, feature list, or product documentation. Discrepancies get flagged. This is real hallucination detection, and it's rare.

Level 3: Continuous monitoring with alerts. The platform runs prompts on a schedule, compares outputs against your ground truth, and notifies you when something changes or conflicts. This is what enterprise brands actually need.

Most tools in 2026 are at Level 1 at best. A few are approaching Level 2. Level 3 remains genuinely uncommon.
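To make Level 2 concrete, here is a minimal Python sketch of fact comparison, plus the "only alert on what changed" step from Level 3. It assumes a hypothetical `ReferenceFacts` record you maintain yourself (published monthly prices and features you don't offer), and it only checks two kinds of claims: dollar-per-month prices and named features. It is not how LLMClicks or any other vendor implements this. Plain keyword matching will also flag a correct denial ("Brand X does not offer SSO"), which is one reason production tools need more than regular expressions.

```python
import re
from dataclasses import dataclass


# Hypothetical ground truth for "Brand X"; in practice you would build this
# from your own pricing page, feature list, or product documentation.
@dataclass(frozen=True)
class ReferenceFacts:
    monthly_prices: frozenset[float]      # every published list price, USD per month
    unsupported_features: frozenset[str]  # features you do NOT offer


# Matches claims like "$160/mo", "$99 per month", "$49.50/month".
PRICE_RE = re.compile(r"\$(\d+(?:\.\d{1,2})?)\s*(?:/|per\s+)mo(?:nth)?", re.IGNORECASE)


def find_discrepancies(response: str, facts: ReferenceFacts) -> list[str]:
    """Level 2: flag claims in one AI response that conflict with the reference facts."""
    issues = []
    for match in PRICE_RE.finditer(response):
        if float(match.group(1)) not in facts.monthly_prices:
            issues.append(f"unknown price: ${match.group(1)}/mo")
    for feature in sorted(facts.unsupported_features):
        # Word-boundary match so "SSO" does not fire inside words like "assorted".
        if re.search(rf"\b{re.escape(feature)}\b", response, re.IGNORECASE):
            issues.append(f"unsupported feature mentioned: {feature}")
    return issues


def new_issues(previous: list[str], current: list[str]) -> list[str]:
    """Level 3-style alerting: keep only discrepancies absent from the last run."""
    seen = set(previous)
    return [issue for issue in current if issue not in seen]


if __name__ == "__main__":
    facts = ReferenceFacts(frozenset({49.0, 99.0}), frozenset({"SSO"}))
    answer = "Brand X is a great tool with enterprise-grade SSO, starting at $160/mo."
    print(find_discrepancies(answer, facts))
    # ['unknown price: $160/mo', 'unsupported feature mentioned: SSO']
```

Running `find_discrepancies` on a schedule and passing each run's output through `new_issues` is the smallest version of Level 3: you hear about a conflict once, when it first appears, rather than on every run.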

The platforms worth looking at

Promptwatch

Promptwatch takes a different angle on this problem. Rather than just flagging what AI says about you, it focuses on why AI says the wrong things -- which usually comes down to missing or outdated content on your site.

The Answer Gap Analysis shows exactly which prompts competitors appear in that you don't, and which topics AI models want to answer but can't find authoritative content for on your site. That gap is often where hallucinations originate: the model has no good source, so it infers or extrapolates.

The built-in content generation agent then creates articles, comparisons, and listicles grounded in real citation data -- content specifically engineered to give AI models accurate, citable information about your brand. It's a more structural approach to the hallucination problem: instead of just detecting wrong answers, you're eliminating the conditions that produce them.

Promptwatch also includes AI crawler logs (which pages ChatGPT, Claude, and Perplexity are actually reading), page-level citation tracking, and traffic attribution to connect visibility changes to actual revenue.

LLMClicks

LLMClicks was built specifically around the hallucination problem -- the founder's own SaaS was being misrepresented in AI responses, and no existing tool caught it. The platform compares AI-generated responses against a reference dataset you provide (your pricing, features, positioning) and flags discrepancies.

It's one of the few tools that treats a factually wrong positive mention differently from a correct one. For SaaS companies with specific pricing or feature claims that AI models frequently get wrong, this is genuinely useful.

Profound

Profound is one of the more established enterprise platforms in this space. It monitors brand mentions across 9+ AI engines, tracks sentiment, and provides competitive visibility comparisons. Its hallucination detection is primarily sentiment-based rather than fact-comparison-based, but it does flag responses that contradict your brand positioning.

The platform is strong on breadth of coverage and reporting depth, which makes it popular with larger marketing teams that need to present AI visibility data to leadership.


Otterly.AI

Otterly is positioned at the more accessible end of the market. It tracks brand mentions across ChatGPT, Perplexity, and Google AI Overviews, and provides sentiment analysis. It doesn't do fact-level hallucination detection, but it's a reasonable starting point for teams that want to understand their baseline visibility before investing in deeper accuracy monitoring.


Peec AI

Peec AI focuses on tracking brand mentions and providing optimization suggestions. Its hallucination detection is limited to sentiment and basic accuracy flags, but it's worth mentioning for teams that want a mid-tier option between basic monitoring and full enterprise platforms.

Scrunch AI

Scrunch AI offers brand mention tracking across LLMs with some sentiment analysis capabilities. It's more focused on visibility breadth than accuracy depth, but it does surface cases where AI responses seem to contradict brand positioning.

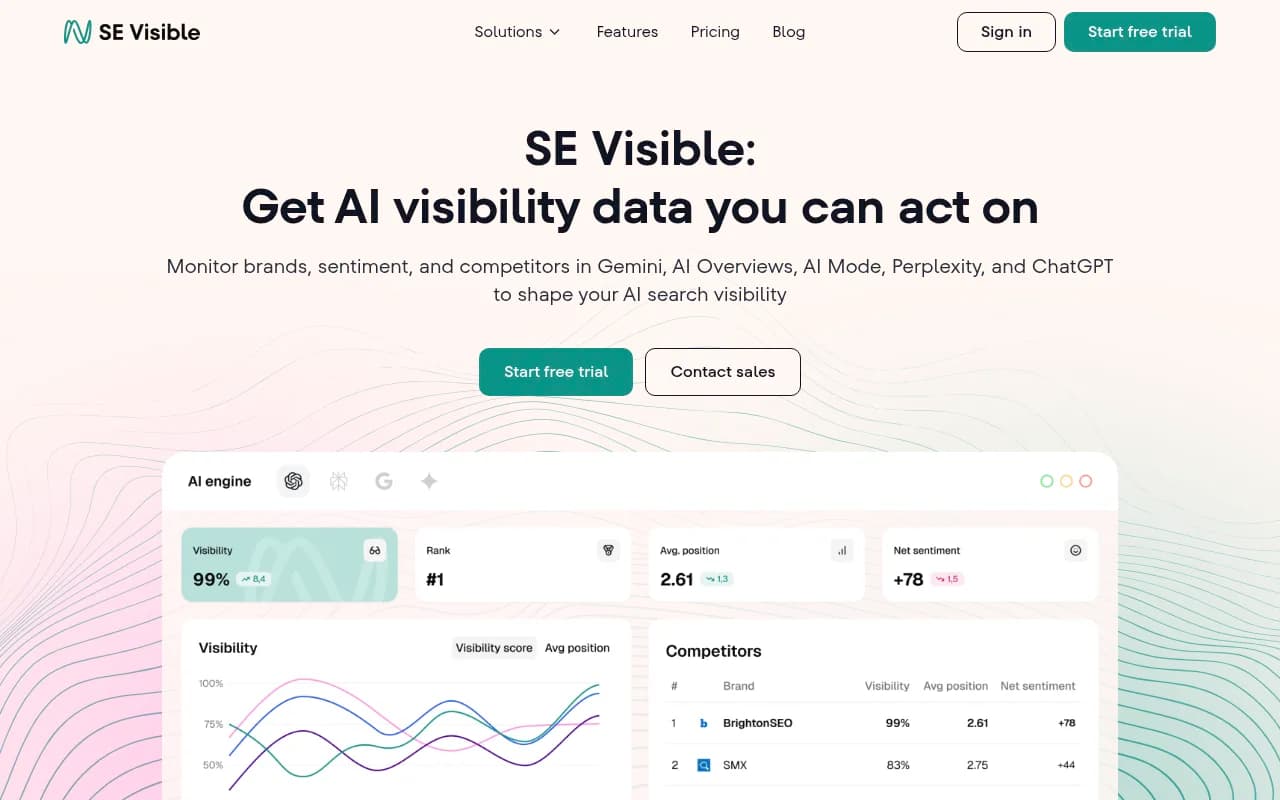

SE Visible (by SE Ranking)

SE Ranking's AI visibility module tracks brand mentions across AI engines and includes basic sentiment scoring. It's a reasonable add-on for teams already using SE Ranking for traditional SEO, though it doesn't go deep on factual accuracy.

AthenaHQ

AthenaHQ is monitoring-focused with good coverage across AI engines. It doesn't offer content generation or deep hallucination detection, but its tracking data is solid and it's used by teams that want clean, reliable mention data.

Feature comparison: which platforms catch what

| Platform | Mention tracking | Sentiment analysis | Fact-level hallucination detection | Content generation to fix gaps | AI crawler logs |
|---|---|---|---|---|---|
| Promptwatch | Yes (10 models) | Yes | Partial (via gap analysis) | Yes | Yes |
| LLMClicks | Yes | Yes | Yes (fact comparison) | No | No |
| Profound | Yes (9+ models) | Yes | Partial (sentiment-based) | No | No |
| Otterly.AI | Yes | Basic | No | No | No |
| Peec AI | Yes | Basic | No | No | No |
| Scrunch AI | Yes | Yes | Partial | No | No |
| SE Visible | Yes | Basic | No | No | No |
| AthenaHQ | Yes | Yes | No | No | No |

The honest picture: if you want true fact-level hallucination detection -- where the platform compares AI output against your actual product data -- LLMClicks is the most direct answer. If you want a platform that addresses the root cause of hallucinations (missing authoritative content) and helps you fix it, Promptwatch's approach is more comprehensive.

The root cause problem most tools ignore

Here's something worth sitting with: most AI hallucinations about brands don't come from nowhere. They come from the model having insufficient authoritative information and filling the gap with inference.

If your pricing page is buried behind a login wall, AI crawlers can't read it. If your feature documentation is thin or outdated, the model will extrapolate. If your competitors have detailed comparison pages and you don't, the model will use theirs.
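A login wall isn't the only thing that hides a page from AI crawlers; a robots.txt rule can do the same. The sketch below uses Python's standard `urllib.robotparser` to list which AI crawlers a given robots.txt would block from a URL. `GPTBot`, `ClaudeBot`, and `PerplexityBot` are the user-agent tokens OpenAI, Anthropic, and Perplexity have published for their crawlers, but treat the list as an assumption and check each vendor's current documentation. This only reports what robots.txt permits: a page behind a login is still "allowed" here even though crawlers only ever see the sign-in screen.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for OpenAI, Anthropic, and Perplexity crawlers;
# verify against each vendor's documentation for the current names.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    robots = "User-agent: GPTBot\nDisallow: /pricing\n\nUser-agent: *\nAllow: /\n"
    print(blocked_crawlers(robots, "https://example.com/pricing"))  # ['GPTBot']
```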

This is why detection alone isn't enough. Knowing that ChatGPT got your pricing wrong is useful. Knowing why it got it wrong -- and being able to create the content that fixes it -- is what actually moves the needle.

Platforms like Promptwatch approach this by analyzing which prompts AI models answer using competitor content instead of yours, then generating content specifically designed to fill those gaps. It's a more structural fix than alert-based monitoring.

What to actually do when you find a hallucination

Finding out AI is saying something wrong about your brand is step one. Here's a practical sequence for what comes next:

Check the source. Use a tool with citation analysis to see what pages AI is citing when it makes the wrong claim. Often it's an outdated blog post, a third-party review site, or a competitor comparison page.

Audit your own crawlable content. AI crawler logs (available in platforms like Promptwatch) show which of your pages AI models are actually reading. If your pricing page isn't being crawled, that's your answer.

Create authoritative content on the disputed topic. If AI keeps getting your pricing wrong, a clear, crawlable pricing FAQ that AI models can cite directly is more effective than any amount of monitoring. Same for features, integrations, and use cases.

Monitor for improvement. After publishing corrective content, track whether AI responses change over the following weeks. Page-level citation tracking shows when new content starts getting cited.

Set up alerts for recurring issues. Some hallucinations are persistent -- the model keeps returning to a bad source. Alerts let you know when the problem recurs rather than discovering it in a prospect call.
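If you don't have a platform that surfaces crawler logs, the audit step (which of your pages AI crawlers actually read) can be approximated from raw server logs. A minimal sketch, assuming Apache/Nginx "combined" log format and the same three user-agent tokens as an assumed list. User-agent strings can be spoofed, so treat the counts as indicative rather than verified.

```python
import re
import sys
from collections import Counter

# Request path and User-Agent from a "combined" format log line:
# ... "GET /path HTTP/1.1" status bytes "referer" "user-agent"
LOG_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Assumed crawler tokens; verify against each vendor's documentation.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_hits(log_lines):
    """Count requests per (crawler, path); paths keep any query string."""
    hits = Counter()
    for line in log_lines:
        match = LOG_RE.search(line)
        if match is None:
            continue  # not a GET/HEAD request in combined format
        for bot in AI_CRAWLERS:
            if bot in match.group("agent"):
                hits[(bot, match.group("path"))] += 1
    return hits


def uncrawled(pages, hits):
    """Pages you care about that no listed AI crawler requested in these logs."""
    crawled = {path for (_bot, path) in hits}
    return [page for page in pages if page not in crawled]


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python crawler_audit.py /path/to/access.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as log:
        hits = crawler_hits(log)
    for (bot, path), count in hits.most_common(20):
        print(f"{bot:15} {count:5}  {path}")
    print("Never crawled:", uncrawled(["/pricing", "/features", "/integrations"], hits))
```

If `/pricing` shows up in the "never crawled" list, you are in the situation described in the audit step: AI models may be answering pricing questions without ever having read your pricing page.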

Pricing overview (2026)

| Platform | Starting price | Free tier |
|---|---|---|
| Promptwatch | $99/mo (Essential) | Free trial |
| LLMClicks | Varies | Limited free tier |
| Profound | Enterprise pricing | Demo only |
| Otterly.AI | ~$49/mo | Free plan |
| Peec AI | ~$49/mo | Free trial |
| Scrunch AI | Custom | Demo only |
| AthenaHQ | Custom | Demo only |

Promptwatch's $99/mo Essential plan covers one site, 50 prompts, and 5 articles per month. The Professional tier at $249/mo adds crawler logs, multi-location tracking, and 15 articles. For teams that need both detection and the ability to fix what they find, that's a reasonable entry point.

Which platform is right for you

The answer depends on what problem you're actually trying to solve.

If your primary concern is knowing whether AI mentions your brand at all, and you want a simple, affordable starting point, Otterly.AI or Peec AI will do the job. They're monitoring tools, not optimization platforms, but they're honest about that.

If you've already confirmed you're being mentioned and you're worried about factual accuracy -- wrong pricing, misattributed features, hallucinated integrations -- LLMClicks is the most direct tool for that specific problem. It was built for exactly this scenario.

If you want to understand why AI gets things wrong and create content that fixes it, Promptwatch's combination of gap analysis, content generation, and crawler logs addresses the problem at a more structural level. It's the difference between treating symptoms and treating causes.

For enterprise teams that need executive-level reporting and broad model coverage, Profound is worth evaluating alongside Promptwatch.

The one thing to avoid: treating any mention as a good mention without reading what was actually said. In AI search, being mentioned with wrong information isn't a win -- it's a liability you haven't discovered yet.