Key takeaways

- Multi-model tracking is the baseline requirement for any serious GEO platform in 2026 -- a tool that only monitors one or two AI engines gives you an incomplete picture.

- Most platforms stop at monitoring. The ones worth paying for go further: they show you why you're missing from AI responses and help you fix it.

- ChatGPT, Claude, Gemini, and Perplexity each have different citation behaviors -- what gets you cited in one won't automatically work in another.

- Promptwatch is the only platform currently rated as a "Leader" across all GEO categories, combining multi-model tracking with content gap analysis and an AI writing agent.

- Pricing ranges from free tiers (basic monitoring only) to $500+/month for enterprise-grade platforms with full optimization workflows.

Why multi-model tracking actually matters

Here's the uncomfortable truth about AI search in 2026: your brand can be invisible on ChatGPT and prominent on Perplexity at the same time. Or cited heavily in Gemini's AI Overviews but completely absent from Claude's recommendations. These aren't edge cases -- they're the norm.

Each AI model has its own training data, retrieval logic, and citation preferences. ChatGPT tends to favor well-structured, authoritative content with clear entity signals. Perplexity is more aggressive about pulling from recent web sources. Gemini leans on Google's existing authority signals. Claude tends to reward comprehensive, well-reasoned content over thin pages. Tracking just one of these gives you a false sense of security (or panic, depending on which one you check).

That's why multi-model tracking isn't a nice-to-have feature -- it's the minimum viable requirement for understanding your actual AI search presence. If a platform only queries one or two models, you're flying half-blind.

The second problem: most platforms that do track multiple models stop there. They show you a dashboard of visibility scores and call it a day. That's useful for reporting, but it doesn't tell you what to do next. The platforms worth investing in connect the monitoring data to actual content decisions.

What to look for in a multi-model GEO platform

Before diving into specific tools, here's what separates genuinely useful platforms from dashboards that look impressive but don't move the needle:

Model coverage: Does it track ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews at minimum? Bonus points for Grok, DeepSeek, Copilot, and Meta AI.

Prompt-level data: Aggregate visibility scores are fine for executive reports. But you need prompt-level data -- which specific questions trigger your brand, which ones don't, and what the AI says when it does mention you.

Competitor comparison: Knowing your own score in isolation is almost meaningless. You need to see how you stack up against competitors for the same prompts.

Content gap analysis: This is where most tools fall short. Showing you that you're invisible for a prompt is step one. Showing you what content would fix that is step two -- and most platforms never get there.

Traffic attribution: Can you connect AI visibility to actual website visits and revenue? Without this, GEO is just a vanity metric.

Crawler logs: Do you know which AI bots are crawling your site, how often, and which pages they're reading? This is foundational for technical GEO.
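To make the crawler-log criterion concrete, here is a minimal sketch of how you might answer "which AI bots read which pages, and where do they hit errors" from your own server logs. It assumes combined-format access logs, and the user-agent tokens listed are illustrative examples only, so check each vendor's documentation for the current names. The function and variable names are hypothetical and do not reflect how any platform in this article implements the feature.

```python
import re
from collections import Counter

# Illustrative user-agent substrings (an assumption, not a complete list).
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Pulls the request path, status code, and user agent out of a
# combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits and error responses per (crawler, path) pair."""
    hits = Counter()
    errors = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent")
        bot = next((t for t in AI_CRAWLER_TOKENS if t in agent), None)
        if bot is None:
            continue  # not one of the crawlers we are watching
        key = (bot, match.group("path"))
        hits[key] += 1
        if int(match.group("status")) >= 400:
            errors[key] += 1
    return hits, errors
```

Feeding it the lines of an access log gives you two counters: one showing how often each bot requested each page, and one showing which of those requests returned a 4xx or 5xx status.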

The top multi-model GEO platforms in 2026

Promptwatch

Promptwatch monitors 10 AI models simultaneously: ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Google AI Mode, Grok, DeepSeek, Copilot, and Meta/Llama. That's the broadest coverage of any platform in this category.

What separates it from the rest isn't just the model count -- it's what happens after the monitoring. The Answer Gap Analysis shows you exactly which prompts competitors are visible for that you're not, down to the specific content your site is missing. The built-in AI writing agent then generates articles, listicles, and comparisons grounded in an analysis of 880M+ citations, along with prompt volumes and competitor data. You close the loop with page-level tracking that shows which pages are being cited, by which models, and how often.

The crawler logs feature is worth calling out specifically. It gives you real-time logs of AI crawlers (ChatGPT, Claude, Perplexity, etc.) hitting your site: which pages they read, which errors they encounter, and how often they return. Most competitors don't have this at all.

Pricing: Essential at $99/mo (1 site, 50 prompts), Professional at $249/mo (2 sites, 150 prompts, crawler logs), Business at $579/mo (5 sites, 350 prompts). Free trial available.

Profound

Profound is a strong enterprise option, particularly for larger teams that need deep analytics and stakeholder-ready reporting. It covers the major AI models and has solid prompt tracking capabilities. The platform is well-regarded for its data depth.

The tradeoff: it's positioned at the higher end of the pricing spectrum, and it doesn't have the content generation capabilities that Promptwatch offers. It's a monitoring and analytics platform first. If your team already has a content workflow and just needs the visibility data to feed into it, Profound is worth evaluating.

Otterly.AI

Otterly.AI covers ChatGPT, Perplexity, Claude, and Google AI Overviews. The interface is clean and the setup is fast -- you can get tracking running in minutes. It's a popular choice for teams that want basic multi-model monitoring without a steep learning curve.

The limitation is that it stays in monitoring territory. There's no content gap analysis, no AI writing tools, no crawler logs, and no traffic attribution. It's a good starting point for teams new to GEO, but you'll likely outgrow it once you want to actually act on the data.

Peec AI

Peec tracks brand and competitor visibility across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews. The reporting is clean and client-ready, which makes it a reasonable choice for agencies that need to show clients their AI visibility scores.

Like Otterly.AI, it's primarily a monitoring tool. The data is solid, but the platform doesn't help you figure out what to do with it. For agencies that want to add GEO reporting to their service offering without building out a full optimization workflow, Peec is worth a look.

AthenaHQ

AthenaHQ has built a reputation for clean, well-organized AI visibility data. It covers the major models and gives you prompt-level visibility breakdowns. The competitor comparison features are decent.

The gap: like most monitoring-focused platforms, it doesn't bridge into content optimization or generation. You can see where you're losing -- you just don't get much help fixing it.

Scrunch AI

Scrunch AI covers multiple AI models and has particularly strong enterprise features around data export and API access. It's used by larger teams that need to integrate AI visibility data into existing reporting infrastructure.

The content optimization side is limited compared to Promptwatch, but if your primary need is reliable multi-model data that feeds into custom dashboards or BI tools, Scrunch is worth evaluating.

Semrush AI Toolkit

Semrush has added AI visibility tracking to its existing SEO platform. The advantage is obvious: if you're already a Semrush user, there's zero onboarding friction and you get GEO monitoring alongside your traditional SEO data in one place.

The limitations are real, though. The AI tracking uses fixed prompts rather than letting you define your own, and there's no AI traffic attribution. It's a solid add-on for existing Semrush users, but not a reason to choose Semrush if you're evaluating platforms fresh.

Rankshift

Rankshift is built specifically for AI visibility monitoring and covers ChatGPT, Perplexity, and several other models. It's positioned as a dedicated LLM tracking tool with a clean interface and straightforward setup.

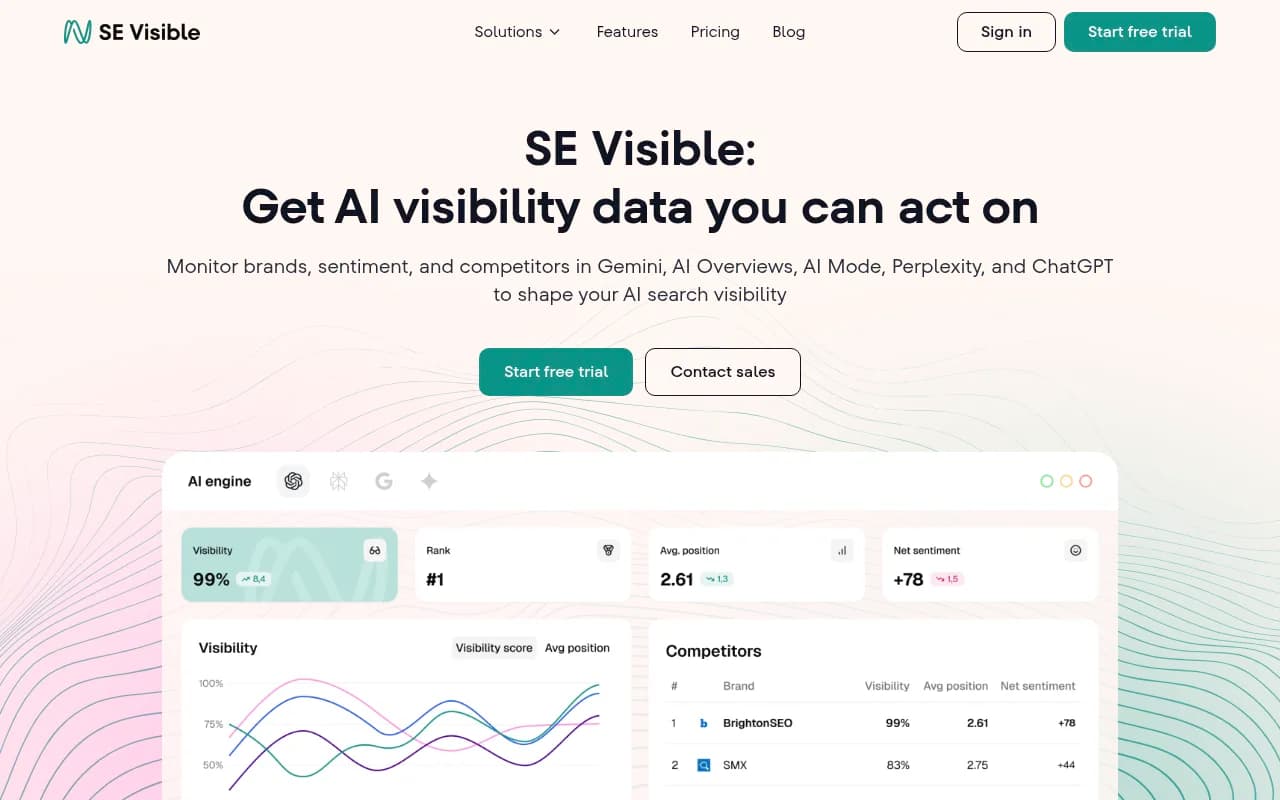

SE Visible (by SE Ranking)

SE Ranking's AI visibility module tracks brand mentions across several AI engines. It's a natural extension for teams already using SE Ranking for traditional SEO. The monitoring data is reliable, though like most add-on AI tracking features, it doesn't extend into content optimization territory.

AirOps

AirOps takes a different angle -- it's more of a content engineering platform that helps you create content designed to rank in AI search. It's less of a pure monitoring tool and more of a workflow platform for teams that want to systematically produce AI-optimized content at scale.

Head-to-head comparison

| Platform | Models tracked | Prompt-level data | Competitor comparison | Content gap analysis | AI content generation | Crawler logs | Traffic attribution | Starting price |
|---|---|---|---|---|---|---|---|---|
| Promptwatch | 10 | Yes | Yes | Yes | Yes | Yes | Yes | $99/mo |
| Profound | 9+ | Yes | Yes | Limited | No | No | No | Enterprise |
| Otterly.AI | 4-5 | Yes | Yes | No | No | No | No | ~$99/mo |
| Peec AI | 5 | Yes | Yes | No | No | No | No | ~$79/mo |
| AthenaHQ | 4-5 | Yes | Yes | No | No | No | No | Custom |
| Scrunch AI | 5+ | Yes | Yes | No | No | No | No | Custom |
| Semrush AI Toolkit | 5 | Limited | Yes | No | No | No | No | Add-on |
| SE Visible | 4 | Yes | Yes | No | No | No | No | Add-on |
| AirOps | 4-5 | Limited | Limited | Yes | Yes | No | No | Custom |

The monitoring-only trap

Something worth saying directly: a lot of teams buy a GEO monitoring tool, get excited about the dashboard, and then... nothing changes. Visibility scores stay flat. The tool gets used for monthly reporting but doesn't actually drive any action.

This happens because monitoring data without a clear path to action is just information. Knowing that you're invisible for "best project management software for remote teams" on ChatGPT is useful. But if the platform doesn't tell you what content would fix that and help you create it, you're left doing that work manually -- which most teams don't have bandwidth for.

The platforms that break this pattern are the ones that close the loop: find the gap, generate the content, track whether it worked. That's a fundamentally different product than a monitoring dashboard, and it's why the distinction between "monitoring-only" and "optimization platform" matters when you're evaluating tools.
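The "find the gap" step is simpler than it sounds once you have prompt-level data. As a rough sketch, assume you have already recorded which brands each AI response mentioned for each tracked prompt (the `visibility` mapping and the `answer_gaps` name below are hypothetical, not any vendor's API). The gaps are then the prompts where at least one competitor shows up and you don't:

```python
def answer_gaps(visibility, brand, competitors):
    """Return prompts where competitors are mentioned but `brand` is not.

    visibility: dict mapping each prompt to the set of brands that
                appeared in the AI response for that prompt.
    """
    rivals = set(competitors)
    gaps = {}
    for prompt, mentioned in visibility.items():
        if brand in mentioned:
            continue  # already visible for this prompt, so no gap
        present_rivals = sorted(mentioned & rivals)
        if present_rivals:
            gaps[prompt] = present_rivals
    return gaps
```

The output is a to-do list rather than a score: each entry is a prompt you are losing, paired with the competitors who are winning it. Deciding what content would close each gap is the harder part, and that is the work the optimization-focused platforms try to automate.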

How different AI models cite content differently

One thing multi-model tracking reveals quickly: your visibility scores will look very different across models, and the reasons aren't always obvious.

ChatGPT (especially with GPT-4o and in web-browsing mode) tends to favor pages that clearly answer specific questions, have structured formatting, and come from domains with established authority signals. It also pulls heavily from sources it's seen cited repeatedly across the web.

Perplexity is more aggressive about real-time web retrieval. Fresh content, recent publications, and pages that are actively being crawled tend to perform better here. It's also more likely to cite niche sources if they directly answer the query.

Gemini leans on Google's existing quality signals -- E-E-A-T, structured data, and the same authority factors that drive traditional Google rankings. If you're already ranking well in Google, you have a head start with Gemini.

Claude is selective in a way that's harder to reverse-engineer. It tends to favor content that's comprehensive, well-reasoned, and demonstrates genuine expertise. Thin content that ranks in Google often doesn't get cited by Claude.

This is why tracking all four simultaneously is so valuable -- you can see which model you're winning or losing in, and start to understand why.
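For a sense of what a per-model comparison involves, here is a minimal sketch that turns raw responses into a mention rate per model. It assumes you have collected `(model, prompt, response_text)` records yourself; the function name and record shape are hypothetical. It also uses a plain case-insensitive substring match, which is a deliberate simplification: it will miss misspellings and can over-count brand names that are also common words.

```python
from collections import defaultdict


def mention_rate_by_model(observations, brand):
    """Share of responses per model that mention `brand`.

    observations: iterable of (model, prompt, response_text) tuples.
    """
    totals = defaultdict(int)
    mentions = defaultdict(int)
    needle = brand.lower()
    for model, _prompt, text in observations:
        totals[model] += 1
        if needle in text.lower():
            mentions[model] += 1
    # Each model in `totals` has at least one observation, so no division by zero.
    return {model: mentions[model] / totals[model] for model in totals}
```

Running the same prompt set through each model and comparing the resulting rates shows where you are winning or losing. Explaining why still means reading the individual responses.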

Who needs multi-model tracking vs. single-model monitoring

Not every team needs a full 10-model tracking setup on day one. Here's a rough guide:

If you're a small brand or solo marketer just getting started with GEO, a basic tool that tracks ChatGPT and Perplexity is a reasonable starting point. The cost is lower and you'll learn the fundamentals.

If you're a marketing team at a mid-size company, you need at least 4-5 models covered -- ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews. These are where the majority of AI search volume lives right now. You also need competitor comparison and some form of content gap analysis, otherwise the data doesn't translate into action.

If you're an agency managing multiple clients, you need multi-site support, clean client reporting, and ideally white-label options. Platforms like Promptwatch offer agency/enterprise pricing for this use case.

If you're an enterprise brand, you need the full stack: broad model coverage, prompt volume data, crawler logs, traffic attribution, and API access for custom reporting. The monitoring-only platforms won't cut it at this level.

The bottom line

Multi-model tracking is table stakes in 2026. Any GEO platform worth considering should cover at least ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews. The real differentiator is what happens after the monitoring.

Most platforms show you the problem. The ones that help you fix it -- with content gap analysis, AI writing tools, and closed-loop attribution -- are in a different category. That's a short list right now, and Promptwatch sits at the top of it.

If you're evaluating platforms, start by asking: "After I see my visibility scores, what does this tool help me do about them?" The answer will tell you everything.