Key takeaways

- Real-time monitoring catches citation changes in AI models hours or days faster than scheduled approaches, but it costs more and adds complexity

- Scheduled monitoring (daily or weekly) is sufficient for most brands tracking stable, informational queries -- real-time matters most when you're in a competitive category or running active content campaigns

- ChatGPT and Perplexity update their responses more frequently than Claude or Gemini, making real-time monitoring more valuable for those platforms specifically

- The biggest gap in most monitoring setups isn't speed -- it's knowing what to do when you spot a change

- Tools like Promptwatch close that gap by pairing visibility tracking with content gap analysis and AI content generation, so you can act on what you find

Why the speed of detection actually matters

Here's something most brands don't think about: AI models don't have static answers. ChatGPT, Claude, Perplexity, and Gemini are constantly updating their responses based on new training data, retrieval-augmented generation (RAG) pipelines, and model updates. A response that mentioned your brand prominently last Tuesday might not mention you at all today.

The question is: how quickly do you find out?

If you're running weekly scheduled checks, you could go six days without knowing a competitor displaced you in ChatGPT's recommendations for your core category. If you're in a high-velocity space -- SaaS, fintech, travel, e-commerce -- that's six days of lost consideration from users who asked AI for a recommendation and got pointed somewhere else.

Real-time monitoring changes that math. But it also costs more, requires more infrastructure, and generates a lot of noise if you're not set up to act on alerts. So the right answer depends heavily on your situation.

Let's break down how each approach actually works.

How scheduled monitoring works

Scheduled monitoring runs queries against AI models at fixed intervals -- typically daily, weekly, or in some tools, every few hours. You define a set of prompts ("What are the best tools for X?", "Which brands are recommended for Y?"), and the platform runs those prompts on a schedule and logs the responses.
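
Here's a minimal sketch of that loop. It assumes a hypothetical `ask_model` callable that stands in for whatever API client or platform you actually use (it is not a real library function), and the brand names are placeholders. A scheduler such as cron would call `run_scheduled_check` at your chosen interval.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass
class CheckResult:
    checked_at: datetime
    model: str
    prompt: str
    brand_mentioned: bool


def run_scheduled_check(
    prompts: list[str],
    brand: str,
    model: str,
    ask_model: Callable[[str, str], str],  # hypothetical: (model, prompt) -> response text
) -> list[CheckResult]:
    """Run every prompt once and record whether the brand appears in the answer."""
    results = []
    for prompt in prompts:
        response = ask_model(model, prompt)
        results.append(
            CheckResult(
                checked_at=datetime.now(timezone.utc),
                model=model,
                prompt=prompt,
                brand_mentioned=brand.lower() in response.lower(),
            )
        )
    return results


# Stub used only for illustration; a real setup would query an AI model here.
def fake_ask_model(model: str, prompt: str) -> str:
    return "Popular options include Acme and Globex."


log = run_scheduled_check(
    ["What are the best tools for X?"], "Acme", "example-model", fake_ask_model
)
```

A plain substring match is the simplest possible mention check. Real responses may need fuzzier matching (product names, abbreviations, linked citations).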

The advantages are real:

- Lower cost per query (you're not hammering APIs continuously)

- Cleaner data with less noise from response variability

- Easier to spot trends over time rather than reacting to every fluctuation

- Most platforms support this model, so you have more tool options

The downside is obvious: you're always working with stale data. If Claude drops your brand from a key recommendation on Monday and your weekly check runs on Sundays, you don't find out until Sunday. That's nearly a week of invisible brand damage.

For most brands tracking 50-150 prompts across multiple AI models, daily scheduled monitoring is the practical sweet spot. It catches most meaningful changes within 24 hours without the cost and complexity of real-time systems.
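
The lag behind each cadence is simple arithmetic. As a rough sketch, assuming a change is equally likely to happen at any moment between two checks (an assumption for illustration, not something measured), the worst-case delay equals the check interval and the average delay is half of it:

```python
def detection_lag_hours(interval_hours: float) -> tuple[float, float]:
    """Return (worst_case, average) hours before a change is noticed.

    Assumes changes occur uniformly at random between two checks.
    """
    return interval_hours, interval_hours / 2


for name, hours in [("hourly", 1), ("daily", 24), ("weekly", 168)]:
    worst, average = detection_lag_hours(hours)
    print(f"{name}: worst case {worst:.0f}h, average {average:.1f}h")
```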

How real-time monitoring works

Real-time monitoring (or near-real-time, which is more accurate) runs queries continuously or on very short intervals -- think every few minutes to every few hours. Some platforms use change detection algorithms that only alert you when a response meaningfully shifts, rather than logging every single query.
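
One way such change detection could work, offered as a sketch rather than a description of any vendor's algorithm: reduce each response to the set of tracked brands it mentions, and alert only when that set differs from the previous check. The brand names and the `detect_change` helper are hypothetical.

```python
def mentioned_brands(response: str, tracked: list[str]) -> set[str]:
    """Return the tracked brands that appear in a response (case-insensitive)."""
    text = response.lower()
    return {brand for brand in tracked if brand.lower() in text}


def detect_change(previous: str, current: str, tracked: list[str]) -> dict[str, set[str]]:
    """Compare two responses and report brands that were added or dropped."""
    before = mentioned_brands(previous, tracked)
    after = mentioned_brands(current, tracked)
    return {"added": after - before, "dropped": before - after}


tracked = ["Acme", "Globex", "Initech"]
change = detect_change(
    "We recommend Acme and Globex for most teams.",
    "Top picks are Globex and Initech.",
    tracked,
)
if change["added"] or change["dropped"]:
    print("Alert:", change)
```

Comparing brand sets instead of raw text means a reworded answer that still names the same brands doesn't trigger an alert.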

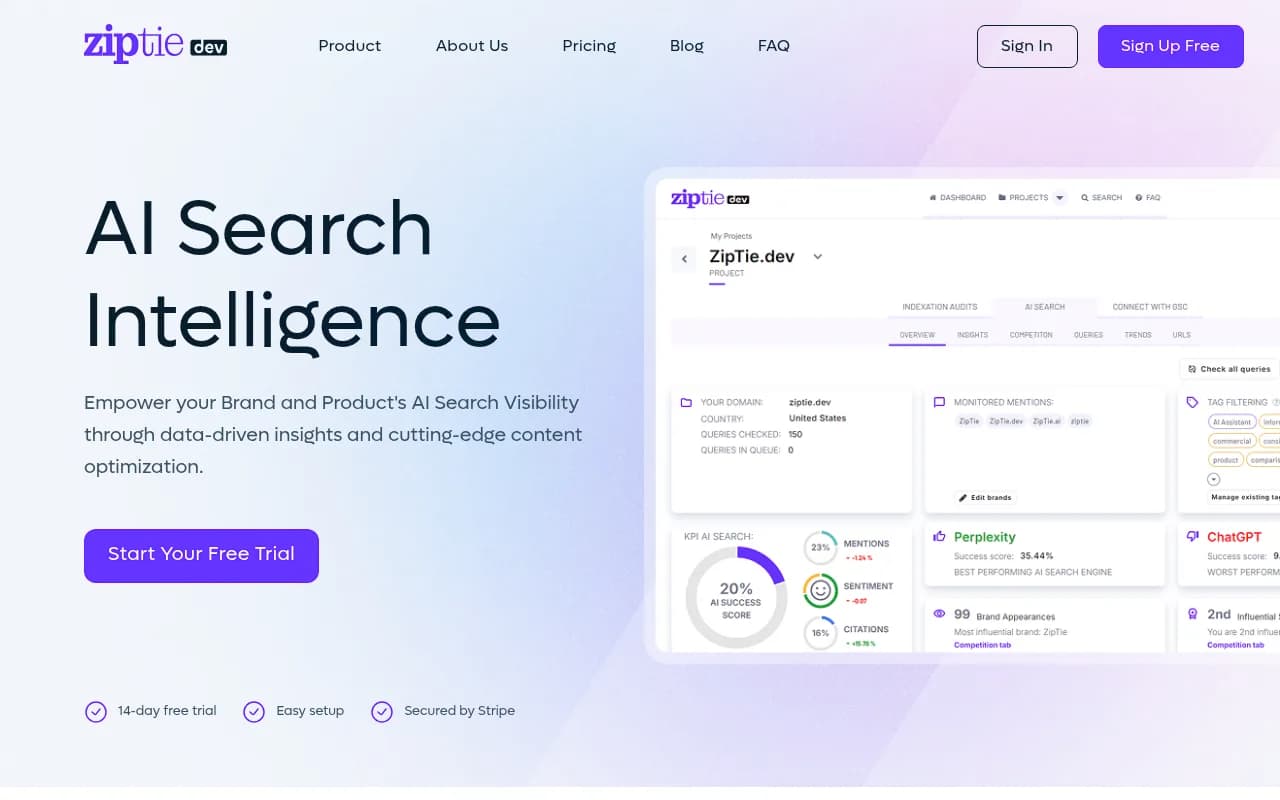

ZipTie.dev describes this approach well: "Real-time Perplexity monitoring with change detection catches dynamic answer changes faster than daily or weekly alternatives, critical when visibility can shift within hours."

The advantages for specific use cases are significant:

- You catch citation drops within hours, not days

- You can correlate visibility changes with specific events (a competitor publishing new content, a model update, a news cycle)

- You can run rapid experiments -- publish content, then watch whether AI models start citing it within 24-48 hours

- Competitive intelligence becomes much more actionable when you're seeing changes as they happen

The tradeoffs: real-time monitoring is expensive at scale (API costs add up fast), harder to interpret (AI responses have natural variability that can look like meaningful changes), and most tools that offer it charge significantly more.
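
To keep natural variability from looking like a real change, one option is to sample the same prompt several times and treat the brand as visible only if it appears in most of the samples. This is a sketch under that assumption; the 0.5 threshold and the sample responses are illustrative, not recommended values.

```python
def mention_rate(responses: list[str], brand: str) -> float:
    """Fraction of sampled responses that mention the brand."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r.lower() for r in responses)
    return hits / len(responses)


def is_visible(responses: list[str], brand: str, threshold: float = 0.5) -> bool:
    """Treat the brand as visible when it appears in more than `threshold` of samples."""
    return mention_rate(responses, brand) > threshold


samples = [
    "Try Acme or Globex.",
    "Globex is a solid choice.",
    "Acme and Initech are both popular.",
]
print(mention_rate(samples, "Acme"), is_visible(samples, "Acme"))
```

The catch is cost: every extra sample is another query, which is part of why real-time monitoring gets expensive at scale.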

Which AI models change their responses most frequently?

This is where it gets interesting, and where the real-time vs scheduled debate gets more nuanced.

Not all AI models are equal in terms of response volatility:

Perplexity changes responses most frequently because it's retrieval-based -- it's actively pulling from the web in real time. A new article, a Reddit thread, a press release can shift Perplexity's answer within hours. Real-time monitoring here has the most value.

ChatGPT (particularly with browsing enabled or in its search mode) also updates frequently, though its base model responses are more stable. The shopping and product recommendation features are especially volatile.

Claude tends to be more stable in its responses because Anthropic updates its models less frequently and Claude relies more on training data than live retrieval. Daily monitoring is usually sufficient here.

Google AI Overviews and AI Mode can shift rapidly because they're tied to Google's live index. Changes here often correlate with traditional SEO changes on the underlying pages.

Gemini sits somewhere in the middle -- more stable than Perplexity, less stable than Claude.

This means a tiered approach often makes the most sense: real-time or near-real-time for Perplexity and ChatGPT Search, daily scheduled for Claude and Gemini.
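
A tiered cadence like this can be written down as a small configuration. The intervals below are assumptions chosen for illustration (hourly for the retrieval-driven platforms, daily for the rest), and `models_due` is a hypothetical helper, not part of any tool named in this article.

```python
from datetime import datetime, timedelta

# Illustrative intervals only; tune them to your budget and category.
CHECK_INTERVALS = {
    "perplexity": timedelta(hours=1),
    "chatgpt-search": timedelta(hours=1),
    "claude": timedelta(days=1),
    "gemini": timedelta(days=1),
}


def models_due(last_checked: dict[str, datetime], now: datetime) -> list[str]:
    """Return the models whose check interval has elapsed since their last run."""
    due = []
    for model, interval in CHECK_INTERVALS.items():
        last = last_checked.get(model)
        # A model that has never been checked is always due.
        if last is None or now - last >= interval:
            due.append(model)
    return due


now = datetime(2026, 1, 5, 12, 0)
last_checked = {
    "perplexity": now - timedelta(hours=2),
    "chatgpt-search": now - timedelta(minutes=30),
    "claude": now - timedelta(hours=20),
}
print(models_due(last_checked, now))
```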

The real problem: detection without action

Here's what most monitoring discussions miss. Whether you detect a citation change in 5 minutes or 5 days, the harder question is: what do you actually do about it?

Most monitoring tools will tell you that your brand dropped from a ChatGPT recommendation. Very few will tell you why, or help you fix it.

The "why" usually comes down to one of a few things:

- A competitor published content that better answers the prompt

- Your existing content doesn't directly address the question being asked

- AI models can't find clear, structured information about your product for that specific use case

- A third-party source (Reddit, a review site, an industry publication) that was citing you has been updated or replaced

The "fix" requires understanding which specific content gaps exist, then creating content that AI models will actually cite. That's a content strategy problem, not a monitoring problem.

This is why platforms that combine monitoring with content gap analysis and content generation are more useful than pure trackers. Promptwatch's Answer Gap Analysis, for example, shows you exactly which prompts competitors are visible for that you're not -- and its built-in writing agent can generate content engineered to close those gaps. That's a fundamentally different workflow than getting an alert that your visibility score dropped.
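
The core idea behind a gap analysis can be expressed as a set difference. This is a simplified sketch of the concept, not Promptwatch's actual implementation; the `visibility` data, prompts, and brand names are made up for illustration.

```python
def answer_gaps(visibility: dict[str, set[str]], brand: str) -> dict[str, set[str]]:
    """Map each prompt where `brand` is absent to the competitors that do appear.

    `visibility` maps a prompt to the set of brands mentioned in the response.
    Prompts where no brand appears at all are skipped.
    """
    return {
        prompt: set(brands)
        for prompt, brands in visibility.items()
        if brands and brand not in brands
    }


visibility = {
    "best CRM for startups": {"Globex", "Initech"},
    "best CRM for enterprises": {"Acme", "Globex"},
    "cheapest CRM": set(),
}
gaps = answer_gaps(visibility, "Acme")
print(gaps)
```

Each prompt in `gaps` is a candidate content opportunity: competitors are being recommended there and you are not.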

Comparing the main approaches

| Approach | Detection speed | Cost | Best for | Platforms that support it |
|---|---|---|---|---|
| Real-time (minutes) | Fastest | High | Competitive categories, active campaigns | ZipTie, Promptwatch (crawler logs) |
| Near-real-time (hourly) | Fast | Medium-high | High-velocity brands, Perplexity tracking | Rankshift, LLM Pulse |
| Daily scheduled | 24h lag | Medium | Most brands, stable categories | Promptwatch, Otterly.AI, Profound, LLMrefs |
| Weekly scheduled | 7-day lag | Low | Low-priority monitoring, early-stage brands | Most tools |
| Manual spot checks | Variable | Very low | One-off research, no budget | Any AI model directly |

Tools worth knowing about in 2026

For real-time and near-real-time monitoring

ZipTie.dev focuses specifically on real-time Perplexity and ChatGPT monitoring with change detection. It's built for brands that need to catch dynamic answer changes quickly rather than just tracking trends.

Rankshift tracks brand visibility across ChatGPT, Perplexity, and AI search with a focus on actionable rank data. Good for teams that want clean, fast data without a lot of setup overhead.

LLM Pulse covers ChatGPT, Perplexity, and other AI search engines with visibility tracking that's designed to surface changes as they happen rather than just weekly summaries.

For scheduled monitoring with depth

Profound is one of the more established enterprise-grade platforms, tracking brand mentions across 9+ AI engines. It's monitoring-focused and strong on data depth, though it doesn't include content generation to help you act on what you find.

Otterly.AI tracks brand mentions across ChatGPT, Perplexity, and Google AI Overviews. It's a solid monitoring tool for teams that want straightforward visibility data on a scheduled basis.

LLMrefs takes a keyword-based approach that mirrors traditional SEO reporting, which makes it easy to plug into existing workflows. It runs on scheduled queries and gives you share of voice and citation data across multiple AI engines.

Peec AI covers ChatGPT, Perplexity, and Claude with daily monitoring. It's a clean, accessible option for teams that are earlier in their AI visibility journey.

For monitoring plus action

Promptwatch is the platform that goes furthest beyond pure monitoring. It tracks 10 AI models (including ChatGPT, Claude, Perplexity, Gemini, Grok, and DeepSeek), but the real differentiator is what happens after you spot a gap. The Answer Gap Analysis shows you exactly which prompts competitors are winning that you're not. The built-in AI writing agent generates content grounded in an analysis of 880M+ citations -- articles and comparisons designed to get cited, not just indexed. And AI Crawler Logs show you in real time which AI crawlers are hitting your pages and what they're reading. It's the difference between a dashboard and an optimization system.

How to decide which approach is right for you

Run through these questions:

How competitive is your category? If you're in a space where 5+ brands are actively competing for AI recommendations, real-time or daily monitoring is worth the cost. If you're in a niche with little AI visibility competition, weekly is fine.

Are you running active content experiments? If you're publishing content specifically to improve AI visibility, you need faster feedback loops. Daily at minimum, near-real-time if you can afford it. You want to know within 48 hours whether a new article is getting cited.

Which AI models matter most for your audience? If your customers are heavy Perplexity users, real-time monitoring there is more valuable than for Claude. Know where your audience is asking questions.

Do you have the bandwidth to act on alerts? Real-time monitoring that generates alerts you can't act on is just noise. If your team can only respond to visibility changes once a week, weekly monitoring is actually more appropriate -- you're not missing anything actionable.

What's your current baseline? If you don't know whether you're being cited at all, start with daily scheduled monitoring across your core prompts. Get a baseline. Then decide whether you need faster detection.
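
If it helps to see those questions as a single decision, here's one way to encode them. The order in which the rules are checked is an assumption made for this sketch (the questions above don't rank themselves), and `recommend_cadence` is a hypothetical helper.

```python
def recommend_cadence(
    competitors: int,
    running_experiments: bool,
    can_act_daily: bool,
    has_baseline: bool,
    has_realtime_budget: bool,
) -> str:
    """Suggest a monitoring cadence from the questions above (illustrative ordering)."""
    if not has_baseline:
        return "daily"  # get a baseline before optimizing for speed
    if not can_act_daily:
        return "weekly"  # faster alerts would only add noise
    if running_experiments or competitors >= 5:
        return "near-real-time" if has_realtime_budget else "daily"
    return "weekly"  # niche category with little competition


print(recommend_cadence(
    competitors=6,
    running_experiments=False,
    can_act_daily=True,
    has_baseline=True,
    has_realtime_budget=False,
))
```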

A practical setup for most brands in 2026

For a mid-sized brand with a real AI visibility goal (not just curiosity), here's what actually makes sense:

- Define 50-150 prompts that represent how your customers ask AI for recommendations in your category. Include branded and unbranded queries.

- Run daily scheduled monitoring across ChatGPT, Perplexity, Claude, and Google AI Overviews at minimum. These four cover the vast majority of AI search traffic.

- Set up AI crawler log monitoring so you know when ChatGPT, Claude, and Perplexity are actually crawling your pages -- this is a leading indicator of citation changes, not a lagging one.

- Use answer gap analysis to find the prompts where competitors are visible and you're not. These are your highest-priority content opportunities.

- Publish content specifically targeting those gaps, then watch your daily monitoring data to see whether citations follow within 2-4 weeks.

- Add real-time monitoring for Perplexity specifically if you're in a fast-moving category and have the budget.
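
For the crawler log step, a basic version can be built from your own web server logs. The sketch below assumes combined-format access logs and matches on the user-agent substrings `GPTBot`, `ClaudeBot`, and `PerplexityBot`. The sample log lines are invented and their user-agent strings are simplified, so check each vendor's documentation for the exact strings and current crawler list.

```python
import re
from collections import Counter

# User-agent substrings to look for; verify against each vendor's documentation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Captures the request path and the final quoted field (the user agent).
LOG_LINE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*".*"(?P<agent>[^"]*)"\s*$'
)


def crawler_hits(lines: list[str]) -> Counter:
    """Count (crawler, path) pairs for requests made by known AI crawlers."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        for crawler in AI_CRAWLERS:
            if crawler in match["agent"]:
                hits[(crawler, match["path"])] += 1
    return hits


# Invented sample lines for illustration.
sample_log = [
    '203.0.113.5 - - [05/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [05/Jan/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.7 - - [05/Jan/2026:10:02:00 +0000] "GET /blog/guide HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
]
print(crawler_hits(sample_log))
```

Counting by page shows which content AI crawlers are actually reading, which you can then line up against the prompts you're tracking.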

The speed of detection matters less than the quality of your response to what you detect. A team that checks weekly and has a clear content strategy will outperform a team with real-time alerts and no plan for what to do with them.

The bottom line

Real-time monitoring wins on speed. Scheduled monitoring wins on cost and simplicity. But neither approach matters much if you're just watching numbers change without a system for improving them.

The brands gaining ground in AI search in 2026 aren't the ones with the fastest alerts -- they're the ones who spot a gap, create content that directly addresses it, and track whether AI models start citing that content. The monitoring approach is just the first step in that loop.

Pick the cadence that matches your competitive intensity and your team's capacity to act. Then make sure the tool you're using can help you do something about what you find.