Key takeaways

- MCP (Model Context Protocol) is now the default integration standard for connecting AI models to external tools and data -- adopted by OpenAI, Google, Microsoft, and thousands of enterprise teams by mid-2025.

- Most AI search visibility platforms are still monitoring-only dashboards with no MCP support, leaving users to manually export data and build their own workflows.

- A small group of platforms -- including Promptwatch, Botify, and a few others -- are moving toward agentic, action-oriented architectures that MCP makes possible.

- MCP matters for GEO (generative engine optimization) teams because it enables AI agents to automatically pull visibility data, trigger content creation, and close the optimization loop without manual handoffs between steps.

- If your AI visibility tool doesn't have an API or MCP integration roadmap, you're likely locked into a passive monitoring workflow that won't scale.

What MCP actually is (and why it matters here)

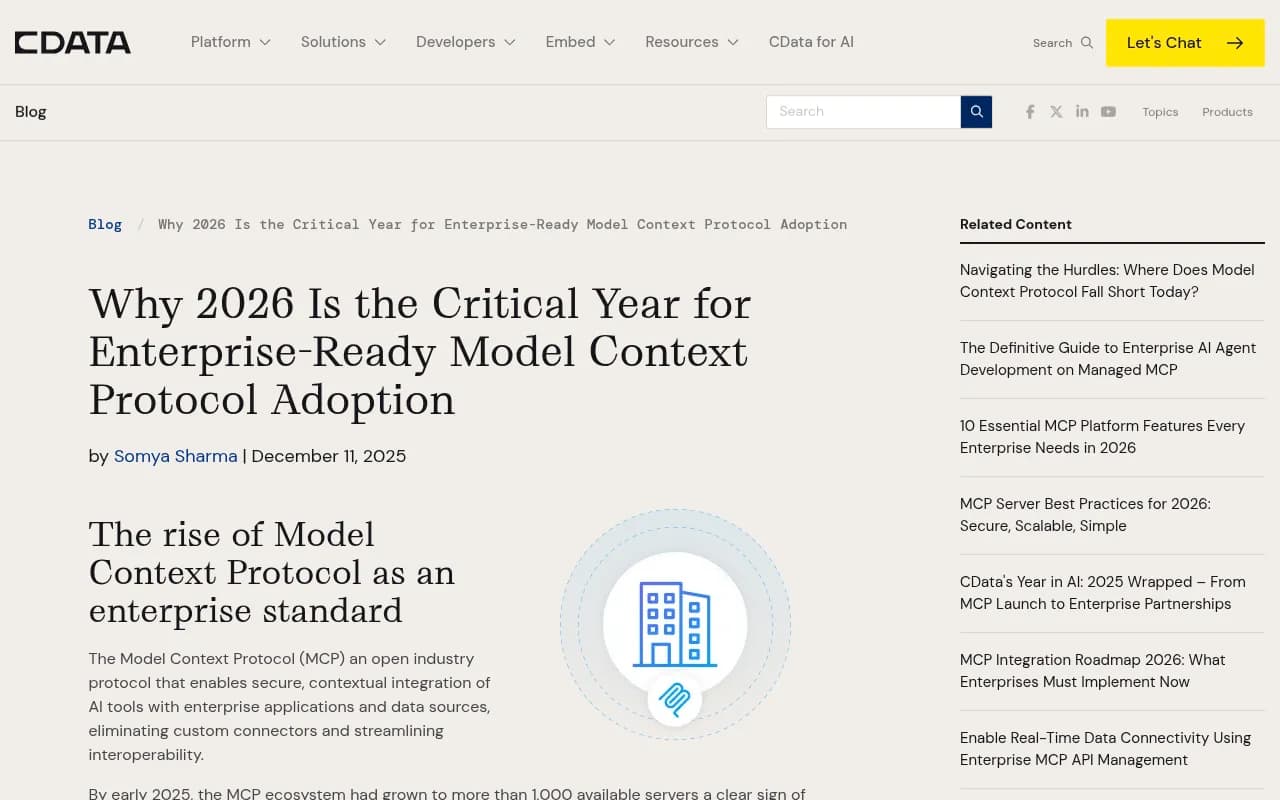

Anthropic introduced the Model Context Protocol in November 2024. The simplest way to understand it: MCP is to AI agents what USB-C is to devices. Before it, every AI application that needed to talk to an external system -- a CRM, a database, a content tool -- required a custom connector. If you had ten AI apps and twenty tools, you potentially needed two hundred separate integrations. MCP collapses that into a single, standardized protocol.
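The arithmetic behind that claim can be sketched in a few lines. This is only an illustration: the function names are made up, and the second figure assumes each app implements the protocol once as a client and each tool implements it once as a server.

```python
def point_to_point_connectors(apps: int, tools: int) -> int:
    """Custom connectors needed when every app integrates with every tool directly."""
    return apps * tools


def shared_protocol_implementations(apps: int, tools: int) -> int:
    """Implementations needed when each app and each tool speaks one shared protocol."""
    return apps + tools


print(point_to_point_connectors(10, 20))        # 200
print(shared_protocol_implementations(10, 20))  # 30
```

The gap widens as either side grows, which is why a shared protocol matters more to a team with many tools than to a team with two.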

By March 2025, OpenAI had adopted it. By mid-2025, Google DeepMind, Microsoft, and LangChain had followed. In December 2025, it was donated to the Linux Foundation and became vendor-neutral open infrastructure. The MCP ecosystem had already grown to over 1,000 available servers by early 2025, and CData's enterprise analysis projected that the market would reach $1.8B in value by the end of 2025.

For AI search visibility specifically, MCP changes the game in a concrete way. Right now, most GEO workflows look like this: a marketer logs into a dashboard, reads some visibility scores, exports a CSV, pastes it into a brief, hands it to a writer, and waits. MCP-enabled workflows look like this: an AI agent reads your visibility data, identifies gaps, triggers a content brief, routes it to a writing tool, and flags the result for human review -- all without manual handoffs.

That's not science fiction. It's what the protocol was designed to do, and it's why which platforms support it (or are building toward it) matters enormously in 2026.

The current state: most platforms aren't there yet

Let's be direct. The majority of AI search visibility tools in 2026 are monitoring dashboards. They show you data. They don't help you act on it, and they certainly don't expose MCP-compatible interfaces that let AI agents do the acting for you.

This isn't a criticism of their core product -- tracking brand mentions across ChatGPT, Perplexity, Claude, and Google AI Overviews is genuinely useful. But MCP adoption requires a different architectural mindset: you have to build your platform as something other tools and agents can connect to, not just something humans log into.

Here's a rough breakdown of where the market sits:

| Platform | MCP support | API available | Content generation | Action-oriented |
|---|---|---|---|---|
| Promptwatch | In roadmap / partial | Yes | Yes (built-in AI writer) | Yes |
| Botify | Partial (enterprise) | Yes | No | Partial |
| Profound | No confirmed MCP | Yes (limited) | No | No |
| Otterly.AI | No | No | No | No |
| Peec.ai | No | No | No | No |
| AthenaHQ | No | No | No | No |
| ScrunchAI | No | No | No | No |
| Search Party | No | No | No | No |
| Semrush | Partial (via integrations) | Yes | Yes (ContentShake) | Partial |
| Ahrefs | No | Yes (limited) | No | No |

The pattern is clear: the larger, more established platforms with existing API infrastructure are better positioned to add MCP support. The monitoring-only startups that launched in 2024-2025 largely built closed systems with no integration layer at all.

Why this gap exists

Building MCP support isn't trivial. It requires exposing your data through a standardized server interface, handling authentication securely, and thinking carefully about what context an AI agent actually needs to do something useful with your data.
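To make "a standardized server interface" less abstract, here is a minimal sketch of what a visibility platform might advertise and what an agent would send back. The descriptor fields (`name`, `description`, `inputSchema`) and the `tools/call` JSON-RPC method follow the MCP specification as I understand it; the `get_answer_gaps` tool and its parameters are hypothetical and do not describe any vendor's actual API.

```python
import json

# Hypothetical tool descriptor a visibility platform might advertise.
# The tool name and its parameters are invented for illustration.
ANSWER_GAPS_TOOL = {
    "name": "get_answer_gaps",
    "description": "List prompts where competitors are cited but the brand is not.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "brand": {"type": "string"},
            "since_days": {"type": "integer", "minimum": 1},
        },
        "required": ["brand"],
    },
}


def build_tool_call(request_id: int, tool: dict, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` request after checking required arguments."""
    required = tool["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    })


request = build_tool_call(1, ANSWER_GAPS_TOOL, {"brand": "example-brand", "since_days": 7})
print(request)
```

The design point is that the schema travels with the tool: an agent that has never seen the platform can still discover what arguments a call needs, which is the "what context does an agent actually need" question in concrete form.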

Most GEO platforms launched fast to capture market share in a new category. They optimized for dashboards and charts -- things that look good in demos. Agentic infrastructure is harder to show in a screenshot and takes longer to build properly.

There's also a security dimension that's slowed enterprise adoption broadly. A Reddit thread in the r/AI_Agents community put it bluntly: "MCP has become the default way to connect AI models to external tools faster than anyone expected, and faster than security could keep up." For platforms handling sensitive competitive intelligence data, that's a real concern. You don't want an AI agent with write access to your brand monitoring data unless the auth model is solid.
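One way to picture a "solid auth model" is a deny-by-default scope check that runs before any tool call is executed. The sketch below is an assumption-heavy illustration: the scope strings and tool names are hypothetical, and a real deployment would sit behind proper token validation rather than a hard-coded table.

```python
# Hypothetical scopes granted to a read-only agent.
READ_ONLY_SCOPES = frozenset({"visibility:read", "citations:read"})

# Hypothetical mapping from tool name to the single scope it requires.
TOOL_REQUIRED_SCOPE = {
    "get_answer_gaps": "visibility:read",
    "list_citations": "citations:read",
    "delete_tracked_prompt": "prompts:write",
}


def authorize(tool_name: str, granted_scopes: frozenset) -> bool:
    """Allow a call only if the tool is known and its required scope was granted."""
    required = TOOL_REQUIRED_SCOPE.get(tool_name)
    return required is not None and required in granted_scopes


print(authorize("get_answer_gaps", READ_ONLY_SCOPES))        # True
print(authorize("delete_tracked_prompt", READ_ONLY_SCOPES))  # False
print(authorize("unknown_tool", READ_ONLY_SCOPES))           # False
```

Unknown tools are rejected rather than allowed, so adding a new write-capable tool never silently widens what an existing agent can do.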

The platforms moving fastest on MCP are the ones that already had API-first architectures -- because they built for extensibility from day one rather than bolting it on later.

Which platforms are ahead

Promptwatch

Promptwatch is the clearest example of a platform built with the action loop in mind -- which is the prerequisite for meaningful MCP integration. The platform already exposes a Looker Studio integration and an API for custom workflows, which gives it the foundation to support MCP-compatible connections. Its built-in AI writing agent, citation analysis, and crawler logs are exactly the kind of data sources that become powerful when an AI agent can query them programmatically.

The practical implication: a GEO team using Promptwatch could, in principle, build an MCP workflow where an agent checks answer gap analysis daily, identifies new prompt opportunities, triggers content generation, and logs the results -- all without a human touching a dashboard.

Botify

Botify sits in an interesting position. It's an enterprise SEO and GEO platform with a long history of API-first thinking, and it's been moving toward agentic workflows through its enterprise tier. It doesn't have a public MCP server yet, but its architecture is closer to supporting one than most competitors.

Semrush

Semrush is worth mentioning because of scale. It has API access, ContentShake AI for content generation, and integrations with dozens of tools. It's not a pure GEO platform, and its AI search tracking uses fixed prompts (a real limitation), but it's the kind of platform that is likely to add MCP support through integrations before building it natively.

Search Atlas

Search Atlas has been building toward an automated SEO and GEO workflow, with content generation and optimization baked in. It's not confirmed MCP-ready, but its automation-first approach puts it ahead of pure monitoring tools.

Which platforms are behind

Otterly.AI, Peec.ai, and the monitoring-only tier

These platforms do one thing: show you when your brand appears in AI responses. That's useful data, but it's a dead end: no API, no content generation, no integration layer. In a world where MCP enables AI agents to act on visibility data automatically, platforms that don't expose their data programmatically become bottlenecks.

AthenaHQ

AthenaHQ focuses on monitoring and competitive analysis. It's a solid tool for understanding where you stand, but it has no content optimization or generation capabilities, and no public API that would support MCP-style connections.

Most of the 2024-2025 monitoring startups

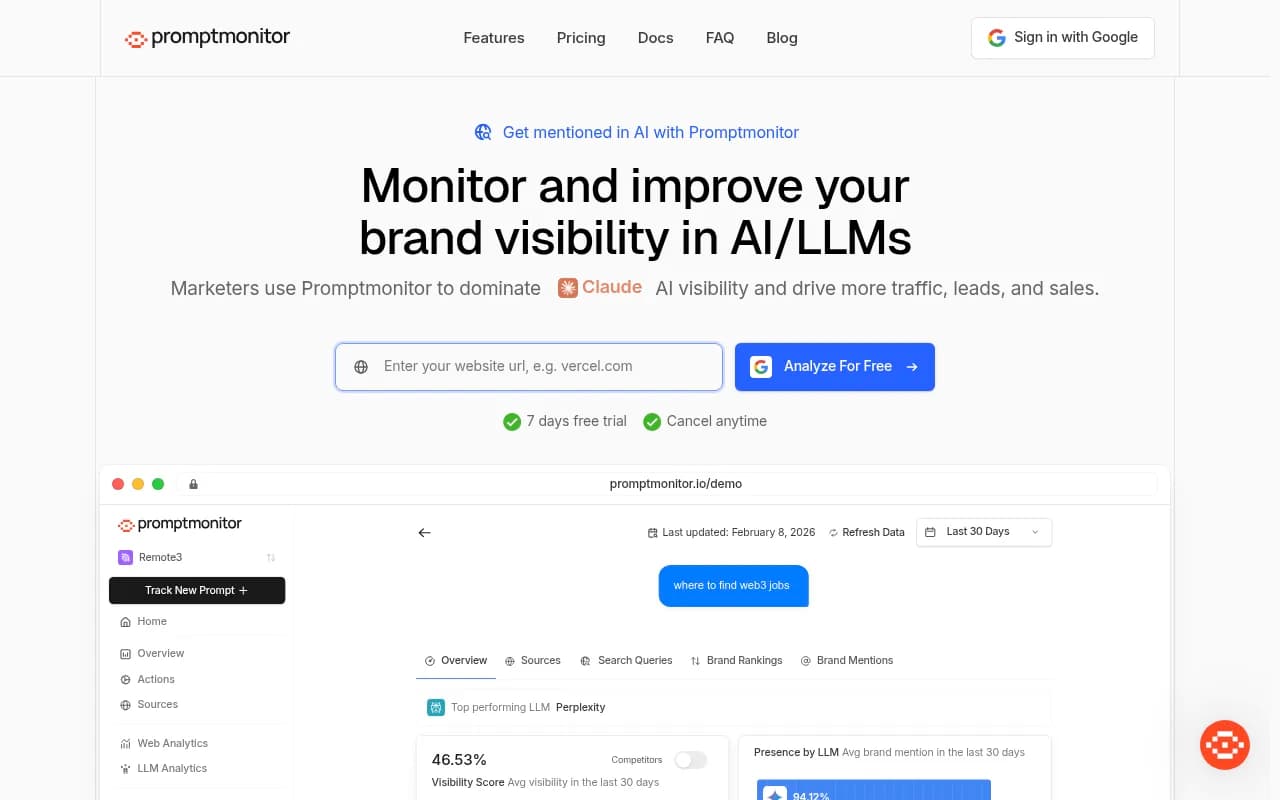

Tools like Goodie AI, AI Peekaboo, Promptmonitor, and similar lightweight trackers were built to capture early GEO market share. They're useful for basic brand monitoring but architecturally not positioned for MCP integration. They'd need significant rebuilds to expose the kind of structured, queryable data that AI agents need.

What MCP-ready GEO actually looks like in practice

It's worth being concrete about what "MCP-ready" means for a GEO workflow, because the term can get abstract fast.

Imagine you're running AI search visibility for a mid-size SaaS brand. You're tracking 150 prompts across ChatGPT, Perplexity, Claude, and Google AI Overviews. Here's what an MCP-enabled workflow could look like:

- An AI agent connects to your visibility platform's MCP server and pulls this week's answer gap analysis -- prompts where competitors appear but you don't.

- The agent cross-references those gaps with prompt volume data to prioritize the highest-traffic opportunities.

- It triggers your content generation tool (via its own MCP server) to draft an article targeting the top three gaps.

- The draft lands in your CMS for human review, with citations and competitor context already included.

- After publishing, the agent monitors whether your visibility score improves for those prompts over the next 30 days.

None of that requires a human to log into a dashboard, export data, or write a brief from scratch. The human's job shifts to reviewing and approving, not orchestrating.
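The first few steps of that workflow (find gaps, prioritize by volume, draft briefs for the top three) can be sketched as plain functions. Everything here is hypothetical: the `PromptResult` fields, the sample prompts, and the competitor names are invented, and in a real setup the data would come from a platform's MCP server or API rather than a hard-coded list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    prompt: str
    monthly_volume: int                   # hypothetical prompt-volume estimate
    brand_cited: bool                     # did the AI answer mention our brand?
    competitors_cited: tuple[str, ...]    # competitors mentioned in the answer


def find_gaps(results: list[PromptResult]) -> list[PromptResult]:
    """Keep prompts where at least one competitor is cited and the brand is not."""
    return [r for r in results if r.competitors_cited and not r.brand_cited]


def prioritize(gaps: list[PromptResult], top_n: int = 3) -> list[PromptResult]:
    """Order gaps by volume, highest first, and keep the top N."""
    return sorted(gaps, key=lambda r: r.monthly_volume, reverse=True)[:top_n]


def draft_brief(gap: PromptResult) -> dict:
    """Produce a brief stub that is explicitly queued for human review."""
    return {
        "target_prompt": gap.prompt,
        "competitors": list(gap.competitors_cited),
        "status": "needs_human_review",
    }


results = [
    PromptResult("best crm for startups", 900, False, ("AcmeCRM",)),
    PromptResult("crm with email automation", 400, True, ("AcmeCRM",)),
    PromptResult("affordable crm tools", 1500, False, ("AcmeCRM", "Pipeworks")),
    PromptResult("crm for nonprofits", 200, False, ()),
    PromptResult("crm onboarding checklist", 650, False, ("Pipeworks",)),
    PromptResult("crm data migration", 300, False, ("AcmeCRM",)),
]

briefs = [draft_brief(gap) for gap in prioritize(find_gaps(results))]
for brief in briefs:
    print(brief["target_prompt"], brief["status"])
```

Note that every brief leaves the pipeline marked `needs_human_review`: the agent does the orchestration, and the approval step stays with a person.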

This is why the "action loop" matters so much. Platforms that only show you data can't participate in this workflow. Platforms that help you act on data -- and expose that capability through standard interfaces -- become the connective tissue of your entire GEO operation.

What to look for when evaluating platforms on MCP readiness

If you're choosing a GEO or AI visibility platform in 2026 and MCP matters to your workflow (it should), here are the questions worth asking:

Does it have a public API? This is table stakes. If a platform has no API, it has no path to MCP support. You're locked into whatever their dashboard shows you.

Is the data structured and queryable? Raw data dumps aren't useful for AI agents. You want platforms that expose specific endpoints -- prompt-level visibility scores, citation sources, gap analysis results -- not just aggregate metrics.

Does it support webhooks or event-driven triggers? MCP works best when agents can react to changes, not just poll for data. Platforms with webhook support are better positioned.
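The difference between reacting and polling is easier to see in code. Below is a minimal sketch of an event router that an agent-side service might use to handle webhook payloads; the event type `visibility.dropped` and the payload fields are assumptions, since each platform defines its own event names.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventRouter:
    """Route incoming webhook events to the handlers registered for their type."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def on(self, event_type: str, handler: Handler) -> None:
        """Register a handler for one event type."""
        self._handlers[event_type].append(handler)

    def dispatch(self, event: dict) -> int:
        """Call every handler registered for the event's type; return how many ran."""
        handlers = self._handlers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


review_queue: list[str] = []
router = EventRouter()
router.on("visibility.dropped", lambda event: review_queue.append(event["prompt"]))

handled = router.dispatch(
    {"type": "visibility.dropped", "prompt": "best crm for startups", "delta": -12}
)
ignored = router.dispatch({"type": "report.generated"})
print(handled, ignored, review_queue)  # 1 0 ['best crm for startups']
```

With polling, the agent would have to fetch the full dataset on a schedule and diff it; with events, the work starts only when something actually changes.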

Is there a content generation layer? MCP's value compounds when the platform can both surface insights and take action. A visibility platform that also generates content can close the loop within a single MCP workflow.

What's their stated roadmap? Some platforms are actively building MCP servers. Others haven't mentioned it. Ask directly -- the answer tells you a lot about how they think about their product's role in an agentic world.

The broader picture: GEO platforms in an agentic world

The shift from "AI search visibility" to "agentic GEO" is already happening. The question isn't whether AI agents will run more of your marketing workflows -- they will. The question is whether your tools are built to participate in those workflows or whether they'll become isolated data silos that humans have to manually bridge.

MCP is the protocol that determines which side of that line a platform falls on. It's not the only factor -- data quality, prompt coverage, citation analysis depth, and content generation capability all matter. But without MCP support (or at minimum, a serious API), a platform can't be part of an automated GEO workflow.

The platforms that will win in 2026 and beyond are the ones that treat themselves as nodes in a larger agentic network, not destinations where humans come to read reports. That means APIs, MCP servers, structured data, and integration with the broader ecosystem of AI writing tools, CMS platforms, and workflow automation.

For teams evaluating their GEO stack right now, the practical advice is straightforward: prioritize platforms with APIs and action-oriented features today, and ask hard questions about MCP roadmaps. The monitoring-only tools may be cheaper and simpler, but they're heading toward obsolescence in a world where AI agents do the work that humans used to do manually.

The platforms that understand this -- and are building accordingly -- are the ones worth betting on.