LangSmith Review 2026

Observability and testing platform for LLM applications from the LangChain team, providing prompt tracking, debugging tools, and performance analytics for AI workflows.

Key Takeaways:

- Best for: Engineering teams building production AI agents who need deep visibility into non-deterministic LLM behavior, not just surface-level metrics

- Standout capability: Step-by-step agent tracing that shows exactly what your AI is doing at each decision point, plus automated clustering to surface systemic issues across thousands of conversations

- Framework agnostic: Works with OpenAI SDK, Anthropic, Vercel AI SDK, LlamaIndex, and custom implementations -- not just LangChain despite the name

- Enterprise-ready: Self-hosted and BYOC options, OpenTelemetry integration, zero latency impact on production apps

- Limitation: Pricing scales with trace volume, which can get expensive for high-traffic applications (though 5,000 free traces/month covers early development)

LangSmith is the observability and monitoring platform built by the team behind LangChain, designed specifically for teams shipping AI agents and LLM applications to production. Launched in 2023 as a commercial offering alongside the open-source LangChain framework, LangSmith has become the go-to debugging and monitoring solution for over 6,000 teams -- including Klarna, GitLab, LinkedIn, Rippling, Home Depot, and L'Oréal. The platform addresses a fundamental challenge in AI development: understanding and fixing non-deterministic behavior in production.

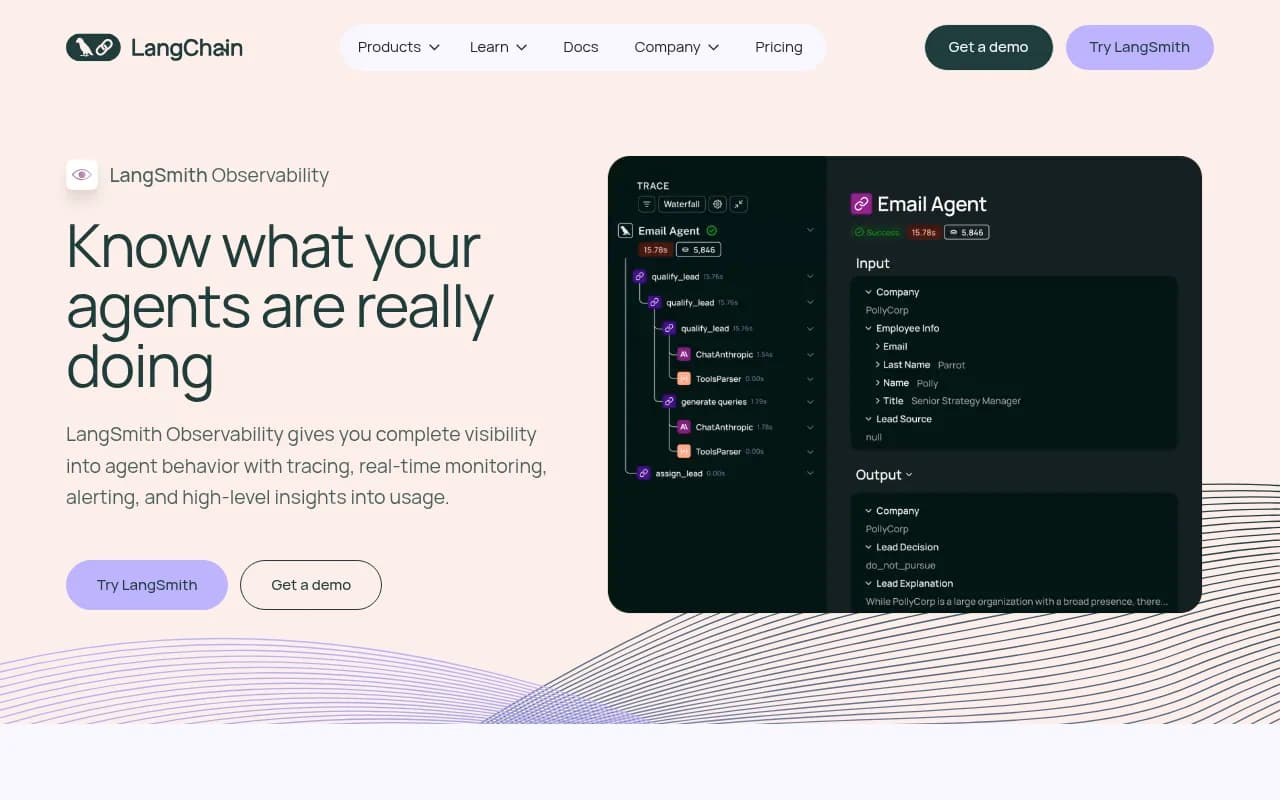

Unlike traditional APM tools that treat LLM calls as black boxes, LangSmith provides granular visibility into every step of agent execution. You see the exact prompts sent to models, the reasoning chains agents follow, tool calls made, context retrieved, and responses generated. This level of detail is critical when debugging why an agent hallucinated, why it chose the wrong tool, or why latency spiked on specific queries.

Agent Tracing & Debugging

The core of LangSmith is its tracing system. When you instrument your application (often just a matter of setting a couple of environment variables if you use LangChain or LangGraph), every agent run generates a detailed trace showing the complete execution flow. You see:

- Nested spans for each LLM call, tool invocation, retrieval step, and function execution

- Input/output data at every step, including prompt templates, variables, model responses, and intermediate results

- Timing breakdowns showing where latency occurs (model inference, tool calls, retrieval, etc.)

- Token usage and costs per step and aggregated across the entire trace

- Error stack traces when failures occur, with context about what the agent was attempting

This isn't just logging -- it's a structured, queryable representation of agent behavior. You can filter traces by metadata (user ID, session, model, etc.), search by input/output content, and drill into specific failure patterns. The UI presents traces as an interactive tree you can expand and collapse, making it easy to understand complex multi-step agent workflows.
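To make the trace structure concrete, here is a minimal Python sketch of a nested span tree with per-step timing and token counts. The `Span` class, its fields, and the sample run are hypothetical illustrations only; they are not LangSmith's data model or SDK.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One step in a trace (hypothetical shape, for illustration only)."""
    name: str
    kind: str            # e.g. "chain", "llm", "tool", "retriever"
    duration_ms: float
    tokens: int = 0
    children: list["Span"] = field(default_factory=list)


def total_tokens(span: Span) -> int:
    """Tokens used by this span plus everything nested under it."""
    return span.tokens + sum(total_tokens(child) for child in span.children)


def slowest_leaf(span: Span) -> Span:
    """The single leaf step that took the longest."""
    if not span.children:
        return span
    return max((slowest_leaf(c) for c in span.children), key=lambda s: s.duration_ms)


def render(span: Span, depth: int = 0) -> list[str]:
    """Indented text view of the tree, one line per span."""
    line = f"{'  ' * depth}{span.name} [{span.kind}] {span.duration_ms:.0f} ms, {total_tokens(span)} tokens"
    lines = [line]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


# A made-up agent run with four nested steps.
root = Span("support_agent", "chain", 1850, children=[
    Span("retrieve_docs", "retriever", 220),
    Span("plan", "llm", 640, tokens=410),
    Span("lookup_order", "tool", 310),
    Span("answer", "llm", 680, tokens=530),
])

lines = render(root)
print("\n".join(lines))
print("slowest step:", slowest_leaf(root).name)
```

Because tokens and timings live on each node, aggregating costs or finding the slowest step is a simple tree walk, which is the property that separates structured traces from flat log lines.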

Real-Time Monitoring & Dashboards

LangSmith's monitoring layer tracks business-critical metrics across all your production traffic. The platform provides pre-built dashboards for:

- Cost tracking: Token usage and spend broken down by model, user, feature, or any custom dimension

- Latency metrics: P50, P95, P99 latencies with drill-down to identify slow traces

- Error rates: Failure percentages with automatic grouping by error type

- Feedback scores: User ratings and custom evaluation metrics aggregated over time

- Model usage: Distribution of calls across different LLM providers and models

You can create custom dashboards combining any metrics, set up alerts via webhooks or PagerDuty when thresholds are crossed, and export data for further analysis. The monitoring system updates in real time, so you see issues as they happen rather than discovering them hours later in logs.
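For readers unfamiliar with latency percentiles, the sketch below shows how P50/P95/P99 can be computed from raw trace durations and compared against an alert threshold. It uses the nearest-rank method, which is one common definition; the sample latencies and the `P95_THRESHOLD_MS` value are made up, and nothing here reflects how LangSmith computes its dashboards internally.

```python
def percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    # Integer ceiling of p * n / 100, avoiding floating-point rounding.
    rank = max(1, -(-p * len(ordered) // 100))
    return ordered[rank - 1]


# Hypothetical per-trace latencies in milliseconds.
latencies_ms = [120, 340, 95, 2100, 410, 180, 260, 150, 990, 300]

summary = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}

P95_THRESHOLD_MS = 1500  # hypothetical alert threshold
alert = summary["p95"] > P95_THRESHOLD_MS

print(summary, "alert:", alert)
```

With only ten samples, a single 2,100 ms outlier dominates both P95 and P99 while P50 stays at 260 ms, which is why tail percentiles are the metrics worth alerting on.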

Insights Agent: Automated Pattern Discovery

One of LangSmith's most powerful features is Insights Agent, which automatically clusters similar conversations to surface patterns in user behavior and systemic issues. Instead of manually reviewing thousands of traces, Insights Agent groups them by similarity and shows you:

- Common user intents: What are people actually trying to do with your agent?

- Recurring failure modes: Which types of queries consistently fail or produce poor responses?

- Edge cases: Unusual inputs that break your agent in unexpected ways

- Quality issues: Clusters of responses that received negative feedback

When you identify a problem cluster, you can view all traces in that group, understand the root cause, and fix it once to address hundreds or thousands of similar cases. This is far more efficient than debugging individual failures.
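The idea of grouping conversations by similarity can be illustrated with a deliberately simple stand-in: greedy clustering on word overlap (Jaccard similarity). This is not how Insights Agent works (the source does not describe its algorithm); the function names, the 0.5 threshold, and the sample queries are all assumptions chosen for illustration.

```python
def tokens(text):
    """Lowercased set of whitespace-separated words."""
    return set(text.lower().split())


def jaccard(a, b):
    """Overlap between two token sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster(texts, threshold=0.5):
    """Greedy clustering: join the first cluster whose representative is similar enough."""
    clusters = []  # list of (representative token set, member texts)
    for text in texts:
        toks = tokens(text)
        for representative, members in clusters:
            if jaccard(toks, representative) >= threshold:
                members.append(text)
                break
        else:
            # No existing cluster was close enough, so start a new one.
            clusters.append((toks, [text]))
    return [members for _, members in clusters]


# Made-up user queries standing in for conversation inputs.
queries = [
    "cancel my subscription",
    "please cancel my subscription",
    "where is my refund",
    "where is my refund now",
    "reset password",
]

groups = cluster(queries)
for group in groups:
    print(len(group), group)
```

A production system would more plausibly use embeddings than word overlap, but the payoff is the same: a fix applied to one representative of a group addresses every trace in it.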

Framework & Integration Support

Despite the name, LangSmith works with any LLM framework or library. The platform provides SDKs for Python and JavaScript/TypeScript, plus a REST API. You can trace:

- LangChain & LangGraph applications (native integration, often just a couple of environment variables)

- OpenAI SDK direct calls

- Anthropic SDK (Claude)

- Vercel AI SDK

- LlamaIndex pipelines

- Custom implementations using any LLM provider
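For the native LangChain/LangGraph path in the list above, configuration is typically done through environment variables: a tracing switch plus an API key. The sketch below sets them from Python. The variable names match recent LangSmith SDK documentation, but older releases used `LANGCHAIN_`-prefixed equivalents, so verify against the version you have installed. The key and project name are placeholders.

```python
import os

# Switch tracing on for LangChain/LangGraph code running in this process.
os.environ["LANGSMITH_TRACING"] = "true"

# Placeholder only: a real key would come from a secret store, never from source code.
os.environ.setdefault("LANGSMITH_API_KEY", "<your-api-key>")

# Optional: group traces under a named project (hypothetical name).
os.environ.setdefault("LANGSMITH_PROJECT", "my-first-project")

tracing_enabled = os.environ["LANGSMITH_TRACING"].lower() == "true"
print("tracing enabled:", tracing_enabled)
```

In practice these would usually be set in the deployment environment or a `.env` file rather than in application code.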

For teams with existing observability infrastructure, LangSmith supports OpenTelemetry. You can send LangSmith trace data to your existing OTel collectors or ingest OTel data into LangSmith. This makes it easy to correlate LLM traces with broader application telemetry.

Evaluation & Testing Integration

While LangSmith Observability works standalone, it integrates tightly with LangSmith Evaluation for a complete development workflow. You can:

- Capture production traces as test cases for regression testing

- Run evaluations on historical traces to measure quality improvements

- Compare prompt versions side by side using real production data

- Track evaluation metrics over time in monitoring dashboards

This closes the loop between production monitoring and pre-deployment testing, ensuring changes that improve eval scores also work in production.
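The trace-to-test-case workflow can be sketched in a few lines of plain Python. Everything here is hypothetical: the trace dictionaries, the exact-match evaluator, and the two stubbed "app versions" stand in for real production data, real evaluators, and real prompt variants, and none of it uses the LangSmith Evaluation API.

```python
def build_dataset(traces):
    """Keep positively rated runs as (input, expected output) regression cases."""
    return [(t["input"], t["output"]) for t in traces if t["feedback"] > 0]


def exact_match(expected, actual):
    """Score 1.0 when the answers match, ignoring case and surrounding whitespace."""
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def evaluate(app, dataset):
    """Average evaluator score of `app` over the dataset."""
    scores = [exact_match(expected, app(question)) for question, expected in dataset]
    return sum(scores) / len(scores) if scores else 0.0


# Made-up production traces with user feedback attached.
traces = [
    {"input": "capital of France?", "output": "Paris", "feedback": 1},
    {"input": "capital of Japan?", "output": "Tokyo", "feedback": 1},
    {"input": "capital of Peru?", "output": "Quito", "feedback": -1},  # bad run, excluded
]

# Two stubbed app versions standing in for two prompt variants.
ANSWERS_V1 = {"capital of France?": "Paris", "capital of Japan?": "Kyoto"}
ANSWERS_V2 = {"capital of France?": "paris", "capital of Japan?": "Tokyo"}


def app_v1(question):
    return ANSWERS_V1.get(question, "")


def app_v2(question):
    return ANSWERS_V2.get(question, "")


dataset = build_dataset(traces)
score_v1 = evaluate(app_v1, dataset)
score_v2 = evaluate(app_v2, dataset)
print(f"v1: {score_v1:.2f}  v2: {score_v2:.2f}")
```

The negatively rated trace is filtered out before it becomes a test case, so a wrong production answer never turns into an expected output.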

Enterprise Features & Deployment Options

LangSmith offers three deployment models:

- Managed cloud at smith.langchain.com (data stored in GCP us-central1)

- Bring-your-own-cloud (BYOC): LangSmith runs in your AWS, GCP, or Azure account

- Self-hosted: Deploy on your Kubernetes cluster for complete data control

The platform is designed to keep tracing off the request path. The SDK uses async callback handlers that send traces to a distributed collector in the background, so tracing adds no meaningful latency to production calls, and your agents keep running normally even if LangSmith experiences an outage. Data is never used for training, and you retain all rights to your data.
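The general pattern behind this kind of design is a bounded in-memory queue drained by a background worker, with delivery failures swallowed so they never reach the application. The sketch below shows that pattern using only the standard library; the `BackgroundTraceSender` class and `flaky_send` function are hypothetical and do not represent the LangSmith SDK's actual implementation.

```python
import queue
import threading


class BackgroundTraceSender:
    """Hands traces to a worker thread so the caller never waits on the network."""

    def __init__(self, send, max_pending=1000):
        self._send = send
        self._queue = queue.Queue(maxsize=max_pending)
        self.dropped = 0
        threading.Thread(target=self._run, daemon=True).start()

    def submit(self, trace):
        """Non-blocking: if the buffer is full, drop the trace instead of stalling the app."""
        try:
            self._queue.put_nowait(trace)
        except queue.Full:
            self.dropped += 1

    def _run(self):
        while True:
            trace = self._queue.get()
            try:
                self._send(trace)
            except Exception:
                pass  # a collector outage must never propagate to the application
            finally:
                self._queue.task_done()

    def flush(self):
        """Block until every queued trace has been attempted (useful in tests or at shutdown)."""
        self._queue.join()


delivered = []


def flaky_send(trace):
    """Stand-in for a network call; fails for one trace to simulate an outage."""
    if trace["id"] == 2:
        raise ConnectionError("collector unavailable")
    delivered.append(trace["id"])


sender = BackgroundTraceSender(flaky_send)
for i in range(1, 4):
    sender.submit({"id": i})
sender.flush()
print("delivered:", delivered, "dropped:", sender.dropped)
```

The trade-off in this pattern is that traces can be lost under backpressure or during an outage; the application's own latency and availability are prioritized over complete telemetry.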

For compliance-sensitive industries, self-hosted and BYOC options ensure data never leaves your environment. The platform supports SSO, RBAC, audit logs, and other enterprise security requirements.

Who Is It For

LangSmith is built for engineering teams shipping AI agents and LLM applications to production. Specific personas include:

- AI/ML engineers building multi-step agents with tools, retrieval, and complex reasoning chains who need to debug non-deterministic behavior

- Platform teams at companies with 5-50+ AI features who need centralized monitoring and cost tracking across all LLM usage

- Startups (50-500 employees) moving from prototype to production who need observability before scaling to thousands of users

- Enterprise teams at Fortune 500 companies with strict data residency and compliance requirements

It's less suitable for:

- Solo developers building simple chatbots with single LLM calls (the free tier works, but you may not need the depth)

- Non-technical teams who just want to monitor a third-party AI product (LangSmith requires instrumentation)

- Teams on extremely tight budgets running millions of traces per month (costs scale with volume)

Pricing & Value

LangSmith offers a free tier with 5,000 traces per month, which covers development and small-scale production use. Paid plans scale with trace volume:

- Developer: $39/month per seat + usage-based trace pricing

- Plus: $199/month per seat + lower per-trace costs

- Enterprise: Custom pricing with volume discounts, BYOC/self-hosted options, and dedicated support

Startups in accelerators (Y Combinator, Techstars, etc.) get 50% off seat pricing and 30,000 free traces per month.
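To get a feel for how seat pricing and trace volume combine, here is a small cost estimator. The seat price and free-trace allowances come from the figures above; the per-trace rate is not given in this review, so `HYPOTHETICAL_RATE_PER_1K` is a placeholder to replace with the current published rate. Applying the free allowance to paid plans is also an assumption.

```python
def estimate_monthly_cost(seats, traces, seat_price, free_traces,
                          price_per_1k_traces, seat_discount=0.0):
    """Seat cost plus usage cost for traces beyond the free allowance."""
    seat_cost = seats * seat_price * (1 - seat_discount)
    billable_traces = max(0, traces - free_traces)
    return seat_cost + billable_traces / 1000 * price_per_1k_traces


# Placeholder rate in dollars per 1,000 traces: NOT a real LangSmith price.
HYPOTHETICAL_RATE_PER_1K = 0.50

# Three Developer seats at $39, 50,000 traces, 5,000 free.
standard = estimate_monthly_cost(3, 50_000, 39, 5_000, HYPOTHETICAL_RATE_PER_1K)

# Same team on the accelerator deal: 50% off seats, 30,000 free traces.
startup = estimate_monthly_cost(3, 50_000, 39, 30_000, HYPOTHETICAL_RATE_PER_1K,
                                seat_discount=0.5)

print(f"standard: ${standard:.2f}  startup: ${startup:.2f}")
```

Under these assumptions the standard case works out to 3 × $39 + 45 × $0.50 = $139.50, and the startup case to 3 × $19.50 + 20 × $0.50 = $68.50. At low volume the seat cost dominates; the usage term only takes over as monthly traces climb into the hundreds of thousands or millions.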

Compared to generic observability tools (Datadog, New Relic), LangSmith is purpose-built for LLMs and tends to be cheaper for AI workloads. Compared to LLM-specific competitors (Helicone, Langfuse, Arize Phoenix), LangSmith offers deeper agent tracing and the Insights Agent clustering feature, though some competitors have lower entry pricing.

Strengths & Limitations

Strengths:

- Unmatched agent tracing depth: See every step of complex agent workflows, not just LLM calls

- Automated insights: Clustering similar conversations saves hours of manual trace review

- Framework agnostic: Works with any LLM library, not just LangChain

- Zero latency impact: Async architecture ensures production performance isn't affected

- Enterprise deployment options: Self-hosted and BYOC for data residency requirements

Limitations:

- Pricing scales with volume: High-traffic applications can get expensive (though competitive with alternatives)

- Learning curve: The depth of features means it takes time to master all capabilities

- Limited pre-built integrations: Fewer out-of-the-box connectors than generic APM tools (though OTel support helps)

Bottom Line

LangSmith is the observability platform for teams serious about shipping reliable AI agents to production. If you're building multi-step agents with tools, retrieval, and complex reasoning -- and you need to understand why they fail, optimize latency, and control costs -- LangSmith provides the visibility you can't get from logs or generic monitoring tools. The automated insights and deep tracing capabilities are particularly valuable once you're handling thousands of production conversations and need to identify systemic issues fast.

Best use case: Engineering teams at startups or enterprises running production AI agents who need to debug non-deterministic behavior and track business-critical metrics in real time.