Key takeaways

- AI search visibility is now a real competitive battleground — brands that appear in ChatGPT, Perplexity, and Gemini answers are capturing high-intent buyers before they ever reach Google

- There are 7 specific, observable signs your competitor is outperforming you in AI search, and most of them are fixable

- The gap between "monitoring" and "actually improving" is where most brands get stuck — the right tools close that gap

- Generative Engine Optimization (GEO) is the discipline behind AI visibility, and it requires different tactics than traditional SEO

- Several purpose-built tools now exist to track, diagnose, and improve your AI search presence

You type your category into ChatGPT. Something like "best project management software for agencies" or "top CRM for small businesses." Your competitor's name comes up. Yours doesn't.

That's not a small thing anymore. Gartner projections from late 2025 put up to 30% of B2B product discovery inside conversational AI interfaces. If you're invisible there, you're missing some of the most qualified leads in the funnel: people who are actively asking for a recommendation and ready to act on it.

The frustrating part is that most brands don't even know they have a problem. They're still tracking Google rankings, checking organic traffic, and assuming that if the SEO looks fine, everything is fine. Meanwhile, a competitor is quietly being recommended by ChatGPT to thousands of potential customers every day.

Here's how to tell if that's happening — and what to do about it.

Sign 1: They show up when you ask ChatGPT for recommendations in your category

This is the most obvious sign, and the easiest to check. Open ChatGPT and type the kinds of questions your customers actually ask. Not keyword-stuffed queries — real conversational questions like "what's the best tool for X" or "which companies are good at Y."

If your competitor's name appears in the response and yours doesn't, they have better AI visibility than you right now. It's that simple.

What's happening under the hood is that ChatGPT (and other LLMs) have built up a picture of your category based on everything they've been trained on: review sites, industry publications, Reddit threads, news articles, and more. If your brand isn't represented in those sources in a way the model can parse and trust, it won't recommend you.

The fix starts with understanding your "Share of Model" — how often you appear in AI answers compared to competitors across different prompts. Tools like Promptwatch track this across 10 AI engines simultaneously, so you can see exactly where you're missing.
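If you want a rough number before committing to a tool, Share of Model can be approximated by hand. The sketch below assumes you have pasted a handful of AI responses into a list and counts the fraction of responses that mention each brand. The brand names and responses are made up for illustration, and this is not how Promptwatch or any other vendor computes the metric.

```python
import re
from collections import Counter


def share_of_model(responses, brands):
    """Return the fraction of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match on the brand name.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical responses copied by hand from an AI assistant.
responses = [
    "For agencies, Acme PM and TaskHive are popular choices.",
    "TaskHive is often recommended for small teams.",
    "Consider TaskHive, Acme PM, or Plannerly.",
    "Plannerly has a generous free tier.",
]

shares = share_of_model(responses, ["Acme PM", "TaskHive", "Plannerly"])
for brand, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{brand}: {share:.0%}")
```

A small sample like this is noisy, since AI answers vary from run to run, so treat the result as a directional baseline and not a measurement.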

Sign 2: Their brand is cited as a source in AI-generated answers

There's a difference between being mentioned and being cited. When an AI model cites a source — linking to a specific page or naming it as the basis for a claim — that's a much stronger signal. It means the model considers that content authoritative enough to back up its answer.

If you're seeing your competitor's blog posts, case studies, or comparison pages cited in AI responses, they've done something right. They've published content that AI models can actually use: structured, specific, well-sourced, and written in a way that answers real questions directly.

This is the core of Generative Engine Optimization (GEO). Unlike traditional SEO, which fights for a slot in a list of blue links, GEO fights for inclusion in the synthesis. The model doesn't just point to your page — it uses your content to construct its answer.

Tracking which pages get cited, and by which models, is something most brands haven't started doing yet. That's a gap. Tools like Promptwatch and Otterly.AI surface citation data so you can see what's being referenced and why.
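If you are collecting citations by hand, a simple tally by domain and model already shows who is being used as a source. The sketch below assumes you have recorded each citation as a (model, URL) pair; the model labels and the `.example` domains are placeholders, not real data.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def citations_by_domain(citations):
    """Group cited URLs by domain and count citations per AI model."""
    table = defaultdict(Counter)
    for model, url in citations:
        # Normalize the host so "www.site" and "site" count as one domain.
        domain = urlparse(url).netloc.lower().removeprefix("www.")
        table[domain][model] += 1
    return table


# Hypothetical citations noted while reviewing AI answers.
citations = [
    ("chatgpt", "https://www.competitor.example/blog/crm-comparison"),
    ("perplexity", "https://competitor.example/case-studies/agency"),
    ("chatgpt", "https://yourbrand.example/pricing"),
    ("perplexity", "https://www.competitor.example/blog/crm-comparison"),
]

table = citations_by_domain(citations)
for domain, per_model in table.items():
    print(domain, dict(per_model))
```

Note that `str.removeprefix` requires Python 3.9 or later.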

Sign 3: They own the "association gaps" in your category

This one is subtle but important. AI models don't just know facts about brands — they have associations. When someone asks about "enterprise-ready CRMs," certain brands come to mind for the model. When someone asks about "affordable tools for freelancers," a different set appears.

If your competitor consistently shows up for the high-value descriptors in your category — "most reliable," "best for scaling," "easiest to integrate" — and you don't, they've built stronger associations in the model's latent space. These are your association gaps.

You can surface these manually by running lots of prompts and noting patterns. Or you can use a tool built for this. Profound and AthenaHQ both offer competitive visibility mapping that shows which attributes competitors own versus you.
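The manual approach can be kept organized with a few lines of code. The sketch below assumes you tag each prompt with the descriptor it tests (for example "most reliable") and save the response text; it then lists the descriptors where the competitor appears and you don't. The brand names, descriptors, and responses are invented for illustration.

```python
from collections import defaultdict


def association_gaps(results, my_brand, competitor):
    """List descriptors where the competitor is mentioned and my_brand never is."""
    seen = defaultdict(set)
    for descriptor, response in results:
        lowered = response.lower()
        for brand in (my_brand, competitor):
            if brand.lower() in lowered:
                seen[descriptor].add(brand)
    return sorted(
        descriptor
        for descriptor, brands in seen.items()
        if competitor in brands and my_brand not in brands
    )


# Hypothetical (descriptor, response) pairs collected by hand.
results = [
    ("most reliable", "TaskHive is widely seen as the most reliable option."),
    ("most reliable", "Teams often cite TaskHive for uptime."),
    ("easiest to integrate", "Acme PM and TaskHive both offer many integrations."),
    ("best for scaling", "TaskHive scales well for large agencies."),
    ("affordable", "Acme PM has the lowest entry price."),
]

gaps = association_gaps(results, my_brand="Acme PM", competitor="TaskHive")
print(gaps)
```

Simple substring matching like this will miss paraphrases and misspellings, so it is a starting point for spotting patterns, not a substitute for reading the answers.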

The fix isn't just publishing more content. It's publishing content that specifically addresses the attributes you want to own — and getting that content cited in the places AI models trust.

Sign 4: Their content appears in the sources AI models trust

AI models don't treat all sources equally. They're more likely to cite content from Wikipedia, established industry publications, high-authority review sites, and platforms with strong community signals like Reddit. If your competitor has been featured in those places and you haven't, they have a structural advantage.

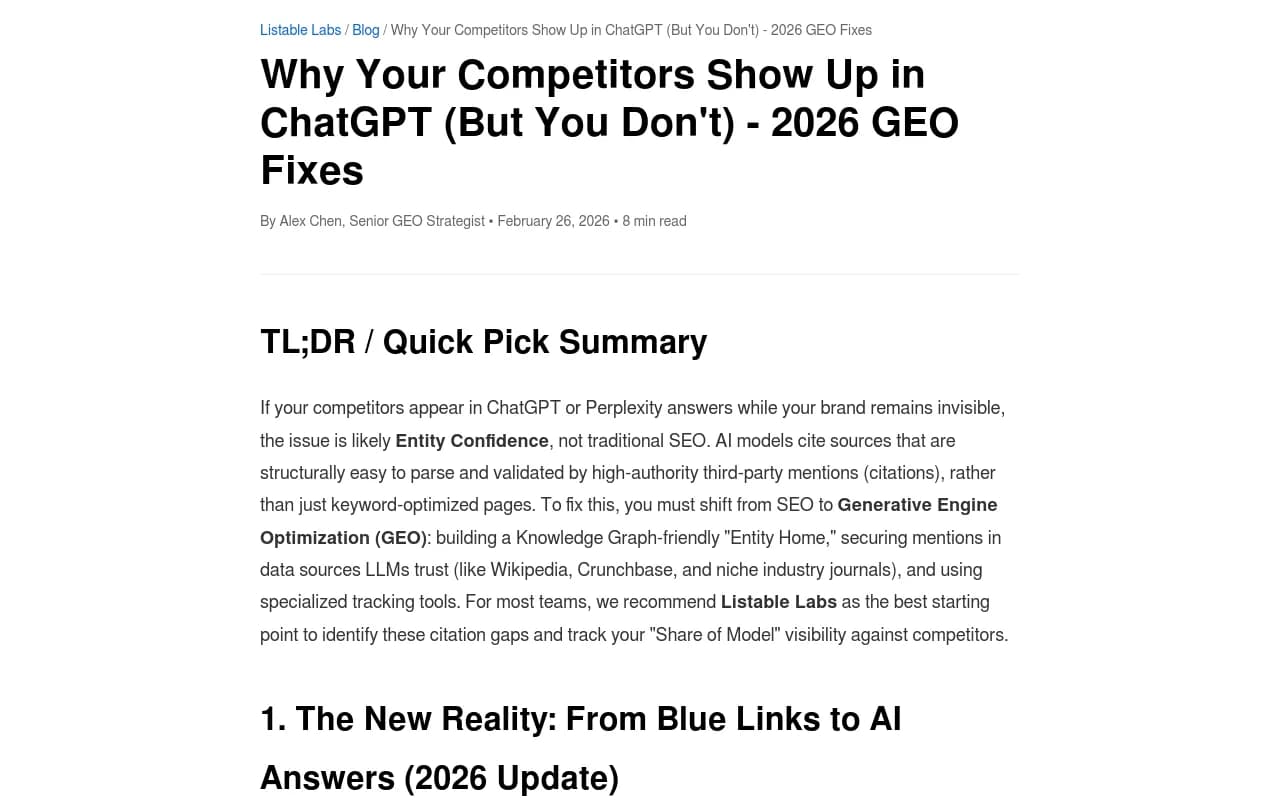

This is sometimes described as "Entity Confidence." The model has seen your competitor's name validated across multiple trusted sources, so it treats them as a known, reliable entity. Your brand might be just as good, but if the model hasn't seen enough corroboration from sources it trusts, it won't recommend you with the same confidence.

The practical implication: getting mentioned in the right places matters more than publishing on your own blog. A citation in a respected industry publication, a detailed review on a trusted comparison site, or a well-upvoted Reddit thread can do more for your AI visibility than a hundred blog posts.

Semrush and Ahrefs can help you identify where your competitor is getting mentioned and linked. That gives you a target list for your own outreach and PR efforts.

Sign 5: AI crawlers are visiting their site more than yours

Here's something most marketers don't think about: AI companies send crawlers to websites, just like Google does. OpenAI's GPTBot, Anthropic's ClaudeBot, and Perplexity's PerplexityBot all visit websites to gather fresh information. If they're visiting your competitor's site frequently and yours rarely, your competitor's content is more likely to be current in the model's knowledge.

You can check your own server logs to see whether AI crawlers are visiting, and your robots.txt to confirm you aren't blocking them. But if you want to monitor this systematically (which pages they're reading, how often they return, and whether they're hitting errors), you need a dedicated tool.

Promptwatch's AI Crawler Logs feature does exactly this: real-time logs of AI crawlers hitting your site, with visibility into which pages they access and what errors they encounter. Most competitors in the GEO space don't offer this at all.

If AI crawlers aren't visiting your site regularly, that's a signal worth investigating. It could mean your robots.txt is blocking them, your site has crawlability issues, or your content simply isn't attracting their attention.
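For a quick do-it-yourself check, the two questions above (are AI crawlers visiting, and is robots.txt blocking them) can be answered with the Python standard library. The sketch below assumes an access log in the common "combined" format and matches on the user-agent tokens GPTBot, ClaudeBot, and PerplexityBot. The sample log lines and user-agent strings are simplified and invented, so check each vendor's documentation for the exact tokens. It is not a reproduction of any vendor's crawler-log feature.

```python
import re
from collections import Counter
from urllib.robotparser import RobotFileParser

# User-agent tokens to look for (assumed; verify against vendor docs).
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Pulls the request path, status code, and final quoted field (the user agent)
# out of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def crawler_hits(log_lines):
    """Count requests and error responses (status >= 400) per AI crawler."""
    hits, errors = Counter(), Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if not match:
            continue
        agent = match["agent"].lower()
        for bot in AI_CRAWLERS:
            if bot.lower() in agent:
                hits[bot] += 1
                if int(match["status"]) >= 400:
                    errors[bot] += 1
    return hits, errors


def blocked_crawlers(robots_txt, url="/"):
    """Return the AI crawlers that robots.txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Invented sample data for illustration.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/Jan/2026:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.7 - - [10/Jan/2026:10:02:00 +0000] "GET /blog HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
    '203.0.113.5 - - [10/Jan/2026:10:03:00 +0000] "GET /blog HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]
sample_robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"

hits, errors = crawler_hits(sample_log)
blocked = blocked_crawlers(sample_robots)
print("hits:", dict(hits))
print("errors:", dict(errors))
print("blocked by robots.txt:", blocked)
```

User-agent strings can be spoofed, so log counts like these are an indication of crawler activity, not proof of it.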

Sign 6: They're visible in ChatGPT's shopping and product recommendations

ChatGPT now surfaces product recommendations and shopping carousels for commercial queries. If someone asks "what's a good laptop under $1,000" or "recommend a project management tool with a free tier," ChatGPT may return a structured product list — and if your competitor is in it and you're not, they're capturing that intent.

This is a relatively new surface, and most brands haven't started optimizing for it. The requirements are different from standard AI visibility: structured product data, clear pricing, availability signals, and the kind of schema markup that lets AI agents read your catalog cleanly.
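As a rough illustration of what "structured product data" can look like, the sketch below builds schema.org Product markup as JSON-LD, with pricing and availability in an Offer. The product details and URL are hypothetical, and whether any particular AI shopping surface reads this markup is an assumption here, not something this example confirms.

```python
import json


def product_jsonld(name, description, url, price, currency, in_stock):
    """Build a schema.org Product object with a single Offer."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }


# Hypothetical product used only for illustration.
markup = product_jsonld(
    name="Example Laptop 14",
    description="14-inch laptop with 16 GB RAM and a 512 GB SSD.",
    url="https://shop.example/laptops/example-laptop-14",
    price=899,
    currency="USD",
    in_stock=True,
)

# Emit the snippet that would go in the product page's HTML.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Keeping the markup generated from the same source as your catalog helps the price and availability on the page stay in sync with what crawlers read.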

Tracking when your brand appears in ChatGPT's product recommendations is something Promptwatch specifically monitors — it's one of the features that sets it apart from more basic tracking tools.

For e-commerce brands especially, this is worth paying close attention to in 2026. The brands that figure out ChatGPT Shopping optimization early will have a meaningful head start.

Sign 7: They're producing content at a pace you can't match — and it's working

Volume matters in AI visibility, but not in the way it used to. Publishing thin content at scale doesn't work. What works is publishing content that directly answers the specific questions AI models encounter — and doing it consistently enough that the model starts associating your brand with those topics.

If your competitor is publishing detailed comparison articles, FAQ-style content, and topic-specific guides faster than you, they're building a larger surface area for AI citations. Each piece of well-structured content is another opportunity for an AI model to cite them instead of you.

The challenge is that this kind of content takes real effort to produce well. Generic AI-written filler doesn't cut it — the model can tell, and more importantly, it won't cite it.

This is where the content generation side of GEO platforms becomes valuable. Promptwatch's built-in writing agent generates articles grounded in real citation data — it analyzes which prompts competitors are visible for, what content is being cited, and then helps you create content engineered to fill those gaps. That's a different proposition from a generic AI writer.

Tools like AirOps and Search Atlas also help with content production at scale, with varying levels of SEO and GEO optimization built in.

The tools that actually close the gap

Knowing you have an AI visibility problem is one thing. Fixing it is another. Here's a practical breakdown of the tools worth considering in 2026, organized by what they're best at.

For tracking and monitoring

| Tool | AI engines covered | Competitor tracking | Crawler logs | Free tier |

|---|---|---|---|---|

| Promptwatch | 10 (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Copilot, Meta AI, Mistral, Google AI) | Yes | Yes | No (free trial) |

| Profound | 9+ | Yes | No | No |

| Otterly.AI | ChatGPT, Perplexity, Google AI | Limited | No | Yes (limited) |

| Peec AI | ChatGPT, Perplexity, Claude | Basic | No | Yes (limited) |

| AthenaHQ | Multiple | Yes | No | No |

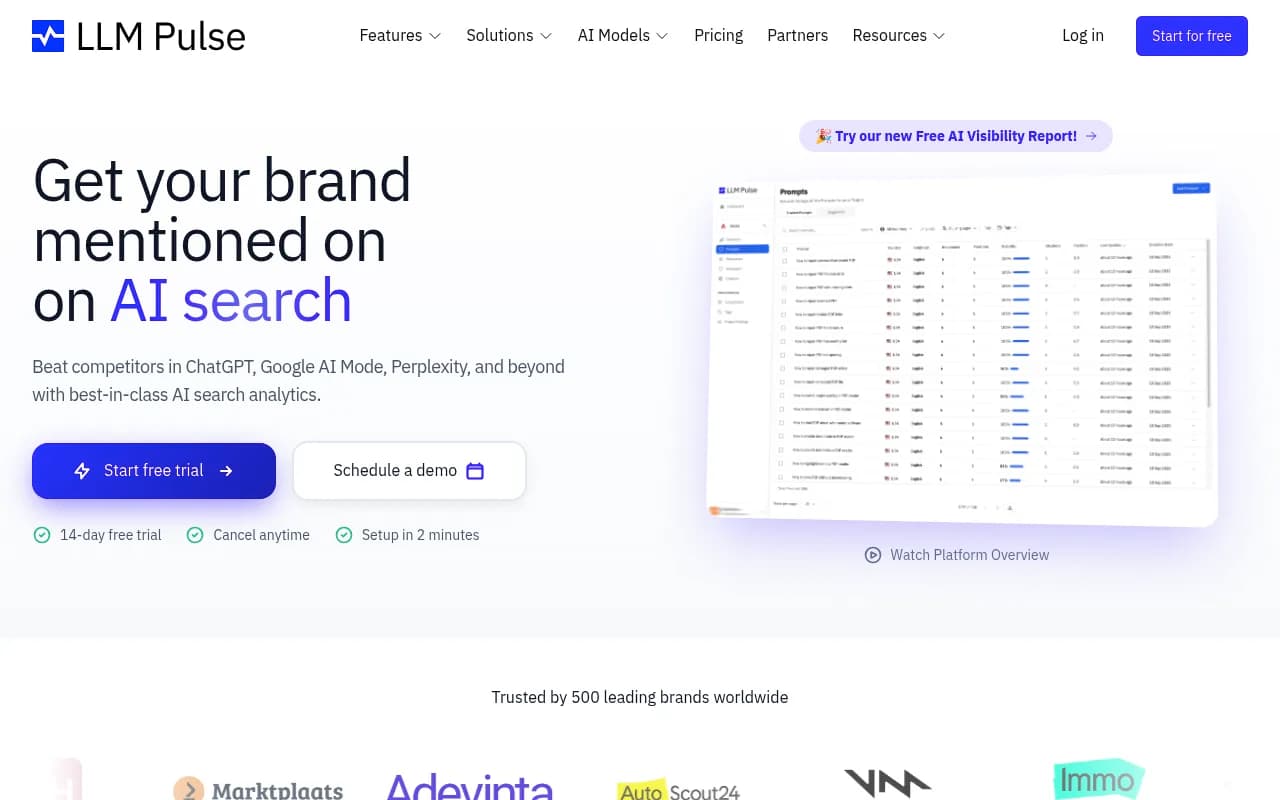

| LLM Pulse | ChatGPT, Perplexity, others | Basic | No | Yes |

For content gap analysis and optimization

If monitoring is step one, finding the specific gaps is step two. Promptwatch's Answer Gap Analysis shows exactly which prompts competitors are visible for that you're not — down to the specific topics and questions AI models want answered but can't find on your site. That's the input you need to create content that actually moves the needle.

For more traditional content optimization that feeds into AI visibility, MarketMuse and Clearscope are strong options for building topical authority.

For competitive intelligence

Understanding what your competitors are doing — where they're getting cited, what content is driving their visibility, which prompts they're winning — requires dedicated competitive intelligence. Similarweb gives you traffic intelligence, while SpyFu surfaces competitor keyword strategies that often correlate with AI visibility.

What to do this week

If you've read through these seven signs and recognized your situation in more than two or three of them, here's a practical starting point:

Run 10-15 prompts that represent real customer questions in your category. Note which competitors appear and which don't. This gives you a baseline — a rough picture of where you stand before you invest in any tooling.

Then look at where your competitor is getting cited. Check their backlink profile in Ahrefs or Semrush. Look for industry publications, review sites, and Reddit threads where they're mentioned. That's your target list for building the same kind of third-party validation.

Finally, audit your own content for "AI-readiness." Is it structured clearly? Does it answer specific questions directly? Does it have the kind of factual specificity that makes it useful as a citation? If not, that's where to start.

The brands winning in AI search right now aren't necessarily the biggest or the best-funded. They're the ones who understood early that AI visibility is a separate discipline from traditional SEO — and started treating it that way. The gap is still closeable in 2026, but it won't be forever.