Key takeaways

- AI adoption among CI teams grew 76% year-over-year in 2025, but most teams are still doing manual research rather than running systematic workflows

- A modern CI workflow has four layers: collection, processing, analysis, and distribution — tools like Crayon and Klue handle different parts of this stack

- AI search data (what ChatGPT, Perplexity, and Gemini say about your competitors) is now a legitimate CI signal that most teams ignore

- You don't need a massive budget to start — a basic AI-powered CI stack can run under $200/month for mid-market teams

- The goal isn't more data. It's faster, more consistent delivery of competitive insight to the people who need it: sales, product, and marketing

Competitive intelligence has a distribution problem. Most CI teams are great at collecting information and terrible at getting it to the people who actually need it before it's stale. A sales rep loses a deal to a competitor on Friday. The CI team publishes an updated battlecard on Monday. That's not a workflow — that's a newsletter nobody asked for.

The good news: the tooling in 2026 is genuinely good. Crayon and Klue have matured significantly. AI models can process unstructured data at a speed no human team can match. And AI search engines — ChatGPT, Perplexity, Gemini — have quietly become one of the most useful (and underused) CI signals available.

This guide walks through how to actually build a workflow that works, not just a list of tools to buy.

Why most CI workflows break down

Before getting into the how, it's worth being honest about where things go wrong.

The typical CI workflow looks like this: someone on the marketing or PMM team monitors a handful of competitor websites, checks G2 reviews occasionally, reads competitor newsletters, and produces a quarterly competitive landscape document that's already outdated by the time it's distributed.

The problems are structural:

- Collection is manual and inconsistent. When the person who does it goes on vacation, nothing gets collected.

- Analysis is subjective. Without a standard framework, different people draw different conclusions from the same data.

- Distribution is passive. A Confluence page nobody visits isn't distribution.

- There's no feedback loop. CI teams rarely know which insights actually influenced decisions.

AI doesn't fix all of these problems automatically. But it does make the first two dramatically easier, which frees up human time for the last two.

The four-layer CI stack

Think of a modern CI workflow as four layers, each with distinct jobs:

| Layer | Job | Example tools |
|---|---|---|
| Collection | Monitor competitors across sources automatically | Crayon, Feedly, Page Modified |
| Processing | Turn raw signals into structured, usable data | Klue, Claude, ChatGPT |
| Analysis | Find patterns, prioritize insights, generate recommendations | Klue AI, custom LLM workflows |
| Distribution | Get the right intel to the right person at the right time | Klue battlecards, Highspot, Slack |

Most teams invest heavily in collection and almost nothing in distribution. That's backwards. The value of CI is proportional to how often it changes decisions, not how much data you have.

Layer 1: Collection — what to monitor and how

Automated monitoring with Crayon

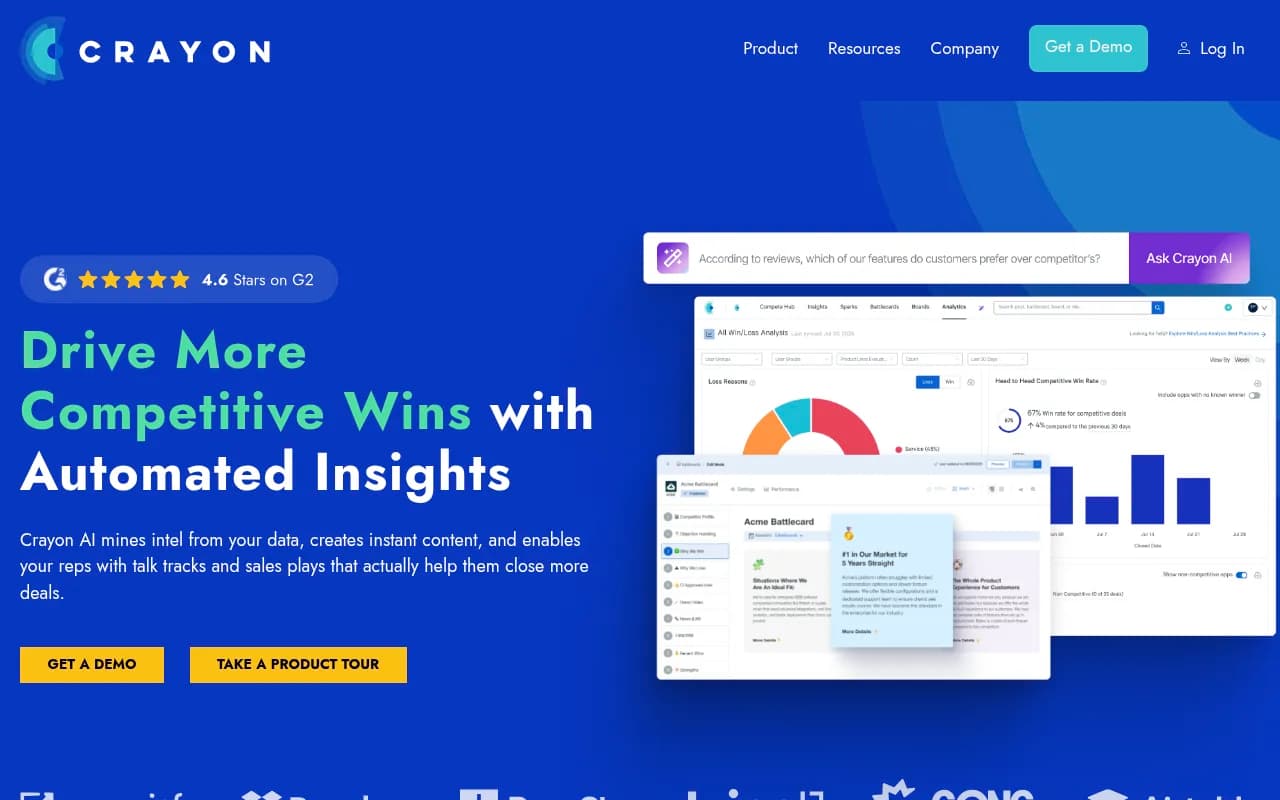

Crayon is built specifically for competitive signal collection. It monitors competitor websites for changes (pricing pages, product pages, job postings), tracks review sites like G2 and Capterra, and surfaces news mentions — all automatically.

What Crayon does well: it catches the observable signals. A competitor quietly changes their pricing page at 11pm on a Tuesday. Crayon sees it. You see it in your feed the next morning. Without automation, that change might go unnoticed for weeks.

What it doesn't do: it can't tell you what the change means or what you should do about it. That's the job of the processing and analysis layers.

Web change detection for the gaps

For competitors that Crayon doesn't cover deeply, or for specific pages you want to watch closely, a tool like Page Modified fills the gap.

Set up monitoring on competitor pricing pages, feature comparison pages, and careers pages (hiring patterns are a surprisingly reliable signal of strategic direction).
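
Conceptually, page-change monitoring comes down to fingerprinting a page and comparing it against the last fingerprint you stored. The sketch below illustrates that idea only; it is not how Page Modified or Crayon work internally. It assumes you already have the page text (fetching and scheduling are left out), and `normalize`, `fingerprint`, and `detect_change` are hypothetical helper names.

```python
import hashlib
import re
from typing import Optional, Tuple


def normalize(page_text: str) -> str:
    """Collapse whitespace and lowercase so cosmetic edits don't raise alerts."""
    return re.sub(r"\s+", " ", page_text).strip().lower()


def fingerprint(page_text: str) -> str:
    """Return a SHA-256 hex digest of the normalized page text."""
    return hashlib.sha256(normalize(page_text).encode("utf-8")).hexdigest()


def detect_change(previous: Optional[str], page_text: str) -> Tuple[bool, str]:
    """Compare the page against the stored fingerprint.

    Returns (changed, new_fingerprint). With no stored fingerprint the
    first run only records a baseline and reports no change.
    """
    current = fingerprint(page_text)
    changed = previous is not None and previous != current
    return changed, current


# Made-up pricing page snapshots for illustration.
_, stored = detect_change(None, "Pro plan: $49 per user / month")
reflowed, _ = detect_change(stored, "Pro plan:   $49 per user / month")
repriced, _ = detect_change(stored, "Pro plan: $59 per user / month")
print(reflowed, repriced)  # False True
```

Normalizing before hashing is a deliberate trade-off: it suppresses alerts for whitespace-only edits, at the cost of also ignoring capitalization changes.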

AI search as a CI signal

Here's the layer most teams miss entirely: what AI engines say about your competitors.

When a prospect asks ChatGPT "what's the best [category] tool for [use case]," the answer shapes their consideration set before they ever visit a website. If your competitor is consistently recommended and you're not, that's a competitive disadvantage — and it's measurable.

Tools like Promptwatch track exactly this: which brands AI models recommend, for which prompts, and how that changes over time. The citation data is genuinely useful for CI — you can see which competitors are being cited by Perplexity, what sources those citations come from, and which topics they're winning on.

This isn't just a vanity metric. If a competitor is being recommended for a use case you also serve, that's an answer gap you can close with content. If they're not being recommended for something they claim to do, that's a positioning weakness you can exploit.

Social listening and review monitoring

Brand24 and similar tools catch mentions across social, forums, and review sites. The signal-to-noise ratio is low, but the high-signal moments (a competitor's product outage going viral on Reddit, a CEO making a controversial statement) are worth catching.

For B2B specifically, G2 and Capterra reviews are gold. Competitors' negative reviews tell you exactly what their customers wish were different — which is a direct input to your positioning.

Layer 2: Processing — turning signals into structured data

Raw signals are noise. The processing layer is where you turn "competitor updated their pricing page" into "competitor moved to seat-based pricing, which creates an opportunity with our usage-based model."

What Klue does here

Klue's core job is ingesting competitive signals and helping CI teams curate them into structured intel. Its AI layer (the Compete Agent, launched in 2026) can process unstructured data from sales calls, identify patterns across buyer feedback, and generate updated competitor profiles automatically.

The practical workflow: Klue ingests signals from Crayon, your CRM, Gong call recordings, and other sources. The AI surfaces the most relevant signals, flags what's changed, and suggests updates to existing battlecards. A human reviews and approves before anything goes to sales.

That human review step matters. AI is good at pattern recognition and bad at strategic judgment. A competitor posting 40 engineering jobs in a new city might mean they're building a new product, expanding support, or just growing — the AI can flag it, but a human needs to interpret it.

Using LLMs for processing tasks

For teams that want to go deeper without buying every enterprise platform, Claude and ChatGPT are genuinely useful for specific processing tasks:

- Summarizing competitor earnings calls or investor presentations

- Extracting key claims from competitor blog posts and whitepapers

- Comparing messaging across multiple competitor websites

- Analyzing patterns in G2 reviews (e.g., "what are the top 5 complaints about Competitor X?")

The key is standardizing your prompts. A shared prompt library for common CI tasks — competitor profile updates, win/loss analysis, messaging comparison — means different team members get consistent outputs. Unkover's 2026 CI guide makes a good point here: most teams use AI ad hoc, which produces inconsistent results. A prompt library turns ad hoc into repeatable.
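
A shared prompt library can be as simple as a dictionary of named templates that everyone fills in the same way. The sketch below uses Python's standard `string.Template`; the task names, the template wording, and the `build_prompt` helper are illustrative assumptions, not taken from any particular tool.

```python
from string import Template

# Hypothetical shared library: one template per recurring CI task.
PROMPT_LIBRARY = {
    "review_complaints": Template(
        "You are a competitive intelligence analyst.\n"
        "From the reviews below, list the top $n complaints about $competitor.\n"
        "For each complaint, give a one-line summary and one supporting quote.\n\n"
        "Reviews:\n$reviews"
    ),
    "messaging_comparison": Template(
        "Compare the homepage messaging of $competitor_a and $competitor_b.\n"
        "Return a table with columns: claim, audience, proof offered.\n\n"
        "Page A:\n$page_a\n\nPage B:\n$page_b"
    ),
}


def build_prompt(task: str, **fields: str) -> str:
    """Fill in a library template; fails loudly on unknown tasks or missing fields."""
    if task not in PROMPT_LIBRARY:
        raise KeyError(f"Unknown CI task: {task!r}")
    # Template.substitute raises KeyError when a placeholder is not supplied.
    return PROMPT_LIBRARY[task].substitute(**fields)


prompt = build_prompt(
    "review_complaints",
    n="5",
    competitor="Competitor X",
    reviews="Support took a week to reply.",
)
print(prompt)
```

Using `substitute` rather than `safe_substitute` means a forgotten field raises an error instead of silently sending a half-filled prompt, which is the consistency a library is meant to provide.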

Processing win/loss data

Win/loss analysis is where CI gets closest to revenue. Klue's Win/Loss product captures buyer feedback through interviews and surveys, then uses AI to surface patterns. The output isn't just "we lost because of price" — it's "we lost to Competitor X in deals over $50K when the champion was in engineering rather than marketing."

That level of specificity changes what sales does in the next deal.
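
To make the idea of segment-level loss patterns concrete, here is a minimal sketch that groups deal records by competitor, deal size band, and champion role. It is not Klue's method. The record fields (`competitor`, `amount`, `champion_role`, `won`), the $50K threshold default, and the sample deals are all made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Segment = Tuple[str, str, str]  # (competitor, deal size band, champion role)


def loss_rate_by_segment(
    deals: Iterable[dict], threshold: int = 50_000
) -> Dict[Segment, float]:
    """Return the share of deals lost in each segment."""
    counts: Dict[Segment, List[int]] = defaultdict(lambda: [0, 0])  # [losses, total]
    for deal in deals:
        band = "over_threshold" if deal["amount"] > threshold else "at_or_under_threshold"
        segment = (deal["competitor"], band, deal["champion_role"])
        counts[segment][1] += 1
        if not deal["won"]:
            counts[segment][0] += 1
    return {segment: losses / total for segment, (losses, total) in counts.items()}


# Invented sample records, not real win/loss data.
sample_deals = [
    {"competitor": "Competitor X", "amount": 80_000, "champion_role": "engineering", "won": False},
    {"competitor": "Competitor X", "amount": 65_000, "champion_role": "engineering", "won": False},
    {"competitor": "Competitor X", "amount": 70_000, "champion_role": "marketing", "won": True},
    {"competitor": "Competitor X", "amount": 20_000, "champion_role": "engineering", "won": True},
]
rates = loss_rate_by_segment(sample_deals)
for segment, rate in rates.items():
    print(segment, f"{rate:.0%}")
```

With only a handful of deals per segment the rates are anecdotes, not patterns, so treat small segments as prompts for a follow-up interview rather than conclusions.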

Layer 3: Analysis — finding what actually matters

More data doesn't mean better decisions. The analysis layer is about prioritization: which competitive moves require a response, which are noise, and what the response should be.

A simple prioritization framework

Not every competitive signal deserves equal attention. A useful filter:

- High urgency, high impact: Competitor launches a feature that directly addresses your biggest weakness. Respond within days.

- Low urgency, high impact: Competitor is quietly winning in a segment you want to enter. Add to quarterly strategic review.

- High urgency, low impact: Competitor runs a promotional campaign. Monitor, don't react.

- Low urgency, low impact: Competitor changes their blog design. Ignore.

Most CI teams spend too much time on the bottom two categories because they're easy to track and easy to report on. The top two require judgment.
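
The four quadrants above can be written down as a lookup table, which is useful if you later want automation to apply the same rules your team uses. This is a minimal sketch; the `Level` enum and `recommended_action` function are hypothetical names, and the action strings simply restate the list above.

```python
from enum import Enum


class Level(Enum):
    HIGH = "high"
    LOW = "low"


# (urgency, impact) -> recommended response, mirroring the four quadrants.
RESPONSE_MATRIX = {
    (Level.HIGH, Level.HIGH): "Respond within days",
    (Level.LOW, Level.HIGH): "Add to quarterly strategic review",
    (Level.HIGH, Level.LOW): "Monitor, don't react",
    (Level.LOW, Level.LOW): "Ignore",
}


def recommended_action(urgency: Level, impact: Level) -> str:
    """Look up the response for a signal that has already been scored."""
    return RESPONSE_MATRIX[(urgency, impact)]


print(recommended_action(Level.LOW, Level.HIGH))  # Add to quarterly strategic review
```

The lookup is the easy part. Deciding whether a given signal is high or low impact is still the judgment call.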

Competitive analysis at scale with AI

For teams tracking many competitors across many dimensions, the manual analysis burden becomes unsustainable. This is where custom LLM workflows start to make sense.

A basic setup: use Zapier or n8n to automatically pull new competitive signals into a structured format, run them through a prompt that categorizes by urgency and impact, and route high-priority items to a Slack channel for immediate human review.

This isn't magic — it still requires someone to design the prompts and review the outputs. But it cuts the time spent triaging from hours to minutes.
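
If you build the triage step in code rather than in Zapier or n8n, the logic might look roughly like the sketch below. It assumes the model is asked to reply in JSON and that "high priority" means high urgency and high impact. `ask_model` is a hypothetical callable standing in for whichever LLM API you use, and the stub model exists only so the example runs on its own.

```python
import json
from typing import Callable, Dict, Iterable, List, Optional, Tuple

TRIAGE_PROMPT = (
    "Classify this competitive signal. Reply with JSON only, in the form "
    '{{"urgency": "high"|"low", "impact": "high"|"low"}}.\n\nSignal: {signal}'
)
VALID = ("high", "low")


def parse_label(reply: str) -> Optional[Tuple[str, str]]:
    """Return (urgency, impact), or None if the reply is not usable."""
    try:
        data = json.loads(reply)
        urgency, impact = data["urgency"], data["impact"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return None
    if urgency not in VALID or impact not in VALID:
        return None
    return urgency, impact


def triage(signals: Iterable[str], ask_model: Callable[[str], str]) -> Dict[str, List[str]]:
    """Split signals into items for immediate human review and a backlog."""
    routed: Dict[str, List[str]] = {"slack_review": [], "backlog": []}
    for signal in signals:
        label = parse_label(ask_model(TRIAGE_PROMPT.format(signal=signal)))
        # Unparseable replies go to a human instead of being silently dropped.
        if label is None or label == ("high", "high"):
            routed["slack_review"].append(signal)
        else:
            routed["backlog"].append(signal)
    return routed


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call, keyed on words in the made-up signals."""
    if "pricing" in prompt:
        return '{"urgency": "high", "impact": "high"}'
    if "blog" in prompt:
        return '{"urgency": "low", "impact": "low"}'
    return "Sorry, I can't classify that."


routed = triage(
    ["Competitor X changed its pricing page", "Competitor Y redesigned its blog", "Unclear rumor"],
    stub_model,
)
print(routed)
```

Sending malformed replies to human review is a conservative default: it costs a little reviewer time but avoids losing a signal because the model ignored the output format.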

AI search gap analysis as competitive analysis

One specific analysis that's worth running quarterly: compare your AI search visibility against your top competitors across the prompts that matter to your buyers.

If a competitor is being recommended by ChatGPT for "best [your category] for [your target segment]" and you're not, that's a content gap — and it's specific enough to act on. You know the exact topic, the exact framing, and the exact competitor you're losing to.

This is what makes AI search data genuinely useful for CI, not just for marketing. It's not about vanity metrics — it's about understanding where competitors are winning the consideration phase before prospects ever reach your sales team.
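
The gap analysis itself is a simple comparison once the recommendations are recorded. The sketch below assumes you have logged, for each prompt and engine, which brands were recommended, whether by hand or from a monitoring tool's export. The nested-dictionary format, the `find_answer_gaps` helper, and the brand names are assumptions for illustration, not any vendor's data model.

```python
from typing import Dict, List


def find_answer_gaps(
    recommendations: Dict[str, Dict[str, List[str]]],
    our_brand: str,
    competitors: List[str],
) -> List[dict]:
    """List prompt/engine pairs where a tracked competitor is recommended and we are not.

    recommendations maps prompt -> engine -> list of recommended brands.
    """
    gaps = []
    for prompt, by_engine in recommendations.items():
        for engine, brands in by_engine.items():
            if our_brand in brands:
                continue
            winners = [c for c in competitors if c in brands]
            if winners:
                gaps.append({"prompt": prompt, "engine": engine, "competitors": winners})
    return gaps


# Invented observations, not real AI search results.
observed = {
    "best CI tool for mid-market": {
        "chatgpt": ["Competitor X", "Competitor Y"],
        "perplexity": ["Our Brand", "Competitor X"],
    },
    "best CI tool for startups": {
        "chatgpt": ["Our Brand"],
        "gemini": ["Other Vendor"],
    },
}
gaps = find_answer_gaps(observed, "Our Brand", ["Competitor X", "Competitor Y"])
for gap in gaps:
    print(gap)
```

Each gap names the prompt, the engine, and the competitors being recommended, which is the level of specificity the content team needs to act on it.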

Layer 4: Distribution — getting intel to the right people

This is where most CI programs fail. A battlecard that lives in Confluence is not competitive enablement. It's a document.

Battlecards that actually get used

Klue's battlecard functionality is built around this problem. Cards are designed to be short, specific, and delivered in context: inside Salesforce, inside Slack, or wherever else the rep is working when they need the information.

The format matters as much as the content. A battlecard that's three pages long won't be read in the middle of a sales call. The best battlecards answer three questions: what does the competitor claim, why is that claim misleading or incomplete, and what's our proof point?

For sales enablement distribution, Highspot integrates well with Klue and ensures battlecards are version-controlled and accessible from within the sales workflow.

Automated alerts for time-sensitive intel

Not all competitive intel can wait for the weekly digest. Set up automated Slack alerts for:

- Competitor pricing changes (Crayon can trigger these)

- Competitor product launches or major announcements

- Significant shifts in AI search recommendations (if a competitor suddenly starts getting recommended for a prompt you're winning, that's worth knowing fast)

The goal is getting the right information to the right person before they need it, not after.
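
For the AI search alert in particular, the check is a comparison between two snapshots: which tracked competitors now appear for a prompt you were already winning. The sketch below is one way to express that under stated assumptions. The snapshot format and function name are hypothetical. The `{"text": ...}` dictionaries follow the simple message body that Slack incoming webhooks accept, and actually posting them is left out.

```python
from typing import Dict, Iterable, List, Set


def recommendation_shift_alerts(
    previous: Dict[str, Set[str]],
    current: Dict[str, Set[str]],
    our_brand: str,
    competitors: Iterable[str],
) -> List[Dict[str, str]]:
    """Build alert messages for competitors newly recommended on prompts we were winning.

    previous and current map prompt -> set of recommended brands.
    """
    tracked = set(competitors)
    alerts = []
    for prompt, before in previous.items():
        if our_brand not in before:
            continue  # only watch prompts where we were already recommended
        after = current.get(prompt, set())
        for brand in sorted((set(after) - set(before)) & tracked):
            alerts.append(
                {"text": f"{brand} is now recommended for '{prompt}', a prompt we were winning."}
            )
    return alerts


# Invented snapshots for illustration.
last_week = {"best CI tool for mid-market": {"Our Brand"}}
this_week = {"best CI tool for mid-market": {"Our Brand", "Competitor X"}}
alerts = recommendation_shift_alerts(last_week, this_week, "Our Brand", ["Competitor X"])
print(alerts)
```

AI answers vary from run to run, so in practice you would likely want a competitor to appear in several consecutive snapshots before alerting.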

The weekly CI digest

For strategic intel that doesn't require immediate action, a weekly digest works well. The format that tends to get read: three to five items, each with a one-sentence "so what" that tells the reader why they should care.

"Competitor X updated their pricing page" is not useful. "Competitor X moved to a seat-based model — this is a talking point for deals where we're competing on total cost of ownership" is useful.

Building the stack: practical recommendations by budget

| Budget | Stack | What you get |
|---|---|---|
| Under $200/month | ChatGPT/Claude + Feedly + Page Modified | Manual processing, decent collection, no distribution automation |
| $200–$800/month | Crayon (starter) + Klue (starter) + Zapier | Automated collection, structured battlecards, basic distribution |
| $800–$2,000/month | Crayon + Klue + AI search monitoring + Highspot | Full four-layer workflow, AI search visibility, sales integration |
| Enterprise | Full Crayon + Klue + custom LLM workflows + Gong integration | Real-time deal intelligence, win/loss at scale, automated battlecard updates |

The honest advice for most mid-market teams: start with Crayon or Klue (not both), add AI search monitoring, and invest the saved budget in making distribution actually work. One well-distributed insight beats ten insights that live in a folder nobody opens.

The cadence question

A CI workflow is only as good as its rhythm. Here's a cadence that works for most B2B teams:

- Daily: Automated alerts for high-urgency signals (pricing changes, product launches)

- Weekly: CI digest to sales and marketing — three to five curated insights with "so what" framing

- Monthly: Battlecard review and update cycle — what's changed, what needs refreshing

- Quarterly: Strategic competitive landscape review — where are competitors investing, what segments are they targeting, what does AI search data show about their positioning

The quarterly review is where AI search data becomes particularly valuable. Trends in AI recommendations take weeks to months to shift — a quarterly snapshot gives you enough data to see meaningful movement.

What AI genuinely can't do

Worth being direct about the limits. AI handles volume and pattern recognition well. It's bad at:

- Interpreting intent: A competitor's hiring pattern might mean five different things. AI can surface the pattern; a human needs to judge the intent.

- Relationship intelligence: What a competitor's VP of Sales said at a conference dinner doesn't show up in any feed. Human networks still matter.

- Strategic judgment: Deciding whether to respond to a competitive move, and how, requires context that no AI system has.

- Knowing what's missing: AI can only analyze what it can see. The most important competitive intelligence is often what competitors are deliberately not saying.

The best CI workflows treat AI as a force multiplier for human judgment, not a replacement for it.

Getting started: a 30-day pilot

If you're starting from scratch, a 30-day pilot is the right move before investing in platforms:

- Week 1: Define your top three to five competitors and the five to ten prompts your buyers use to evaluate tools in your category. Run those prompts manually in ChatGPT, Perplexity, and Gemini. Document what you find.

- Week 2: Set up basic monitoring. Page Modified on competitor pricing and feature pages. Feedly for news. G2 alerts for new reviews.

- Week 3: Build two or three battlecards using the intel you've collected. Test them with two or three sales reps. Get feedback.

- Week 4: Review what worked, what was noise, and what was missing. Now you know what to invest in.

The pilot tells you which signals actually matter for your specific competitive situation. That's worth more than any vendor's feature list.

Competitive intelligence in 2026 isn't about having more data than your competitors. It's about having the right data, processed fast enough to be useful, and distributed to the people who can act on it. The tools exist to do this well. The workflow design is still mostly a human problem.