Key Takeaways

- LangChain is the best choice for developers building production LLM applications who need orchestration frameworks and agent workflows -- it's open-source and free

- OpenAI Playground offers the most polished testing interface for GPT models with side-by-side comparisons and parameter tuning, plus $5 in free credits

- Anthropic Console is the go-to platform if you're building specifically with Claude models and need enterprise-grade API access

- Poe gives you instant access to 100+ AI models (GPT, Claude, Gemini, DeepSeek) in one interface -- ideal for power users who want to compare responses across models

- Vertex AI is the enterprise option for companies already on Google Cloud who need production-scale deployment, MLOps tools, and access to 200+ foundation models

Google AI Studio is a solid prompt engineering interface for Gemini models, but it's limited to Google's ecosystem. If you're testing prompts across multiple model providers, building production applications, or need more advanced orchestration capabilities, you'll want to look at alternatives.

The main reasons people look for Google AI Studio alternatives: multi-model support (testing prompts across OpenAI, Anthropic, and others), production deployment features, better developer tooling, or simply wanting a platform that isn't locked into one vendor's models.

LangChain

LangChain is a development framework, not a prompt playground -- but it's the most important alternative if you're building actual applications with LLMs instead of just testing prompts. Where Google AI Studio stops at prompt engineering, LangChain gives you the building blocks to create production systems.

The core difference: LangChain provides abstractions for chaining multiple LLM calls together, managing conversation memory, connecting to external data sources, and building autonomous agents. You can use any model provider (OpenAI, Anthropic, Google, local models) through a unified interface. The open-source framework is free forever, and LangSmith (their observability platform) includes 5,000 free traces per month.
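The pattern described above (one interface over any provider, chained calls, and conversation memory) can be sketched in plain Python. This is an illustration of the idea only, not LangChain's actual API: `ChatModel`, `EchoModel`, `Conversation`, and `chain` are hypothetical names, and the stub model stands in for a real provider so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ChatModel(Protocol):
    """Anything that turns a prompt into text counts as a model here."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Hypothetical stub provider: tags the prompt instead of calling an API."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class Conversation:
    """Keeps prior turns so each new call sees the whole history."""

    model: ChatModel
    history: list[str] = field(default_factory=list)

    def ask(self, user_message: str) -> str:
        self.history.append(f"user: {user_message}")
        reply = self.model.complete("\n".join(self.history))
        self.history.append(f"assistant: {reply}")
        return reply


def chain(first: ChatModel, second: ChatModel, prompt: str) -> str:
    """Feed the first model's output into the second model."""
    return second.complete(first.complete(prompt))


if __name__ == "__main__":
    print(chain(EchoModel("a"), EchoModel("b"), "hello"))
```

Swapping providers means passing a different object that implements `complete`; the chaining and memory code does not change. A framework adds the real provider adapters, retries, and tool integrations on top of this shape.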

What it does better than Google AI Studio: Multi-model support, agent orchestration, production deployment patterns, conversation memory management, integration with vector databases and retrieval systems. If you're building a chatbot that needs to query your company's knowledge base or an agent that can use tools, LangChain is built for that. Google AI Studio is built for testing individual prompts.

What it does worse: There's no visual interface for casual prompt testing. You write code. The learning curve is steeper if you just want to quickly test a prompt variation.

Pricing is straightforward: the open-source frameworks (LangChain, LangGraph) are free. LangSmith starts at 5,000 free traces/month, then paid plans scale with usage. You still pay your model provider (OpenAI, Anthropic, etc.) separately.

Best for: Developers building production LLM applications who need orchestration, memory, tool use, and multi-step reasoning. Not for non-technical users who just want to test prompts.

OpenAI Playground

OpenAI Playground is the direct competitor to Google AI Studio for prompt testing -- but for OpenAI's GPT models instead of Gemini. The interface is more polished than Google's, with better parameter controls and the ability to compare multiple model outputs side by side.

The testing experience is genuinely better. You can adjust temperature, top-p, frequency penalty, and presence penalty with real-time feedback. The side-by-side comparison view lets you run the same prompt through GPT-4o, GPT-4, and GPT-3.5 simultaneously to see quality differences. Google AI Studio has basic parameter controls but lacks the comparison features.
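Outside the Playground, the same comparison can be approximated by sending one prompt to several models with identical sampling settings. The sketch below only builds the request bodies and sends nothing. The four sampling fields are request parameters of OpenAI's Chat Completions API; the specific values, the model list, and the `build_payloads` helper are assumptions chosen for illustration.

```python
import json

# Sampling parameters named in the text. The values are example settings,
# not recommendations.
SAMPLING = {
    "temperature": 0.7,
    "top_p": 1.0,
    "frequency_penalty": 0.0,
    "presence_penalty": 0.0,
}

# Model IDs assumed for the comparison described above.
MODELS = ["gpt-4o", "gpt-4", "gpt-3.5-turbo"]


def build_payloads(prompt, models=MODELS, sampling=SAMPLING):
    """Return one request body per model, identical except for `model`."""
    return [
        {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
            **sampling,
        }
        for model in models
    ]


if __name__ == "__main__":
    for body in build_payloads("Summarize this paragraph in one sentence."):
        print(json.dumps(body))
```

Holding the sampling settings fixed across models is what makes the outputs comparable: any difference in quality then comes from the model rather than from a stray temperature change.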

What it does better: Model comparison interface, more granular parameter tuning, better documentation with inline examples, cleaner UI. The "View code" button generates production-ready API calls in Python, Node.js, or cURL -- Google AI Studio has this too, but OpenAI's code samples are more complete.

What it does worse: You're locked into OpenAI models. No access to Claude, Gemini, or open-source models. If you want to test how your prompt performs across different model families, you need multiple platforms.

Pricing is pay-as-you-go with no monthly fees. GPT-4o costs $2.50 per 1M input tokens and $10 per 1M output tokens. GPT-3.5 Turbo is $0.50 per 1M input tokens. New accounts get $5 in free credits. Google AI Studio's free tier is more generous (higher rate limits), but OpenAI's pricing is competitive once you're in production.
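To make the per-token prices concrete, here is a small sketch of the arithmetic using the GPT-4o rates and the $5 credit quoted above. The request size (1,000 input tokens and 500 output tokens) is an assumed example workload, and the function names are illustrative.

```python
# Prices from the text, in USD per 1M tokens.
GPT_4O_INPUT_PRICE = 2.50
GPT_4O_OUTPUT_PRICE = 10.00
FREE_CREDIT_USD = 5.00


def request_cost_usd(input_tokens, output_tokens, input_price, output_price):
    """Cost of one request given per-1M-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def requests_covered(credit_usd, cost_per_request_usd):
    """Whole number of requests a credit balance pays for."""
    return int(credit_usd // cost_per_request_usd)


if __name__ == "__main__":
    # Assumed workload: 1,000 input tokens and 500 output tokens per request.
    cost = request_cost_usd(1_000, 500, GPT_4O_INPUT_PRICE, GPT_4O_OUTPUT_PRICE)
    print(f"per request: ${cost:.4f}")
    print(f"requests covered by credit: {requests_covered(FREE_CREDIT_USD, cost)}")
```

Under these assumptions each request costs $0.0075, so the $5 credit covers 666 requests. Output tokens dominate the bill here even though there are half as many of them.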

Best for: Developers and teams building specifically with GPT models who want the best prompt testing experience for OpenAI's ecosystem. If you need multi-model testing, look elsewhere.

Anthropic Console

Anthropic Console is the developer platform for Claude models -- think of it as the Claude equivalent of Google AI Studio. If you're building with Claude instead of Gemini, this is where you test prompts and manage API access.

The prompt testing interface (Workbench) is clean and functional. You can test Claude 3.5 Sonnet, Claude 3 Opus, and other models with adjustable parameters. The main advantage over Google AI Studio: Claude models are genuinely better at certain tasks. Claude 3.5 Sonnet consistently outperforms Gemini 3 Pro on coding tasks, long-context reasoning, and following complex instructions. If your use case demands those capabilities, Google AI Studio is the wrong place to be testing.

What it does better than Google AI Studio: Access to Claude models (obviously), better performance on coding and analysis tasks, more transparent pricing documentation. The API reference is more detailed. Context length is not an advantage, though: Claude's window is 200K tokens, while Gemini models in Google AI Studio offer up to 1M tokens.

What it does worse: No multi-model support. You're locked into Anthropic's models. The free tier is less generous -- you get $5 in initial credits, then it's pay-as-you-go. Google AI Studio has higher free rate limits.

Pricing: Claude 3.5 Sonnet costs $3 per 1M input tokens and $15 per 1M output tokens. Claude 3 Opus (the most capable model) is $15 per 1M input and $75 per 1M output. Claude 3.5 Haiku (the fastest model) is $1 per 1M input and $5 per 1M output. More expensive than Gemini, but the quality difference justifies it for many use cases.
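A quick way to see what the price gap means in practice is to run one workload through all three rate cards. The sketch below uses the prices quoted above; the monthly volume (10M input tokens, 2M output tokens) is an assumed example, and the helper names are illustrative.

```python
# (input, output) prices from the text, in USD per 1M tokens.
CLAUDE_PRICES = {
    "Claude 3.5 Sonnet": (3, 15),
    "Claude 3 Opus": (15, 75),
    "Claude 3.5 Haiku": (1, 5),
}


def monthly_cost_usd(input_millions, output_millions, prices):
    """Cost for a token volume expressed in millions of tokens."""
    input_price, output_price = prices
    return input_millions * input_price + output_millions * output_price


def compare(input_millions, output_millions):
    """Cost of the same workload on every model in the price table."""
    return {
        name: monthly_cost_usd(input_millions, output_millions, prices)
        for name, prices in CLAUDE_PRICES.items()
    }


if __name__ == "__main__":
    # Assumed workload: 10M input tokens and 2M output tokens per month.
    for name, cost in compare(10, 2).items():
        print(f"{name}: ${cost}")
```

For this mix the totals are $60 for Sonnet, $300 for Opus, and $20 for Haiku. Because each listed model charges five times as much for output as for input, the ratios hold for any mix: Opus costs 5x Sonnet, and Sonnet costs 3x Haiku.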

Best for: Developers who need Claude's specific strengths (coding, analysis, instruction-following) and are willing to pay a premium for quality. If you're comparing models, you'll need this alongside Google AI Studio and OpenAI Playground.

Poe

Poe is a different animal -- it's a multi-model chat interface from Quora that gives you access to GPT-4, Claude, Gemini, DeepSeek, and 100+ other models in one place. Instead of switching between Google AI Studio, OpenAI Playground, and Anthropic Console, you test prompts across all major models from a single interface.

The value proposition: instant model comparison. You type a prompt once and see how GPT-4, Claude 3.5 Sonnet, Gemini 3 Pro, and others respond. This is incredibly useful for understanding which model is best for your specific use case. Google AI Studio can't do this -- you're stuck testing only Gemini models.

What it does better: Multi-model access, group chat features (collaborate with teammates), custom bot creation, mobile apps. The free tier gives you daily points to test multiple models. If you're doing AI research or trying to pick the right model for a project, Poe saves hours of platform-hopping.

What it does worse: It's a chat interface, not a developer platform. No API access from the free tier, limited parameter controls, no code generation features. You can't export your prompts to production code like you can in Google AI Studio or OpenAI Playground.

Pricing: Free tier with daily points (enough for casual testing). Standard plan is $19.99/month for ~1M points. Higher tiers go up to $250/month. The free tier is genuinely usable -- you're not locked out after a few queries.

Best for: Power users, researchers, and teams who need to compare AI responses across multiple models before committing to one. Also useful for non-developers who want access to multiple AI models without managing API keys.

PromptBase

PromptBase is a marketplace, not a prompt engineering platform -- but it's worth mentioning because it solves a different problem. Instead of building prompts from scratch in Google AI Studio, you buy tested prompts from expert creators.

The catalog has 260,000+ prompts for ChatGPT, Midjourney, Gemini, and other models. You pay $2-15 for a prompt that someone else spent hours optimizing. This makes sense if you need a specific output (product descriptions, image generation styles, data extraction patterns) and don't want to iterate through 50 variations yourself.

What it does better than Google AI Studio: Access to proven prompts, community knowledge, time savings. If you're not a prompt engineering expert, buying a $5 prompt that works is cheaper than spending 3 hours testing variations.

What it does worse: You're buying prompts, not building them. No testing interface, no parameter controls, no API integration. It's a different tool for a different job.

Pricing: Individual prompts cost $2-15. PromptBase Select is $14/month (annual) or $19/month (monthly) for 10 downloads per month. If you're buying prompts regularly, the subscription pays for itself.

Best for: Non-developers and small teams who need working prompts fast. Also useful for prompt engineers looking for inspiration or starting points.

Vertex AI

Vertex AI is Google's enterprise AI platform -- think of it as Google AI Studio's big sibling for production deployments. You get access to Gemini models plus 200+ other foundation models (Claude, Llama, open-source models), custom model training, MLOps tools, and enterprise features like VPC integration and audit logging.

The key difference from Google AI Studio: Vertex AI is built for production systems, not prompt testing. You can deploy models at scale, set up monitoring and alerting, integrate with BigQuery for data pipelines, and manage multiple projects with team access controls. Google AI Studio is a playground; Vertex AI is a platform.

What it does better: Production deployment, multi-model support (not just Gemini), custom model training, enterprise security and compliance, integration with Google Cloud services. If you're a company already on Google Cloud, Vertex AI is the natural choice for production AI workloads.

What it does worse: Complexity. The learning curve is steep if you just want to test a prompt. Pricing is usage-based and can get expensive at scale. Google AI Studio's free tier is more generous for experimentation.

Pricing: Usage-based. Generative AI pricing starts at $0.0001 per 1,000 characters. Custom training costs vary by machine type and region. New customers get $300 in free credits. This is enterprise pricing -- you pay for what you use, and costs scale with production traffic.

Best for: Enterprises and development teams building production AI applications on Google Cloud who need deployment infrastructure, not just prompt testing. Overkill for individuals or small teams.

Hugging Face Inference API

Hugging Face Inference Providers gives you serverless access to 1,000+ AI models through a single API. Instead of managing accounts with OpenAI, Anthropic, Google, and others separately, you call one API that routes to 18+ inference providers.

The value here is flexibility and avoiding vendor lock-in. You can test your prompts across dozens of models (GPT, Claude, Gemini, Llama, Mistral, and hundreds of open-source models) without juggling API keys. Hugging Face handles the routing, scaling, and failover automatically.

What it does better than Google AI Studio: Multi-provider access, open-source model support, no vendor lock-in, OpenAI-compatible endpoints (easy migration). If you're building a product and want the flexibility to switch models based on cost or performance, this is the platform.
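"OpenAI-compatible" means the request shape stays the same and only the base URL, key, and model ID change. The sketch below builds, but does not send, the same chat request for two endpoints using only the standard library. The Hugging Face base URL, the model ID, and the placeholder keys are assumptions for illustration; check the provider documentation for current values.

```python
import json
from urllib.request import Request


def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Assumed endpoints and placeholder credentials, for illustration only.
OPENAI_BASE = "https://api.openai.com/v1"
HF_BASE = "https://router.huggingface.co/v1"

openai_req = build_chat_request(OPENAI_BASE, "sk-placeholder", "gpt-4o", "Hello")
hf_req = build_chat_request(
    HF_BASE, "hf_placeholder", "meta-llama/Llama-3.1-8B-Instruct", "Hello"
)

if __name__ == "__main__":
    for req in (openai_req, hf_req):
        print(req.get_method(), req.full_url)
```

Passing either object to `urllib.request.urlopen` would send it. Keeping the base URL and model ID in configuration rather than in code is what makes switching providers a one-line change.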

What it does worse: No visual prompt testing interface. You're working with API calls, not a playground. The free tier is limited -- you'll hit rate limits quickly if you're testing heavily.

Pricing: Free tier included. PRO plan is $9/month with additional credits. Pay-per-use based on provider rates (Hugging Face doesn't mark up the underlying model costs). Team and Enterprise plans available.

Best for: Developers building production applications who want multi-model flexibility and don't want to be locked into one provider's ecosystem. Not for non-technical users.

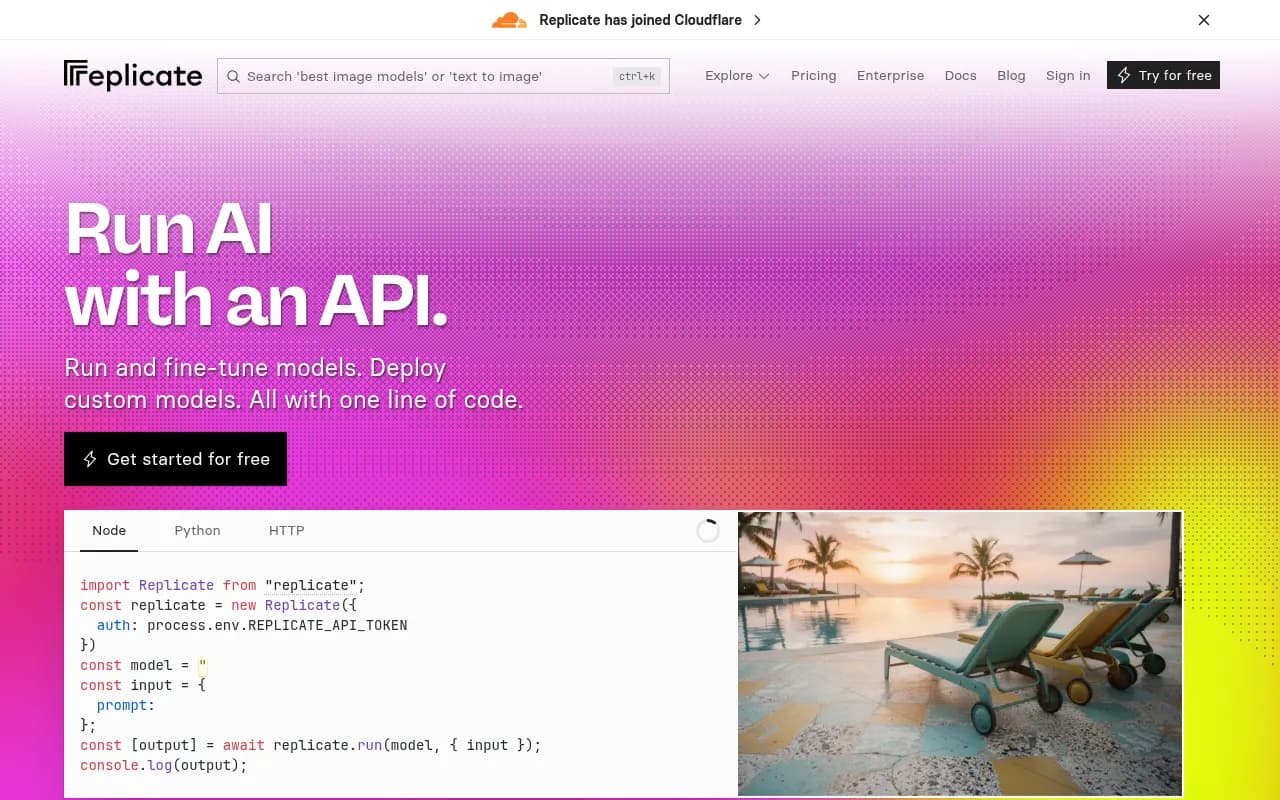

Replicate

Replicate is a cloud platform for running open-source AI models -- think of it as the deployment layer for models you find on Hugging Face. You don't test prompts here; you deploy models to production and pay only for compute time used.

The main use case: you want to run Stable Diffusion, Llama 3, Whisper, or other open-source models without managing infrastructure. Replicate handles scaling from zero to production traffic automatically. You call their API, they spin up the model on a GPU, run your inference, and shut it down.

What it does better than Google AI Studio: Open-source model support, pay-per-second pricing (no monthly fees), automatic scaling, custom model deployment. If you're using models that aren't available through Google, OpenAI, or Anthropic, Replicate is how you run them in production.

What it does worse: No prompt testing interface. No first-party models (no GPT, Claude, or Gemini access). You're paying for GPU compute time, which can get expensive for high-traffic applications.

Pricing: Pay-per-second compute time. T4 GPU costs $0.000225/second, L40S GPU costs $0.000975/second, A100 (80GB) GPU costs $0.0014/second. No monthly fees. $10 free credit to start. This is cheaper than running your own GPU servers but more expensive than API calls to OpenAI or Google for equivalent models.
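Per-second rates are hard to compare at a glance, so the sketch below converts the prices quoted above into hourly rates and estimates how long the $10 credit lasts. The rates and credit come from the text; the helper names are illustrative, and the calculation assumes the GPU is billed continuously for the whole period.

```python
# Rates from the text, in USD per second of GPU time.
GPU_USD_PER_SECOND = {
    "T4": 0.000225,
    "L40S": 0.000975,
    "A100 (80GB)": 0.0014,
}
FREE_CREDIT_USD = 10.0
SECONDS_PER_HOUR = 3600


def run_cost_usd(gpu, seconds):
    """Cost of keeping one GPU busy for the given number of seconds."""
    return GPU_USD_PER_SECOND[gpu] * seconds


def hourly_rates():
    """Per-second prices converted to USD per hour, rounded to cents."""
    return {
        gpu: round(run_cost_usd(gpu, SECONDS_PER_HOUR), 2)
        for gpu in GPU_USD_PER_SECOND
    }


def credit_hours(gpu, credit_usd=FREE_CREDIT_USD):
    """Hours of continuous compute that a credit balance buys."""
    return credit_usd / GPU_USD_PER_SECOND[gpu] / SECONDS_PER_HOUR


if __name__ == "__main__":
    print(hourly_rates())
    print(f"T4 hours on free credit: {credit_hours('T4'):.1f}")
```

This works out to $0.81 per hour on a T4, $3.51 on an L40S, and $5.04 on an A100 (80GB), and the $10 credit buys roughly 12.3 hours of T4 time. Since billing stops when the model is idle, real costs depend on how many seconds of inference your traffic actually generates.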

Best for: Developers who need to run open-source models in production without managing infrastructure. Also useful for fine-tuning custom models and deploying them at scale.

Which alternative should you choose?

If you're just testing prompts and want the simplest experience: stick with Google AI Studio for Gemini models, OpenAI Playground for GPT models, or Anthropic Console for Claude models. Pick based on which model family you're using.

If you need to compare responses across multiple models: Poe is the fastest way to test the same prompt on GPT, Claude, Gemini, and others simultaneously, without switching between platforms.

If you're building a production application: LangChain for orchestration and agent workflows, Hugging Face Inference API for multi-provider flexibility, or Vertex AI if you're already on Google Cloud and need enterprise features.

If you want to run open-source models: Replicate for deployment without infrastructure management, or Hugging Face for access to the model catalog.

The honest answer: most developers end up using multiple platforms. You test prompts in Google AI Studio or OpenAI Playground, build orchestration with LangChain, and deploy through Vertex AI or Hugging Face depending on your model choices. There's no single platform that does everything well.