Summary

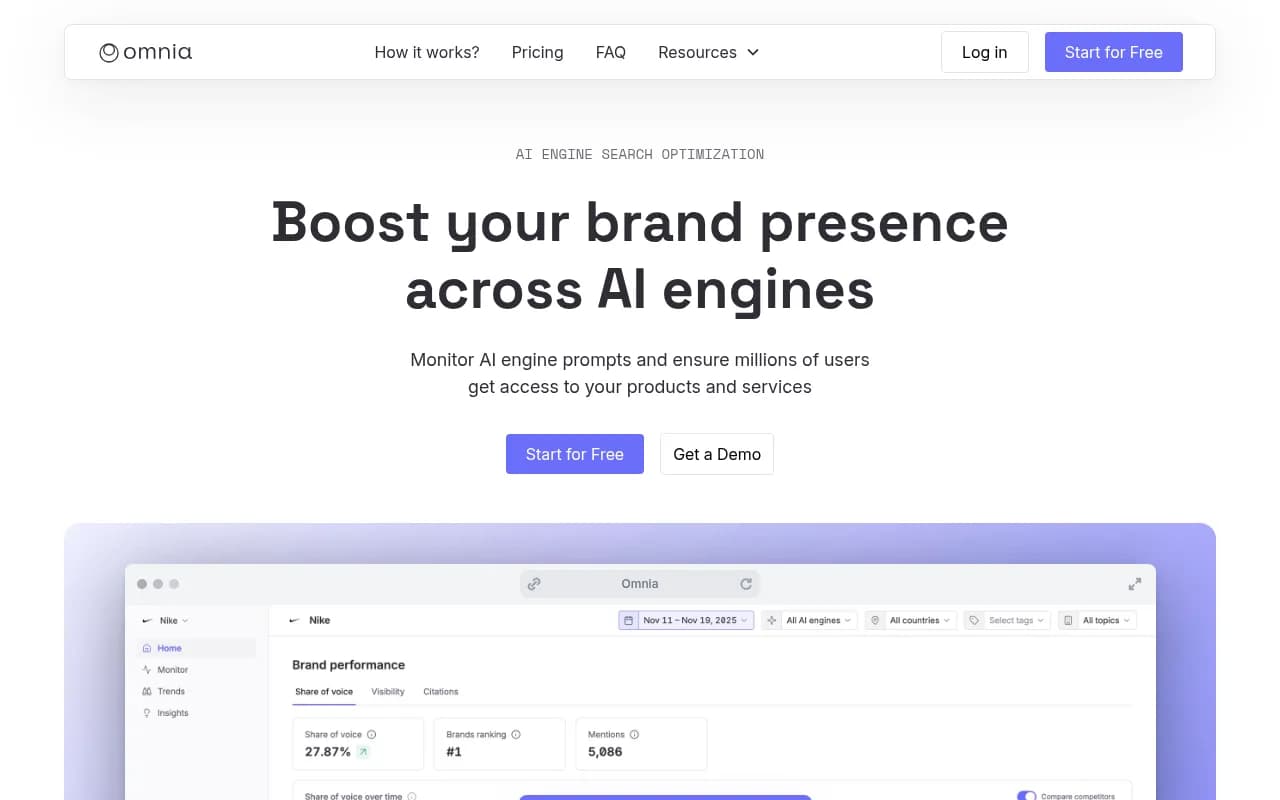

Tracking AI visibility used to mean opening ChatGPT, Perplexity, Claude, Gemini, and a dozen other AI models in separate tabs, manually typing the same prompts, and copy-pasting results into a spreadsheet. In 2026, that's no longer necessary, or sustainable. Modern AI visibility platforms let you monitor 10+ models from a single dashboard, track brand mentions and citations on a daily or weekly schedule, and identify content gaps that keep you out of AI-generated answers. This guide walks through a practical system for unified AI visibility tracking: which models to monitor, how to build prompt sets that match real buyer intent, which metrics actually matter, and how to turn monitoring data into content that ranks in AI search.

- Multi-model tracking is now table stakes: ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, Copilot, Grok, DeepSeek, Meta AI, and Mistral all surface different sources, so you need visibility across every model your audience actually uses

- Unified dashboards save hours: Platforms like Promptwatch consolidate tracking, citation analysis, and content gap identification in one interface

- Prompt sets replace keyword lists: AI visibility isn't about rankings—it's about which questions trigger mentions, and whether your content answers them

- Citation tracking reveals what's missing: Seeing which URLs AI models cite (and which they ignore) tells you exactly what content to create next

- The action loop matters more than the data: Monitoring alone doesn't improve visibility—you need tools that show gaps, generate content, and track results

Why tracking AI visibility across multiple models matters in 2026

AI search has fragmented discovery. People don't just Google anymore—they ask ChatGPT for product recommendations, use Perplexity for research, consult Claude for comparisons, and rely on Google AI Overviews for quick answers. Each model has its own training data, citation preferences, and update cadence. A brand that shows up in ChatGPT might be invisible in Perplexity. A source cited by Claude might never appear in Gemini.

That fragmentation creates a new problem: you can't optimize for AI visibility if you don't know where you're visible and where you're not. Manually checking each model is impractical—by the time you've typed the same prompt into 10 different interfaces, the data is already stale. You need a system that tracks all models simultaneously, compares your visibility across them, and surfaces patterns you can act on.

The shift from traditional SEO to AI visibility also changes what you're measuring. In SEO, you track keyword rankings, impressions, and click-through rates. In AI visibility, you track mention frequency, citation share, sentiment, and prompt coverage. A brand that ranks #1 in Google for "project management software" might never be mentioned when someone asks ChatGPT "what's the best tool for remote teams?" That's the gap unified tracking helps you find.

Which AI models you actually need to monitor

Not all AI models matter equally. Some have massive user bases and influence purchase decisions. Others are niche or experimental. In 2026, the core models worth tracking are:

- ChatGPT (OpenAI): Largest user base, strongest brand influence, frequent updates

- Perplexity: Research-focused, high citation volume, growing in B2B

- Google AI Overviews: Appears in search results, massive reach, SEO crossover

- Claude (Anthropic): Long-context reasoning, preferred for detailed comparisons

- Gemini (Google): Integrated with Google Workspace, strong in enterprise

- Copilot (Microsoft): Built into Windows and Office, high corporate adoption

- Grok (xAI): Real-time data access, X (formerly Twitter) integration, growing user base

- DeepSeek: Open-source alternative, strong technical community

- Meta AI: Integrated with Facebook, Instagram, WhatsApp—consumer-focused

- Mistral: European AI model, GDPR-compliant, growing in regulated industries

You don't need to track all of them from day one. Start with the three or four models your target audience actually uses. If you're B2B SaaS, prioritize ChatGPT, Perplexity, and Claude. If you're e-commerce, add Meta AI and Google AI Overviews. If you're targeting developers, include DeepSeek and Mistral.
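The audience guidance above can be captured as a small lookup so every team starts from the same model list. This is a minimal sketch; the segment keys are hypothetical, and it assumes the e-commerce and developer picks are layered on top of the B2B core rather than replacing it.

```python
# Hypothetical starter model sets built from the audience guidance above.
# Assumption: e-commerce and developer picks are added on top of the B2B core.
BASE_MODELS = ["ChatGPT", "Perplexity", "Claude"]

EXTRA_MODELS: dict[str, list[str]] = {
    "b2b_saas": [],
    "ecommerce": ["Meta AI", "Google AI Overviews"],
    "developers": ["DeepSeek", "Mistral"],
}


def starter_models(segments: list[str]) -> list[str]:
    """Return an ordered, de-duplicated model list for the given audience segments."""
    models = list(BASE_MODELS)
    for segment in segments:
        for model in EXTRA_MODELS[segment]:  # a KeyError flags an unknown segment
            if model not in models:
                models.append(model)
    return models
```

For example, `starter_models(["ecommerce", "developers"])` yields the three core models plus Meta AI, Google AI Overviews, DeepSeek, and Mistral, with no duplicates.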

The key is consistency: track the same prompts across all selected models so you can compare visibility and identify model-specific gaps. A unified dashboard makes this possible without manual effort.

How unified AI visibility tracking actually works

Most AI visibility platforms follow a similar technical approach:

- Prompt execution: The platform sends your predefined prompts to each AI model's API or interface, simulating real user queries

- Response capture: It records the full AI-generated answer, including text, citations, and any embedded links or sources

- Entity extraction: Natural language processing identifies brand mentions, competitor names, and cited URLs within each response

- Scoring and aggregation: The platform calculates visibility scores (mention frequency, citation share, sentiment) and aggregates data across models

- Change detection: It tracks how responses evolve over time—new mentions, dropped citations, sentiment shifts
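The first four stages can be sketched in a few functions. This is an illustrative sketch, not any platform's implementation: `query(model, prompt)` is a hypothetical stand-in for each model's API or interface, and "entity extraction" here is a naive whole-word brand match plus a URL regex rather than real NLP. Sentiment scoring is left out.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# Naive URL pattern: stops at whitespace or a closing bracket.
URL_RE = re.compile(r"https?://[^\s)\]]+")


@dataclass
class Response:
    model: str
    prompt: str
    text: str
    brands: set[str] = field(default_factory=set)
    urls: list[str] = field(default_factory=list)


def extract_entities(resp: Response, brands: list[str]) -> Response:
    """Entity extraction stand-in: whole-word, case-insensitive brand match plus URL regex."""
    for brand in brands:
        if re.search(rf"\b{re.escape(brand)}\b", resp.text, re.IGNORECASE):
            resp.brands.add(brand)
    resp.urls = [u.rstrip(".,;") for u in URL_RE.findall(resp.text)]
    return resp


def run_checks(prompts: list[str], models: list[str],
               query: Callable[[str, str], str], brands: list[str]) -> list[Response]:
    """Prompt execution and response capture: send every prompt to every model."""
    results = []
    for model in models:
        for prompt in prompts:
            text = query(model, prompt)  # hypothetical per-model API call
            results.append(extract_entities(Response(model, prompt, text), brands))
    return results


def mention_rate_by_model(results: list[Response], brand: str) -> dict[str, float]:
    """Scoring and aggregation: share of responses per model that mention `brand`."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for r in results:
        totals[r.model] = totals.get(r.model, 0) + 1
        hits[r.model] = hits.get(r.model, 0) + int(brand in r.brands)
    return {model: hits[model] / totals[model] for model in totals}
```

Running the same prompt list through every model is what makes the per-model rates comparable; change detection then works on stored copies of these results over time.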

The best platforms run these checks daily or weekly, depending on your plan. They store historical data so you can spot trends: "We were mentioned in 40% of ChatGPT responses last month, now it's 15%—what changed?"
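A "40% last month, 15% now" alert comes down to comparing stored per-prompt flags between two periods. A minimal sketch, assuming history is kept as one True/False "brand mentioned?" flag per prompt, per model, per period; the 10-point drop threshold is an arbitrary example value.

```python
# history[period][model] holds one True/False flag per tracked prompt:
# "was the brand mentioned in this model's answer?"
History = dict[str, dict[str, list[bool]]]


def mention_rate(flags: list[bool]) -> float:
    return sum(flags) / len(flags) if flags else 0.0


def detect_drops(history: History, prev: str, curr: str,
                 min_drop: float = 0.10) -> list[tuple[str, float, float]]:
    """Flag models whose mention rate fell by at least `min_drop` between two periods."""
    alerts = []
    for model, flags in history[curr].items():
        before = mention_rate(history[prev].get(model, []))
        after = mention_rate(flags)
        if before - after >= min_drop:
            alerts.append((model, before, after))
    return alerts
```

With 8 of 20 prompts mentioning the brand last month and 3 of 20 this month, `detect_drops` reports ChatGPT falling from 0.4 to 0.15.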

Some platforms also offer geographical tracking (how responses differ by country or region) and persona-based prompts (how answers change when you specify "for small businesses" vs "for enterprises"). These features matter if your audience is global or segmented.

The 4-step system for multi-model AI visibility tracking

Step 1: Build a prompt set that matches real buyer intent

AI visibility isn't about keywords—it's about prompts. A prompt is the full question or instruction a user gives an AI model. Unlike keywords, prompts are conversational, context-rich, and intent-specific.

Start by collecting real questions your customers ask. Pull from:

- Sales call transcripts and support tickets

- Reddit threads and Quora questions in your niche

- "People Also Ask" boxes in Google search results

- ChatGPT and Perplexity search logs (if you have access)

- Customer interviews and user research sessions

Turn those questions into prompt clusters. For example, if you sell email marketing software, your clusters might be:

- Comparison prompts: "What's the best alternative to Mailchimp for small businesses?"

- Use case prompts: "What email tool should I use for e-commerce abandoned cart campaigns?"

- Feature prompts: "Which email platform has the best automation workflows?"

- Problem-solving prompts: "How do I improve my email deliverability?"
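A prompt set is easier to maintain as structured data than as a loose list. This sketch stores each prompt with its cluster and a hypothetical source label, and drops near-duplicates (questions collected from several channels often differ only in punctuation or casing) before grouping by cluster.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedPrompt:
    cluster: str  # "comparison", "use_case", "feature", or "problem_solving"
    text: str
    source: str   # hypothetical label for where the question was collected


PROMPTS = [
    TrackedPrompt("comparison", "What's the best alternative to Mailchimp for small businesses?", "reddit"),
    TrackedPrompt("use_case", "What email tool should I use for e-commerce abandoned cart campaigns?", "sales_call"),
    TrackedPrompt("feature", "Which email platform has the best automation workflows?", "people_also_ask"),
    TrackedPrompt("problem_solving", "How do I improve my email deliverability?", "support_ticket"),
    TrackedPrompt("problem_solving", "How do I improve my email deliverability", "customer_interview"),
]


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so near-duplicates collide."""
    return " ".join(re.sub(r"[^\w\s]", "", text.lower()).split())


def by_cluster(prompts: list[TrackedPrompt]) -> dict[str, list[str]]:
    """Group prompts by intent cluster, keeping the first copy of each duplicate."""
    seen: set[str] = set()
    groups: dict[str, list[str]] = {}
    for p in prompts:
        key = normalize(p.text)
        if key in seen:
            continue
        seen.add(key)
        groups.setdefault(p.cluster, []).append(p.text)
    return groups
```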

Prioritize prompts you can actually win. If you're a startup competing with Mailchimp, you won't rank for "best email marketing software" (too broad, too competitive). But you might win "best email tool for Shopify stores under $50/month" (specific, winnable).

Aim for 20-50 prompts to start. That's enough to get meaningful data without overwhelming your tracking system. You can expand later as you identify gaps.

Step 2: Set up unified tracking across all target models

Once you have your prompt set, configure your tracking platform to monitor all selected AI models. Most platforms let you:

- Upload prompts in bulk (CSV or direct input)

- Select which models to query (ChatGPT, Perplexity, Claude, etc.)

- Set tracking frequency (daily, weekly, or on-demand)

- Define competitors to monitor alongside your brand

- Configure alerts for visibility changes (e.g. "notify me if we drop below 30% mention rate")
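The setup options above map naturally onto a small configuration object. A minimal sketch: the field names, the CSV column name `prompt`, and the alert message format are assumptions for illustration, not any platform's schema.

```python
import csv
import io
from dataclasses import dataclass

FREQUENCIES = {"daily", "weekly", "on-demand"}


@dataclass
class TrackingConfig:
    # Field names are hypothetical; each platform exposes these settings its own way.
    prompts: list[str]
    models: list[str]
    competitors: list[str]
    frequency: str = "weekly"
    alert_below: float = 0.30  # "notify me if we drop below 30% mention rate"

    def __post_init__(self) -> None:
        if self.frequency not in FREQUENCIES:
            raise ValueError(f"unsupported frequency: {self.frequency!r}")
        if not 0.0 <= self.alert_below <= 1.0:
            raise ValueError("alert_below must be a fraction between 0 and 1")


def load_prompts_csv(text: str) -> list[str]:
    """Bulk upload: read a CSV with a 'prompt' column (column name is an assumption)."""
    rows = csv.DictReader(io.StringIO(text))
    return [row["prompt"].strip() for row in rows if row["prompt"] and row["prompt"].strip()]


def mention_alerts(config: TrackingConfig, rates: dict[str, float]) -> list[str]:
    """Return one alert message per model whose mention rate is under the threshold."""
    return [
        f"{model}: mention rate {rate:.0%} is below {config.alert_below:.0%}"
        for model, rate in rates.items()
        if rate < config.alert_below
    ]
```

Validating frequency and threshold when the config is created catches typos before any prompts are sent.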

The platform will start running your prompts and collecting baseline data. This takes a few days to a week, depending on prompt volume and model response times.

During setup, pay attention to geographical and persona settings. If you operate in multiple countries, track prompts in each region—AI models often surface different sources based on user location. If you serve multiple customer segments, create persona-specific prompt variants ("for freelancers" vs "for agencies").
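Persona and region variants are a cross product of your base prompts. In this sketch, personas are appended to the prompt text, while regions are kept as a tag on the assumption that your platform applies location as a separate setting rather than as prompt wording.

```python
from itertools import product


def expand_variants(base_prompts: list[str], personas: list[str],
                    regions: list[str]) -> list[dict[str, str]]:
    """Cross each base prompt with persona suffixes and region tags."""
    variants = []
    for prompt, persona, region in product(base_prompts, personas, regions):
        # Persona becomes part of the question; an empty persona keeps the prompt generic.
        text = f"{prompt.rstrip('?')} {persona}?" if persona else prompt
        variants.append({"prompt": text, "persona": persona or "generic", "region": region})
    return variants
```

Two personas and two regions turn one base prompt into four tracked variants, so it is worth watching how quickly this multiplies your prompt count.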

Step 3: Analyze visibility patterns and identify gaps

Once you have baseline data, the real work begins: understanding why you're visible in some models but not others, and which content gaps are holding you back.

Start with the visibility heatmap. Most platforms show a grid with prompts on one axis and AI models on the other. Each cell indicates whether you were mentioned, cited, or ignored. Look for patterns:

- Model-specific gaps: Mentioned in ChatGPT but never in Perplexity? That model might rely on sources you're not present in (e.g. Reddit, YouTube, academic papers)

- Prompt-specific gaps: Visible for "best email tools" but not "email automation for e-commerce"? You're missing content that addresses that specific use case

- Competitor dominance: If competitors are mentioned in 80% of responses and you're at 10%, you're not just losing—you're invisible
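The heatmap and the first two gap patterns can be computed from a flat list of observations. A sketch under stated assumptions: each observation is a `(prompt, model, status)` tuple with status `cited`, `mentioned`, or `ignored`, and the 50-point dominance cutoff is an arbitrary illustrative value.

```python
from collections import defaultdict

VISIBLE = {"cited", "mentioned"}


def heatmap(observations: list[tuple[str, str, str]]) -> dict[str, dict[str, str]]:
    """Build grid[prompt][model] = status from (prompt, model, status) tuples."""
    grid: dict[str, dict[str, str]] = defaultdict(dict)
    for prompt, model, status in observations:
        grid[prompt][model] = status
    return grid


def find_gaps(grid: dict[str, dict[str, str]]) -> tuple[list[str], list[str]]:
    """Model gaps: models that never surface you. Prompt gaps: prompts where no model does."""
    models = sorted({m for row in grid.values() for m in row})
    model_gaps = [m for m in models
                  if not any(row.get(m) in VISIBLE for row in grid.values())]
    prompt_gaps = [p for p, row in grid.items()
                   if not any(status in VISIBLE for status in row.values())]
    return model_gaps, prompt_gaps


def competitor_dominates(our_rate: float, their_rate: float, min_gap: float = 0.5) -> bool:
    """Illustrative cutoff: competitor ahead by at least 50 percentage points."""
    return their_rate - our_rate >= min_gap
```

The 80% vs. 10% case from the list above returns `True` from `competitor_dominates(0.10, 0.80)`.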

Next, drill into citation analysis. Which URLs are AI models actually citing when they mention competitors? Are they linking to blog posts, product pages, comparison guides, or third-party reviews? This tells you what content format and angle works.
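A first pass at citation analysis is counting competitor citations by domain and rough content type. This sketch classifies by URL alone, which is only a heuristic; the review-site list and path hints are illustrative assumptions, and accurate classification would require looking at the pages themselves.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative heuristics only: real content-type classification needs page inspection.
REVIEW_SITES = {"g2.com", "capterra.com", "trustradius.com"}
PATH_HINTS = [
    ("/blog/", "blog post"),
    ("/compare", "comparison guide"),
    ("-vs-", "comparison guide"),
    ("/pricing", "product page"),
    ("/product", "product page"),
]


def domain(url: str) -> str:
    return urlparse(url).netloc.lower().removeprefix("www.")


def classify(url: str) -> str:
    """Guess a content type from the domain first, then from the URL path."""
    if domain(url) in REVIEW_SITES:
        return "third-party review"
    path = urlparse(url).path.lower()
    for hint, label in PATH_HINTS:
        if hint in path:
            return label
    return "other"


def citation_breakdown(urls: list[str]) -> tuple[Counter, Counter]:
    """Count cited URLs by domain and by rough content type."""
    return Counter(domain(u) for u in urls), Counter(classify(u) for u in urls)
```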

Finally, use the platform's content gap analysis feature (if available). This shows you prompts where competitors are mentioned but you're not, along with the specific topics and angles their cited content covers. That's your content roadmap.
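If your platform lacks a gap report, or you want to check one, the core logic is short. A sketch assuming each captured response is a dict with `prompt`, `brands` (a set), and `cited_urls`: a prompt counts as a gap only if your brand appears in no model's answer to it while at least one competitor does.

```python
def content_gaps(rows: list[dict], brand: str) -> dict[str, dict[str, set[str]]]:
    """Map each gap prompt to the competitors mentioned and the URLs cited for it."""
    ours = {r["prompt"] for r in rows if brand in r["brands"]}
    gaps: dict[str, dict[str, set[str]]] = {}
    for r in rows:
        others = r["brands"] - {brand}
        if r["prompt"] in ours or not others:
            continue  # we already appear for this prompt, or nobody is mentioned
        entry = gaps.setdefault(r["prompt"], {"competitors": set(), "urls": set()})
        entry["competitors"] |= others
        entry["urls"].update(r["cited_urls"])
    return gaps
```

The cited URLs collected per gap are the starting point for the "what's being cited" review in Step 4.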

Step 4: Turn insights into content that ranks in AI search

Monitoring alone doesn't improve visibility. You need to create content that AI models want to cite. The best platforms (like Promptwatch) include AI writing agents that generate articles, listicles, and comparisons grounded in real citation data.

Here's the workflow:

- Identify a high-value gap: Pick a prompt where competitors are visible but you're not

- Analyze what's being cited: Look at the URLs AI models reference—what topics, formats, and angles do they cover?

- Generate optimized content: Use the platform's AI writer to create an article that addresses the prompt, incorporates missing angles, and matches the citation patterns of successful content

- Publish and track: Publish the content on your site, then monitor whether AI models start citing it in responses to the target prompt

This cycle (find gaps, generate content, track results) is what separates optimization platforms from monitoring-only tools. Most competitors (Otterly.AI, Peec.ai, AthenaHQ) stop at Step 3, the analysis stage: they show you the data but leave you to figure out what to do with it.

Comparison: unified tracking platforms vs manual monitoring

| Approach | Time per prompt | Models covered | Historical data | Citation analysis | Content recommendations |
|---|---|---|---|---|---|
| Manual monitoring | 10-15 min | 1-2 | No | No | No |
| Basic tracking tools | 5 min | 3-5 | Limited | Basic | No |
| Unified platforms | Automated | 10+ | Full history | Deep analysis | Yes |
| Promptwatch | Automated | 10+ | Full history | 880M+ citations | AI content generation |

The difference is stark. Manual monitoring is fine for a quick spot check, but it doesn't scale. Basic tools save time but lack the depth needed for optimization. Unified platforms like Promptwatch close the loop: they show you where you're invisible, explain why, and help you fix it.

What metrics actually matter in multi-model tracking

Not all AI visibility metrics are equally useful. Focus on:

- Mention rate: Percentage of prompts where your brand is mentioned (aim for 30%+ in your niche)

- Citation share: How often your URLs are cited vs competitors (10-20% is strong)

- Prompt coverage: Number of prompts where you appear at least once (track growth over time)

- Model-specific visibility: Which models mention you most/least (identifies platform-specific gaps)

- Sentiment: Whether mentions are positive, neutral, or negative (qualitative but important)

- Position in response: Are you mentioned first, middle, or last? (earlier is better)

Ignore vanity metrics like "total mentions" without context. A brand mentioned 100 times across 1,000 prompts (10% mention rate) is less visible than one mentioned 50 times across 100 prompts (50% mention rate).

Also track traffic attribution if your platform supports it. Some tools (like Promptwatch) offer code snippets or Google Search Console integration to connect AI visibility to actual website traffic. That's the ultimate proof of ROI.

Common mistakes that break multi-model tracking

Even with a unified dashboard, teams make mistakes that undermine their tracking:

- Tracking too many prompts too soon: Start with 20-50 high-value prompts. You can always expand later.

- Ignoring geographical differences: AI models surface different sources in different countries. Track by region if you operate globally.

- Not updating prompts regularly: Buyer intent evolves. Review and refresh your prompt set quarterly.

- Focusing on models your audience doesn't use: If your customers are professionals who spend their time on LinkedIn, prioritize ChatGPT and Perplexity over Grok or Meta AI.

- Treating all mentions as equal: A brief mention at the end of a response is not the same as being the first recommendation with a citation.

- Monitoring without acting: Data is useless if you don't create content to fill the gaps it reveals.

The biggest mistake is treating AI visibility tracking as a reporting exercise instead of an optimization system. The goal isn't to generate dashboards—it's to improve visibility and drive traffic.

How to choose the right unified tracking platform

Not all AI visibility platforms are built the same. When evaluating options, prioritize:

- Model coverage: Does it track all the AI models your audience uses? (10+ is ideal)

- Prompt flexibility: Can you add custom prompts, or are you stuck with fixed lists?

- Citation depth: Does it show which URLs are cited, or just whether you were mentioned?

- Content gap analysis: Does it identify missing topics and angles, or just report data?

- Content generation: Does it help you create optimized content, or leave you to figure it out?

- Traffic attribution: Can you connect AI visibility to actual website traffic and revenue?

- Crawler logs: Does it show when AI models crawl your site and what they read? (Critical for debugging)
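To sanity-check a platform's crawler reports, or to get a basic view without one, you can scan your own server logs. A sketch assuming the standard Apache/Nginx "combined" log format; the user-agent substrings are a small, non-exhaustive list of published AI crawler tokens that you should confirm against each vendor's documentation.

```python
import re
from collections import Counter

# Assumed user-agent substrings for a few AI crawlers; confirm with vendor docs.
AI_CRAWLERS = {
    "GPTBot": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "ClaudeBot": "Anthropic",
    "PerplexityBot": "Perplexity",
}

# "combined" format: ip ident user [time] "METHOD path PROTO" status bytes "referer" "agent"
LOG_RE = re.compile(r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (\S+)[^"]*" \d{3} \S+ "[^"]*" "([^"]*)"')


def crawler_hits(lines: list[str]) -> Counter:
    """Count (vendor, path) pairs for requests whose user agent matches a known AI crawler."""
    hits: Counter = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # skip lines that aren't in combined format
        path, agent = m.group(1), m.group(2)
        for token, vendor in AI_CRAWLERS.items():
            if token in agent:
                hits[(vendor, path)] += 1
                break
    return hits
```

Pages that crawlers never request are unlikely to be cited, which makes this a quick first check when a new article isn't showing up.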

Platforms like Promptwatch check all these boxes. Others (Otterly.AI, Peec.ai, Search Party) are monitoring-only and lack the action layer. A few (Profound, Scrunch) have strong features but come at higher price points and lack capabilities such as Reddit tracking or ChatGPT Shopping.

If you're just starting, look for a platform with a free trial and low entry pricing. Promptwatch offers an Essential plan at $99/month (1 site, 50 prompts, 5 articles) that's enough to prove ROI before scaling up.

The future of multi-model AI visibility tracking

AI search is still early. In 2026, most brands are just starting to track visibility. By 2027-2028, AI visibility will be as standard as SEO rank tracking is today. The platforms that win will be the ones that close the action loop—not just showing you data, but helping you fix the gaps.

Expect to see:

- Real-time tracking: Hourly or near-real-time updates instead of daily or weekly batches

- Predictive analytics: AI models that forecast which prompts will drive traffic before you optimize for them

- Automated content pipelines: Platforms that generate, publish, and track content without manual intervention

- Cross-channel attribution: Connecting AI visibility to not just traffic, but leads, signups, and revenue

- AI model APIs becoming standard: More models offering official APIs for tracking and optimization

The brands that invest in unified tracking now will have a 12-18 month head start over competitors still manually checking ChatGPT in incognito mode. That advantage compounds as AI search adoption grows.

Getting started: your first week of unified AI visibility tracking

Here's a practical 7-day plan to set up multi-model tracking:

Days 1-2: Build your initial prompt set (20-30 prompts). Pull from customer questions, Reddit threads, and "People Also Ask" boxes.

Day 3: Sign up for a unified tracking platform (start with a free trial). Configure models, prompts, and competitors.

Days 4-5: Let the platform collect baseline data. Don't touch anything; just let it run.

Day 6: Review the visibility heatmap. Identify 3-5 high-value gaps where competitors are mentioned but you're not.

Day 7: Use the platform's content gap analysis to understand what's missing. If it has an AI writing agent, generate one optimized article and publish it.

That's it. One week, one article, and you're in the game. Track the prompt for 2-4 weeks to see if AI models start citing your new content. If they do, repeat the cycle. If not, analyze why (wrong angle, missing depth, poor distribution) and adjust.

The key is starting. AI visibility tracking isn't rocket science—it's just a new discipline that most brands haven't adopted yet. The ones that do will own discovery in their niche.