Key takeaways

- No single tool monitors all major AI engines equally well — coverage gaps are common, especially for Claude and Gemini

- The most important distinction in 2026 is between monitoring-only tools and platforms that help you act on what they find

- A practical stack combines a core AI visibility platform, a content optimization layer, and traffic attribution

- Crawler log access is a hidden differentiator — most tools skip it entirely

- Finding that you're invisible in AI responses is only useful if you know what to do next

Why your brand monitoring stack needs a rethink

If you're still relying on Google Alerts and a social listening tool to track brand mentions, you're missing a large and growing slice of where your customers are finding (or not finding) you.

AI search has changed the discovery loop. When someone asks ChatGPT "what's the best project management tool for remote teams?" or asks Perplexity "which CRM should a 10-person startup use?", the responses shape buying decisions. And unlike a Google results page, there's no obvious way to know whether your brand appeared unless you're actively tracking it.

The problem is that the tooling landscape is fragmented. Some platforms track ChatGPT well but barely touch Claude. Others cover Google AI Overviews but miss Perplexity. A few do broad coverage but give you no way to improve what you find. Building the right stack means understanding what each layer does — and where the gaps are.

The three layers of an effective AI monitoring stack

Before diving into specific tools, it helps to think about the stack in three layers:

- Visibility tracking — Which AI engines mention your brand, in what context, and how often?

- Gap analysis and content optimization — Where are competitors visible but you're not? What content would close those gaps?

- Traffic attribution — Is AI-driven visibility actually sending people to your site? Which pages?

Most teams in 2026 have layer one covered to some degree. Layers two and three are where the real differentiation happens.

Layer 1: Core AI visibility tracking

What to look for in a tracking tool

Coverage is the obvious starting point — you want a tool that queries ChatGPT, Claude, Gemini, Perplexity, and ideally a few others (Grok, DeepSeek, Copilot) on a regular basis. But coverage alone isn't enough. The questions being asked matter enormously. A tool that tracks 50 generic prompts will miss the specific queries your customers are actually typing.

Look for:

- Customizable prompt sets (not just fixed templates)

- Prompt volume and difficulty scoring, so you can prioritize

- Sentiment tracking (is the mention positive, neutral, or negative?)

- Competitor comparison, so you can see share of voice
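To make share of voice concrete, here is a minimal Python sketch. It assumes you already have the raw text of AI responses for your tracked prompts. The brand names, the sample responses, and the `share_of_voice` helper are all hypothetical, and simple case-insensitive matching stands in for whatever entity detection a real tool uses.

```python
import re
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Return the fraction of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match; a real tool would also handle aliases.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical responses collected for one prompt across several engines.
sample_responses = [
    "For remote teams, Acme Boards and TaskPilot are both solid choices.",
    "Many startups pick TaskPilot for its simple pricing.",
    "Popular options include Trellis, TaskPilot, and acme boards.",
]

print(share_of_voice(sample_responses, ["Acme Boards", "TaskPilot", "Trellis"]))
```

In this made-up sample, TaskPilot appears in every response while Trellis appears in one of three, which is the kind of gap a competitor comparison view is meant to surface.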

Tools worth knowing

Promptwatch sits at the top of the category in 2026, tracking 10 AI models including ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Copilot, Meta AI, Mistral, and Google AI Overviews. What separates it from most trackers is that it doesn't stop at monitoring — it feeds visibility data directly into content gap analysis and an AI writing agent. More on that in layer two.

For teams that want a focused, lightweight tracker, a few other options are worth considering:

Otterly.AI is one of the more accessible entry points — it covers four core AI engines with add-ons for more, starts at $29/month, and earned a Gartner Cool Vendor mention in 2025. It's a solid choice if you're just getting started and need basic mention tracking without a large budget commitment.

Peec AI takes a more flexible approach, letting you add AI models as add-ons rather than locking you into a fixed set. It covers up to 10 models depending on your plan and has unlimited seats, which makes it appealing for larger teams.

Profound is worth a look for enterprise teams that need deep research capabilities. It has strong prompt volume data and tracks up to 10 AI engines, though it sits at a higher price point.

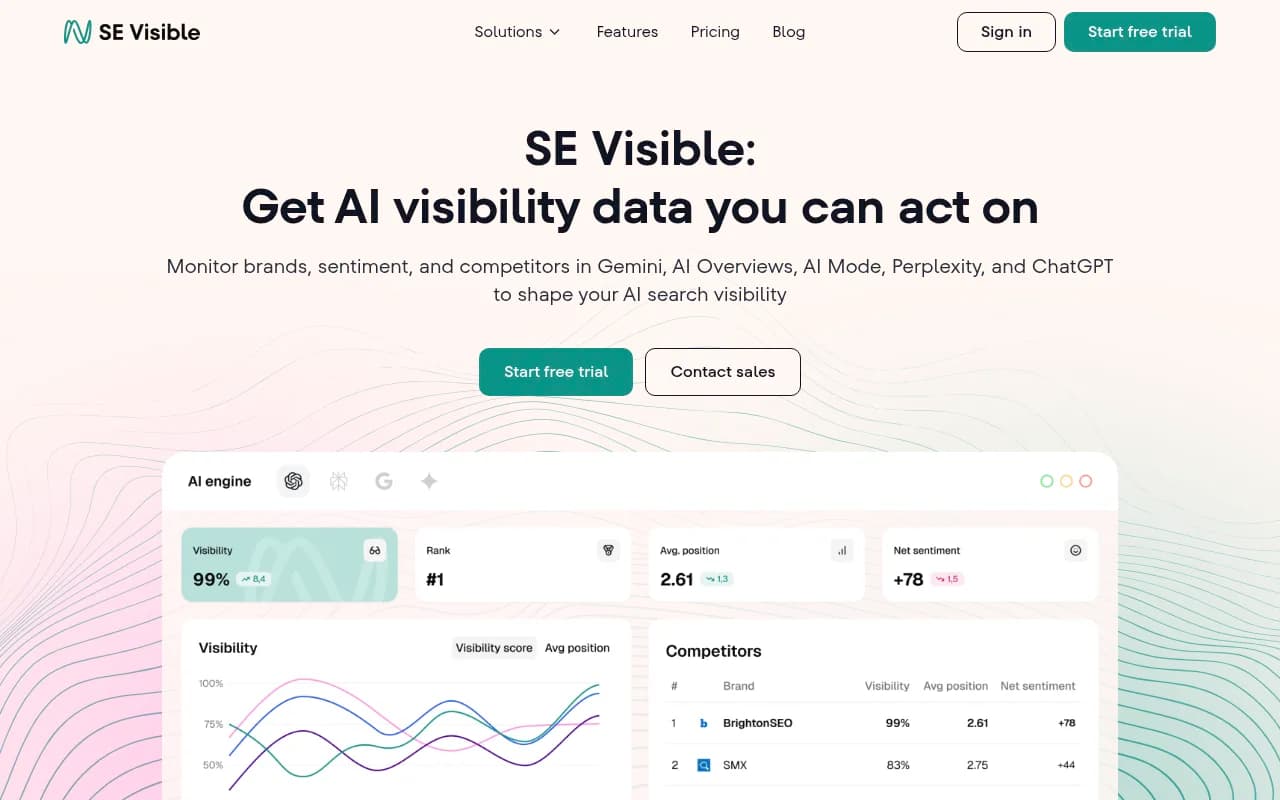

For teams already invested in SE Ranking's SEO platform, SE Visible adds AI visibility tracking across five engines with multi-brand dashboards and unlimited seats.

The Claude and Gemini coverage problem

Here's something that doesn't get talked about enough: Claude coverage is genuinely harder than ChatGPT coverage. Claude's API has different rate limits and response patterns, and several tools that claim "multi-model" tracking quietly deprioritize it. Before committing to any platform, ask specifically how often it queries Claude and whether the prompts are identical across models.

Gemini has its own quirks: Google AI Overviews and Gemini (the standalone model) behave differently, and tools that conflate them give you misleading data. Platforms that track Gemini, Google AI Mode, and Google AI Overviews as separate targets are more useful here.
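If a platform lets you export its raw query history, you can check coverage yourself rather than taking "multi-model" at face value. The sketch below assumes a hypothetical export of `(engine, prompt)` pairs, one per query the tool ran. The `coverage_report` helper and the sample data are illustrative, not any vendor's format.

```python
from collections import defaultdict


def coverage_report(runs):
    """Summarize a list of (engine, prompt) pairs, one per tracked query.

    Returns per-engine query counts and, for each engine, the prompts
    that other engines received but this one never did.
    """
    counts = defaultdict(int)
    prompts_by_engine = defaultdict(set)
    for engine, prompt in runs:
        counts[engine] += 1
        prompts_by_engine[engine].add(prompt)
    all_prompts = set().union(*prompts_by_engine.values()) if prompts_by_engine else set()
    missing = {engine: sorted(all_prompts - seen) for engine, seen in prompts_by_engine.items()}
    return dict(counts), missing


# Hypothetical export: ChatGPT is queried more often and with more prompts than Claude.
runs = [
    ("ChatGPT", "best crm"),
    ("ChatGPT", "best crm"),
    ("ChatGPT", "crm pricing"),
    ("Claude", "best crm"),
]

counts, missing = coverage_report(runs)
print(counts)   # how often each engine was queried
print(missing)  # prompts each engine never received
```

An engine with a low count or a long "missing" list is one the tool is quietly deprioritizing.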

Layer 2: Gap analysis and content optimization

This is where most monitoring stacks fall short. Knowing you're invisible in AI responses is frustrating. Knowing why and what to do about it is actually useful.

Answer gap analysis

The core concept: your competitors are being cited by ChatGPT and Claude for certain prompts, and you're not. Why? Usually because the AI model can't find a clear, authoritative answer to that question on your website. The content simply doesn't exist, or exists in a form that AI crawlers can't easily parse.

A good gap analysis tool surfaces the specific prompts where competitors have visibility and you don't — not as a vague "content opportunity" but as a concrete list of questions your site needs to answer.

Promptwatch calls this Answer Gap Analysis, and it's one of the more practically useful features in the category. You see the exact prompts, the competitors who are winning them, and what kind of content would likely close the gap.
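At its simplest, a gap list is a set difference: prompts where at least one competitor is cited and you are not. The following Python sketch shows that idea under stated assumptions. The `answer_gaps` function, the brand names, and the data shape are hypothetical, and this is not a description of how Promptwatch or any other platform computes it.

```python
def answer_gaps(citations: dict[str, set[str]], own_brand: str) -> dict[str, list[str]]:
    """Map each prompt where competitors are cited but own_brand is not
    to the sorted list of competitors cited for it."""
    gaps = {}
    for prompt, cited in citations.items():
        competitors = cited - {own_brand}
        if own_brand not in cited and competitors:
            gaps[prompt] = sorted(competitors)
    return gaps


# Hypothetical tracking results: prompt -> brands cited in AI responses.
tracked = {
    "best CRM for a 10-person startup": {"RivalCRM", "OtherCRM"},
    "CRM with built-in invoicing": {"YourBrand", "RivalCRM"},
    "how to migrate CRM data": set(),
}

for prompt, winners in answer_gaps(tracked, "YourBrand").items():
    print(f"{prompt!r}: cited competitors = {winners}")
```

Prompts where nobody is cited are left out on purpose: they may be opportunities, but they are not gaps relative to a competitor.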

AI-optimized content generation

Once you know what's missing, you need to create it. This is where a lot of teams hit a wall — they have the insight but not the bandwidth to act on it.

A few platforms have built content generation directly into the visibility workflow:

AirOps is strong here. It combines content workflow automation with citation tracking, so you can build content pipelines that are specifically designed to get cited by AI models rather than just rank in Google.

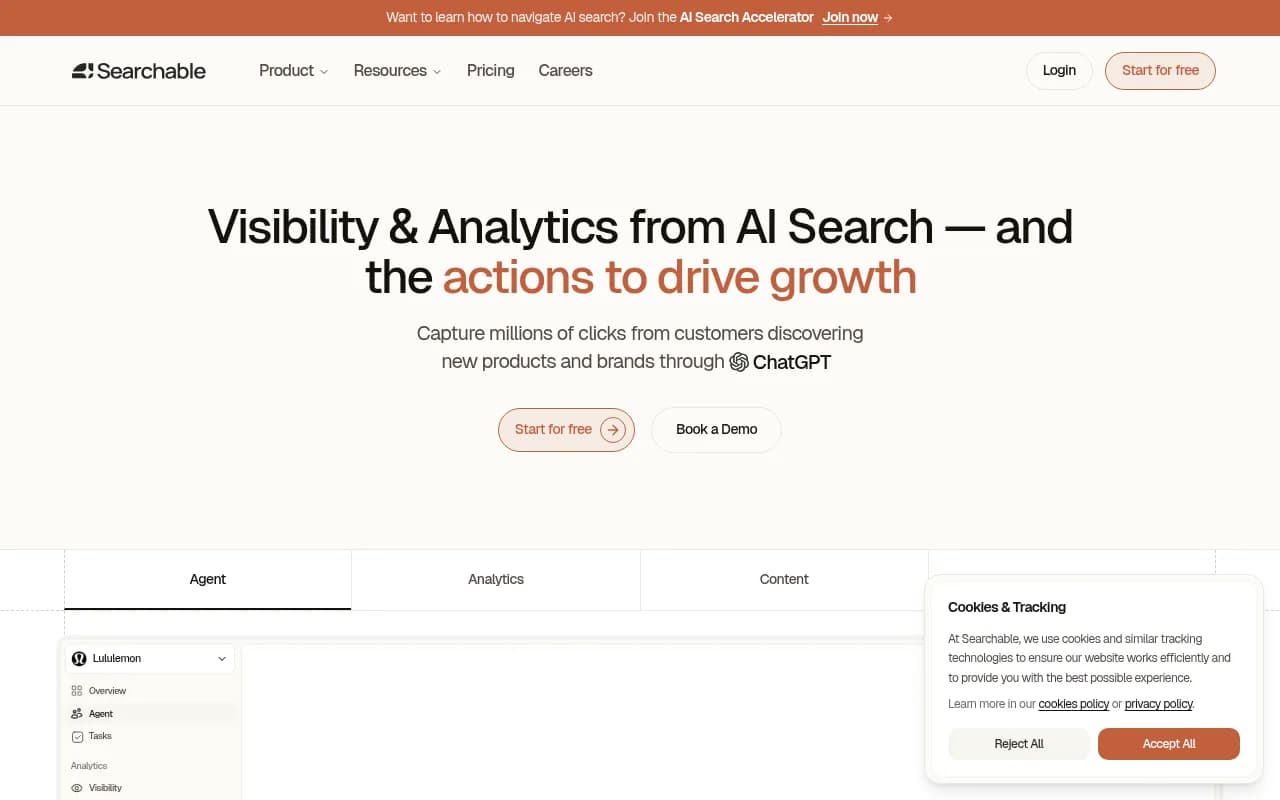

Searchable takes an end-to-end approach, covering up to seven AI engines and including content generation as part of the platform.

Rankshift is worth a look for teams that want a tighter focus on ChatGPT and Perplexity visibility with content recommendations built in.

The key thing to understand about AI-optimized content is that it's not the same as SEO content. AI models cite sources that directly and clearly answer specific questions. Long-form pillar pages that bury the answer in paragraph 12 don't perform well. Concise, structured, question-answering content does.
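One rough way to spot a buried answer is to check how far down the page it first appears. The sketch below is a heuristic only: it assumes paragraphs are separated by blank lines and that you can name a few key terms the answer must contain. The `first_answer_paragraph` helper and the sample page are hypothetical.

```python
from typing import Optional


def first_answer_paragraph(page_text: str, key_terms: list[str]) -> Optional[int]:
    """Return the 1-based index of the first paragraph containing every key term,
    or None if no paragraph contains them all."""
    paragraphs = [p.strip() for p in page_text.split("\n\n") if p.strip()]
    for index, paragraph in enumerate(paragraphs, start=1):
        lowered = paragraph.lower()
        if all(term.lower() in lowered for term in key_terms):
            return index
    return None


# Hypothetical page where the pricing answer only shows up in the third paragraph.
page = (
    "Our story began in a small garage.\n\n"
    "We care deeply about our customers.\n\n"
    "Pricing starts at $20 per user per month, billed annually."
)

print(first_answer_paragraph(page, ["pricing", "per user"]))  # prints 3
```

A high number, or `None`, suggests the page needs a direct answer near the top.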

Layer 3: Traffic attribution

This is the layer most teams skip, and it's the one that connects AI visibility to actual business outcomes.

The challenge: when someone reads an AI response that mentions your brand and then visits your site, that visit often shows up as direct traffic in Google Analytics. In many cases there's no UTM parameter and no referral header, so the connection between AI mention and website visit is invisible unless you're specifically looking for it.

How to close the attribution loop

There are a few approaches:

Server log analysis is the most reliable source of data, with one caveat: it shows what AI crawlers read, not what human visitors do. AI crawlers (GPTBot, ClaudeBot, PerplexityBot, etc.) leave traces in your server logs when they read your pages. Analyzing these logs tells you which pages AI engines are actively reading, how often, and whether they're encountering errors. This is genuinely useful data that most teams don't have access to.
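If you have raw access logs, a first pass at this analysis takes only a few lines. The sketch below assumes logs in the common Combined Log Format and matches only the three user-agent tokens named above. The sample log lines, including their user-agent strings, are made up for illustration, and `summarize_ai_crawls` is a hypothetical helper.

```python
import re
from collections import Counter

# User-agent tokens named in the text above; extend as needed.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined Log Format:
# ip ident user [time] "METHOD path proto" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits and error responses (status >= 400) per (crawler, path) pair."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        crawler = next((t for t in AI_CRAWLER_TOKENS if t.lower() in agent), None)
        if crawler is None:
            continue  # not one of the AI crawlers we are looking for
        key = (crawler, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Illustrative log lines; real user-agent strings will differ.
sample_log = [
    '203.0.113.5 - - [12/Jan/2026:10:00:01 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.2"',
    '203.0.113.9 - - [12/Jan/2026:10:02:17 +0000] "GET /old-guide HTTP/1.1" 404 310 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [12/Jan/2026:10:03:44 +0000] "GET /pricing HTTP/1.1" 200 5120 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits, errors = summarize_ai_crawls(sample_log)
print("hits:", dict(hits))
print("errors:", dict(errors))
```

Matching on the user-agent string alone is easy to spoof, so treat the counts as a starting point rather than verified crawler traffic.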

Promptwatch includes AI crawler log monitoring as a feature in its Professional and Business plans — you get real-time logs of which AI crawlers are hitting which pages. Most competitors don't offer this at all.

GSC integration helps you understand which pages are getting traffic that correlates with AI visibility improvements. It's indirect but useful as a cross-reference.

JavaScript snippet tracking can capture some AI-referred visits by detecting patterns in how users arrive and behave.
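When a referrer or a `utm_source` value does survive the click, the classification a snippet performs in the browser is simple enough to sketch server-side in Python. The hostname list below is an assumption to verify against your own traffic, and `classify_visit` is a hypothetical helper rather than any platform's actual logic.

```python
from urllib.parse import parse_qs, urlparse

# Assumed mapping of hostnames to AI engines; verify against your own traffic.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_visit(landing_url: str, referrer: str = "") -> str:
    """Label a visit as coming from an AI engine, another referrer, or direct."""
    utm_source = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0].lower()
    referrer_host = (urlparse(referrer).hostname or "").lower()
    # Check the utm_source value first, then the referrer hostname.
    for candidate in (utm_source, referrer_host):
        for known_host, engine in AI_REFERRER_HOSTS.items():
            if candidate == known_host or candidate.endswith("." + known_host):
                return engine
    return "other referrer" if referrer_host else "direct"


print(classify_visit("https://example.com/pricing?utm_source=chatgpt.com"))
print(classify_visit("https://example.com/", "https://www.perplexity.ai/search?q=crm"))
print(classify_visit("https://example.com/"))
```

Visits that arrive with neither signal still land in "direct", which is why this approach captures only some AI-referred traffic.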

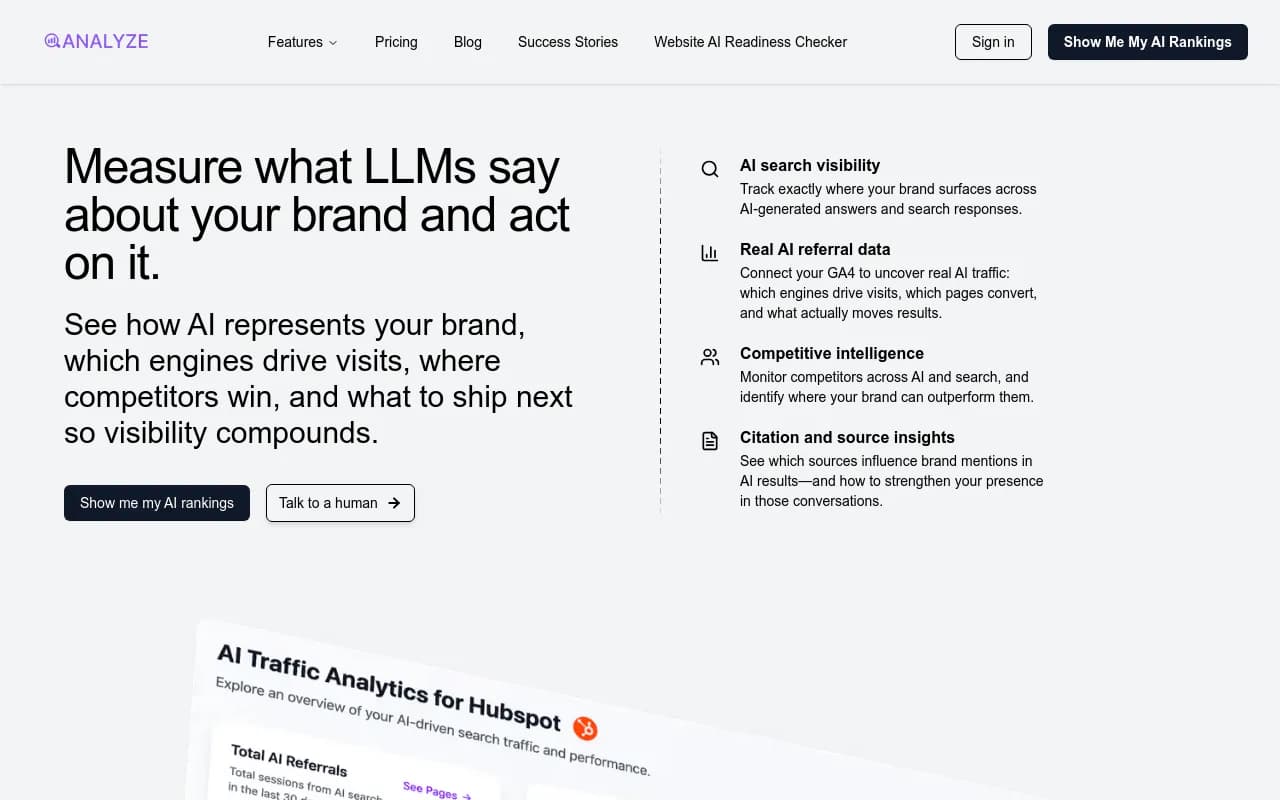

For teams that need deeper attribution, Analyze AI specifically focuses on connecting AI search visibility to real traffic data.

Comparison: how the main tools stack up

| Tool | AI engines covered | Content generation | Crawler logs | Traffic attribution | Starting price |
|---|---|---|---|---|---|
| Promptwatch | 10 | Yes (built-in AI writer) | Yes | Yes (GSC, logs, snippet) | $99/mo |
| Profound | Up to 10 | No | No | Limited | $99/mo |
| Otterly.AI | 4 base + add-ons | No | No | No | $29/mo |
| Peec AI | Up to 10 | No | No | No | €85/mo |
| SE Visible | 5 | No | No | No | $99/mo |
| AirOps | Up to 4 | Yes (workflow-based) | No | Limited | Free tier |
| Searchable | Up to 7 | Yes | No | Limited | Custom |
| Rankshift | 3 (ChatGPT, Perplexity focus) | Recommendations | No | No | Varies |

The pattern is clear: most tools cover layer one reasonably well. Layers two and three are where the field thins out fast.

Building your stack: three practical configurations

Configuration 1: Getting started (under $100/month)

If you're new to AI visibility tracking and want to understand the basics before committing to a larger platform:

- Start with Otterly.AI for basic ChatGPT and Perplexity tracking

- Add Google Search Console to monitor traffic changes as a proxy for AI visibility improvements

- Use AirOps free tier for content workflow experiments

This won't give you full coverage, but it will tell you whether AI visibility is a meaningful channel for your brand before you invest more.

Configuration 2: Mid-market team ($200-600/month)

For marketing teams that have confirmed AI search is driving (or could drive) meaningful traffic:

- Promptwatch Professional ($249/mo) as the core platform — covers 10 engines, includes Answer Gap Analysis, AI content generation, crawler logs, and traffic attribution

- SE Ranking for traditional SEO monitoring alongside AI tracking

This combination gives you full-stack AI visibility with the traditional SEO layer that still matters for feeding AI models in the first place.

Configuration 3: Agency or multi-brand setup

For agencies managing multiple clients or brands with complex AI visibility needs:

- Promptwatch Business or Agency plan for multi-site tracking, white-label reporting, and API access

- Profound as a secondary research layer for enterprise clients who need deep prompt volume data

- AirOps for content production at scale

What most guides don't tell you about AI brand monitoring

A few things worth knowing that tend to get glossed over:

Prompt design matters more than most tools admit. If you're tracking generic prompts like "what is [your category]?" you'll get generic data. The prompts that actually matter are the ones your customers type — specific, intent-driven, often conversational. Tools that let you import your own prompt sets from customer research are meaningfully more useful than tools with fixed libraries.

AI models update their knowledge and behavior. A brand that's well-cited by ChatGPT today might drop in six months if the underlying training data shifts or if competitors publish better content. Monitoring needs to be ongoing, not a one-time audit.

Reddit and YouTube influence AI responses more than most people realize. When ChatGPT recommends a brand, it's often because that brand appears in Reddit discussions or YouTube reviews that the model was trained on or retrieves at answer time. Platforms that track these sources (Promptwatch includes Reddit and YouTube insights) give you a more complete picture of why you're visible or invisible.

Hallucination is a real problem. AI models sometimes mention brands inaccurately — wrong pricing, wrong features, wrong context. Monitoring for sentiment and accuracy isn't just about positive mentions; it's about catching misinformation before it spreads.
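Accuracy checks can start small. As one narrow example, the sketch below flags dollar amounts in an AI response that don't match your real price list. It assumes prices are written like `$49` or `$49.00`; amounts with thousands separators or other currencies are out of scope. The `find_price_mismatches` helper, the brand, and the prices are hypothetical.

```python
import re


def find_price_mismatches(response: str, known_prices: set[str]) -> list[str]:
    """Return dollar amounts mentioned in the response that are not in known_prices."""
    # Matches amounts like $49 or $49.00; does not handle commas or other currencies.
    mentioned = re.findall(r"\$\d+(?:\.\d{2})?", response)
    return [price for price in mentioned if price not in known_prices]


# Hypothetical AI response and the brand's actual price points.
ai_response = "YourBrand starts at $49/month, with a Pro plan at $99/month."
actual_prices = {"$29", "$99"}

print(find_price_mismatches(ai_response, actual_prices))  # prints ['$49']
```

A flagged amount is a prompt for human review, not proof of a hallucination, since the response may be quoting a competitor's price or an old plan.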

The action loop: from monitoring to results

The brands getting real value from AI visibility in 2026 aren't just watching dashboards. They're running a continuous loop:

- Track which prompts they're visible for and which they're not

- Identify the specific content gaps that explain the invisibility

- Create content that directly addresses those gaps

- Watch crawler logs to confirm AI engines are reading the new content

- Track visibility scores and traffic to measure impact

- Repeat

This is the difference between AI brand monitoring as a reporting exercise and AI brand monitoring as a growth channel. The tools that support the full loop — rather than just step one — are the ones worth building your stack around.

Promptwatch is currently the only platform rated as a "Leader" across all categories in independent comparisons of generative engine optimization (GEO) tools, specifically because it supports this full loop rather than stopping at monitoring.

Final thought

The AI search landscape in 2026 is not a single channel — it's ten different models with different training data, different citation behaviors, and different user bases. Full coverage means tracking all of them, understanding why you appear or don't, and having a clear path to improving what you find.

Start with a platform that covers the engines your customers actually use, make sure it gives you actionable gap data (not just a dashboard), and build the attribution layer so you can connect visibility to revenue. The monitoring part is table stakes. The optimization part is where the work actually happens.