Key takeaways

- Only 41% of marketers say they can demonstrate ROI from AI tools in 2026, down from 49% the year before, according to a report cited by Forbes.

- The core problem isn't the tools — it's that most teams measure activity (posts published, words generated, hours saved) instead of outcomes (pipeline, revenue, visibility).

- 75% of marketing teams have no AI roadmap, meaning tools get used in isolation rather than as part of a connected system.

- AI content that doesn't get discovered by AI search engines is invisible to a growing share of buyers — and most teams have no way to track this.

- Fixing the ROI problem requires three things: better attribution, content that targets AI-discoverable prompts, and a feedback loop that connects output to results.

There's an awkward conversation happening in marketing departments right now. Someone spent real money on AI writing tools. The team is using them. Content is going out. And when the CFO asks what it's producing, the honest answer is: we're not totally sure.

This isn't a niche problem. According to a Forbes piece from March 2026, only about 41% of marketers say they can demonstrate ROI from their AI investments — and that number has dropped from 49% the year before. So confidence is going in the wrong direction, even as spending goes up.

What's going wrong? And more importantly, what does a team that actually proves ROI do differently?

The tool adoption trap

Here's the pattern I keep seeing: a team adopts a handful of AI writing tools, output volume increases, and everyone feels productive. Then quarter-end arrives and the numbers don't move.

The problem is that adoption got confused with results. Salesforce's 2026 State of Marketing report found that 75% of marketers have now adopted AI. But Gartner found that fewer than one in four marketing leaders say their team has fully integrated AI into daily workflows. That gap between "we have the tools" and "the tools are working together" is where ROI disappears.

Using ChatGPT for a caption, an AI writer for a blog post, and a grammar tool for email is not a system. It's three isolated tasks that save a few minutes each and compound into nothing. The content gets published, the hours-saved metric looks good in a report, and the revenue line doesn't budge.

One statistic puts this bluntly: 83% of marketers have not received detailed AI training. So teams are using powerful tools without a clear model for how those tools connect to business outcomes. That's not a technology failure. It's an architecture failure.

The measurement problem nobody wants to talk about

Most teams measure AI content performance the same way they measured content before AI: pageviews, time on page, social shares, maybe keyword rankings. These metrics aren't wrong, but they're incomplete in 2026 in a way they weren't in 2022.

Here's what's changed. A growing share of buyer research now happens inside AI search engines — ChatGPT, Perplexity, Google AI Overviews, Gemini. When someone asks an AI "what's the best project management tool for remote teams" or "how do I reduce customer churn," they get an answer with citations. If your content isn't in those citations, you're invisible to that buyer. And most analytics dashboards have no way to capture this.

So a team can publish 50 AI-generated articles, watch their Google Analytics traffic stay flat, and conclude the content isn't working — when the real problem is that the content isn't being discovered by AI engines at all. Or the reverse: traffic is growing from AI referrals, but nobody knows because the attribution isn't set up.

This is a genuinely new measurement gap, and it's one reason the ROI numbers are sliding. The tools are generating content faster than ever. The measurement infrastructure hasn't caught up.
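One way to start closing that gap is to tag sessions by referrer. The sketch below is a minimal illustration, not a complete attribution setup: the hostname list is my own assumption and is certainly not exhaustive, and `classify_referrer` and `count_ai_referrals` are hypothetical helper names. Traffic from Google AI Overviews generally arrives with an ordinary Google referrer, so this approach is unlikely to separate it from regular search.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed, non-exhaustive mapping of referrer hostnames to AI engines.
# Extend it with whatever hostnames show up in your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url: str) -> str:
    """Return the AI engine name for a referrer URL, or 'other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")


def count_ai_referrals(referrer_urls):
    """Count sessions per AI engine from an iterable of referrer URLs."""
    return Counter(classify_referrer(url) for url in referrer_urls)


if __name__ == "__main__":
    sample = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=crm",
        "https://www.google.com/",
        "",  # direct traffic with no referrer
    ]
    print(count_ai_referrals(sample))
```

Even a rough count like this tells you whether AI referrals exist in your traffic at all, which is more than most dashboards show by default.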

Why "efficiency" is the wrong metric

The most common way teams justify AI writing tools is time savings. "We used to spend 8 hours on a blog post, now it takes 2." That's real. But it's not ROI.

ROI requires connecting spend to revenue. If you saved 6 hours per article but the articles don't generate leads, don't rank, and don't get cited by AI engines, you've made the production of ineffective content more efficient. That's not a win.

Dr. Bin Tang put it well in his Forbes piece: "Efficiency isn't valuable unless it drives measurable business outcomes. Saving hours on social media posting is great, until you realize those hours saved didn't move revenue, reduce churn or increase customer lifetime value."

The fix isn't to stop measuring efficiency — it's to add a second layer of measurement that tracks what the content actually does after it's published. Does it rank? Does it get cited? Does it drive traffic? Does that traffic convert?
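To make the difference between efficiency and ROI concrete, here is a small worked example. The 6 hours saved per article comes from the scenario above; the article count, hourly cost, tool cost, and revenue figures are hypothetical numbers chosen only to show the arithmetic, and the function names are mine.

```python
def hours_saved_value(hours_saved_per_article: float, articles: int,
                      hourly_cost: float) -> float:
    """Dollar value of time saved. This is an efficiency metric, not ROI."""
    return hours_saved_per_article * articles * hourly_cost


def content_roi(attributed_revenue: float, total_cost: float) -> float:
    """ROI as (revenue - cost) / cost, where revenue is attributed to the content."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (attributed_revenue - total_cost) / total_cost


if __name__ == "__main__":
    # Hypothetical month: 20 articles, 6 hours saved on each, $75 per hour.
    print(hours_saved_value(6, 20, 75.0))   # 9000.0

    # Hypothetical cost: $500 tool licence + 2 hours x 20 articles x $75.
    total_cost = 500 + 2 * 20 * 75.0        # 3500.0

    # If no revenue can be attributed, ROI is -100% despite the savings.
    print(content_roi(0, total_cost))       # -1.0

    # If $10,500 of revenue is attributed, ROI is 200%.
    print(content_roi(10_500, total_cost))  # 2.0
```

The first number looks great in a report either way. Only the second calculation answers the CFO's question, and it can't be computed at all without attribution data.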

The content saturation problem making this worse

There's a compounding issue: 29% of marketers say AI content saturation is their biggest concern for 2026, ranking it above ROI pressure, budget cuts, and measurement challenges. That's a significant signal.

When everyone uses the same AI tools to write about the same topics, the output converges. Articles start to look alike. The differentiation that used to come from research, original perspective, and editorial judgment gets squeezed out. And undifferentiated content doesn't rank, doesn't get cited, and doesn't convert.

This is why the ROI problem and the content quality problem are the same problem. Teams optimizing for volume — more posts, faster production, lower cost per word — are often producing content that performs worse than less frequent, more considered pieces would.

The teams that are seeing ROI from AI content tools aren't using them to replace thinking. They're using them to execute faster on a strategy that was already sound.

What a working system actually looks like

The teams that can demonstrate ROI from AI content tools tend to share a few characteristics.

They define what ROI means before they spend. Not "we'll track engagement" but "we expect this content to generate X leads per month within 90 days, and here's how we'll measure it." That sounds obvious, but most teams skip it.

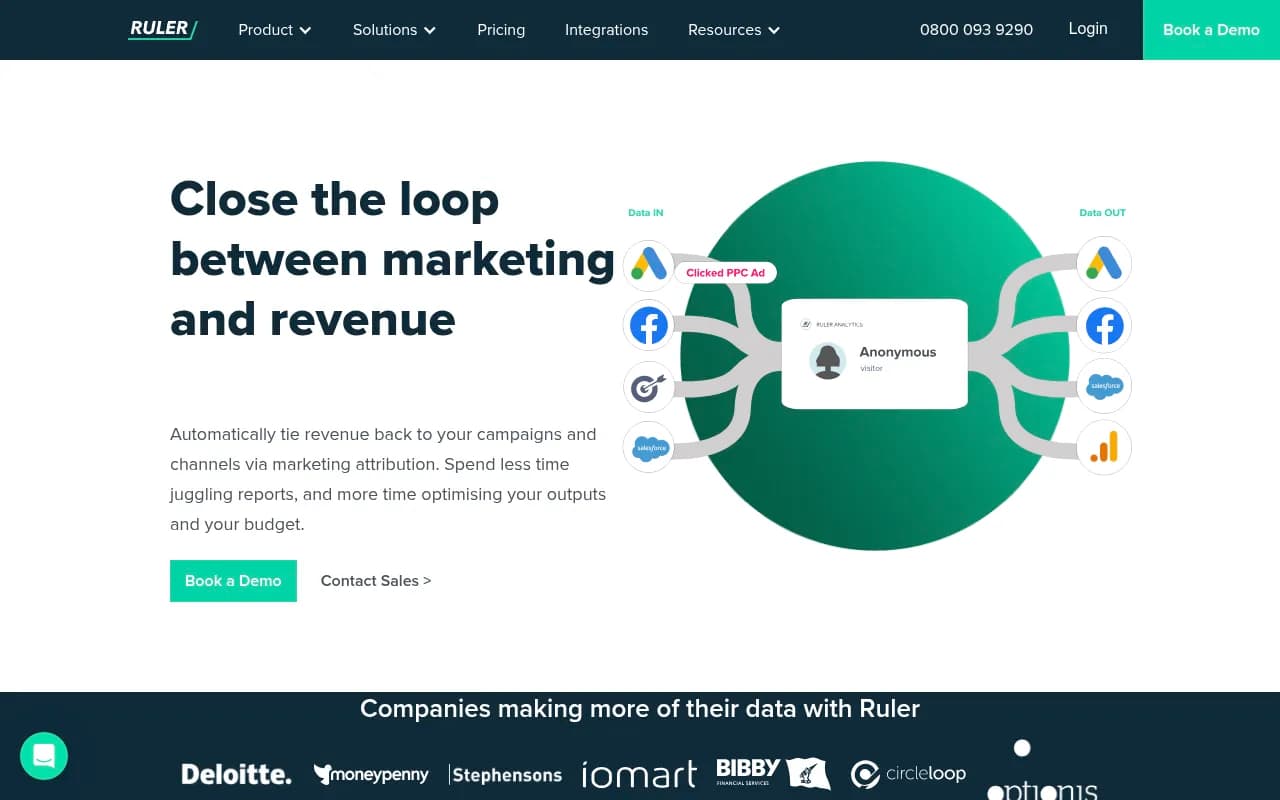

They connect content to revenue attribution. Tools like Ruler Analytics or Dreamdata can close the loop between a piece of content and a closed deal. Without this, you're guessing.

They track AI search visibility, not just Google rankings. This is the piece most teams are missing entirely. If your content isn't being cited by ChatGPT, Perplexity, or Google AI Overviews, you're losing a channel that's growing fast. Promptwatch is built specifically for this — it tracks which prompts your brand appears in across 10+ AI engines, shows you where competitors are visible and you're not, and includes content generation tools to close those gaps.

They treat content gaps as a prioritization tool. Instead of publishing whatever seems interesting, they identify the specific questions AI engines are answering without citing their brand, then create content that directly addresses those gaps. This turns content strategy from guesswork into something closer to engineering.

The tools worth using (and what to measure with each)

There's no shortage of AI content tools in 2026. The question is whether you're using them as part of a system or as isolated productivity hacks.

Here's a practical breakdown of where different tools fit and what you should be measuring with each:

| Tool category | Example tools | What to measure |
|---|---|---|
| AI writing and drafting | Jasper, Writer, Copy.ai | Content output rate, editorial revision time, content quality scores |
| SEO content optimization | Surfer SEO, Clearscope, MarketMuse | Keyword rankings, organic traffic, topical authority scores |
| AI search visibility | Promptwatch, AthenaHQ, Profound | Citation rate in AI engines, prompt coverage, share of voice vs competitors |
| Revenue attribution | Ruler Analytics, Dreamdata, HockeyStack | Pipeline influenced, revenue attributed, cost per acquisition |
| Content intelligence | MarketMuse, Frase, BuzzSumo | Content gap coverage, topic authority, competitive overlap |

The point isn't to use all of these. It's to have at least one tool in each category so you can connect content creation to content performance to revenue.

The AI search visibility gap most teams are ignoring

This deserves its own section because it's the fastest-growing blind spot in content measurement right now.

Traditional SEO measurement is relatively mature. You publish content, you track rankings in Google Search Console, you watch traffic. The feedback loop is slow but it works.

AI search is different. When someone asks Perplexity "what CRM should I use for a 50-person sales team," Perplexity synthesizes an answer from multiple sources and cites a handful of them. If your brand isn't in those citations, you don't show up — and there's no equivalent of Google Search Console to tell you this is happening.

Most content teams have no visibility into this channel at all. They're measuring Google rankings while a growing share of their potential customers are getting answers from AI engines that never mention them.

Platforms like Promptwatch track exactly this: which prompts your brand appears in, which competitors are getting cited instead of you, and what content you'd need to create to close those gaps. The data is specific — not "you should write about topic X" but "here are the exact prompts where you're invisible and here's what the AI wants to see."

For teams trying to prove content ROI in 2026, AI search visibility is becoming as important to track as organic rankings. Ignoring it means your measurement picture is incomplete by definition.
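If you want to see what these visibility metrics look like in practice, the sketch below computes a citation rate and a share of voice from a hand-collected prompt audit. The definitions are my assumptions for illustration; commercial platforms may calculate these differently, and `PromptResult`, the brand names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    """One prompt run against one AI engine, with the brands it cited."""
    prompt: str
    engine: str
    cited_brands: frozenset


def citation_rate(results, brand: str) -> float:
    """Fraction of prompt runs in which the brand was cited."""
    if not results:
        return 0.0
    return sum(brand in r.cited_brands for r in results) / len(results)


def share_of_voice(results, brand: str, competitors) -> float:
    """Brand citations divided by all citations of the brand plus competitors."""
    tracked = set(competitors) | {brand}
    total = sum(len(r.cited_brands & tracked) for r in results)
    if total == 0:
        return 0.0
    return sum(brand in r.cited_brands for r in results) / total


if __name__ == "__main__":
    audit = [
        PromptResult("best crm for a 50-person sales team", "Perplexity",
                     frozenset({"Acme", "Rival"})),
        PromptResult("best crm for a 50-person sales team", "ChatGPT",
                     frozenset({"Rival"})),
        PromptResult("how do I reduce customer churn", "Perplexity",
                     frozenset({"Rival", "Other"})),
        PromptResult("how do I reduce customer churn", "ChatGPT",
                     frozenset({"Acme"})),
    ]
    print(citation_rate(audit, "Acme"))              # 0.5
    print(share_of_voice(audit, "Acme", {"Rival"}))  # 0.4
```

Running the same audit on a schedule gives you a baseline and a trend line, which is the closest thing to a Search Console report this channel currently has.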

A practical framework for proving content ROI

If you're trying to fix this problem for your team, here's a framework that actually works:

Step 1: Define your outcome metrics upfront. Before a single piece of content is created, agree on what success looks like. Pipeline generated? Leads from organic? AI citation rate? Pick metrics that connect to revenue, not just activity.

Step 2: Audit your current attribution. Can you trace a closed deal back to the content that influenced it? If not, fix this first. Without attribution, you're flying blind regardless of how good your content is.

Step 3: Map your AI search visibility. Run a baseline audit of which prompts your brand appears in across ChatGPT, Perplexity, and Google AI Overviews. This tells you where the gaps are and gives you a starting point to measure improvement against.

Step 4: Prioritize content by gap size, not by what's easy to write. The highest-ROI content addresses questions where your brand is invisible but your competitors are visible. This is the opposite of how most teams prioritize.

Step 5: Build a feedback loop. Publish content, track what happens to your visibility scores and your attribution data, and adjust. This is what separates teams that improve over time from teams that keep publishing into the void.
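Step 4 is the easiest one to turn into something mechanical. As a rough sketch, assuming you have a list of audited prompts with a flag for whether your brand was cited, a count of competitors cited, and some estimate of prompt volume, you could rank the gaps like this. The scoring rule (competitors cited multiplied by estimated volume) is an illustrative assumption, not an established formula, and `PromptGap` and its fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptGap:
    prompt: str
    brand_cited: bool       # did the AI answer cite your brand?
    competitors_cited: int  # how many competitors it cited
    monthly_volume: int     # hypothetical volume estimate for the prompt


def gap_score(gap: PromptGap) -> int:
    """Illustrative score: more competitors and more volume mean a bigger gap."""
    return gap.competitors_cited * gap.monthly_volume


def prioritize_gaps(gaps):
    """Return prompts where competitors are cited and you are not, biggest first."""
    open_gaps = [g for g in gaps
                 if not g.brand_cited and g.competitors_cited > 0]
    return sorted(open_gaps, key=gap_score, reverse=True)


if __name__ == "__main__":
    audit = [
        PromptGap("best crm for remote sales teams", False, 3, 400),
        PromptGap("how to reduce customer churn", False, 1, 900),
        PromptGap("crm pricing comparison", True, 2, 1200),   # already cited
        PromptGap("what is a sales pipeline", False, 0, 5000),  # nobody cited
    ]
    for gap in prioritize_gaps(audit):
        print(gap_score(gap), gap.prompt)
```

Re-running the audit after each publishing cycle and comparing the list is one simple way to implement the feedback loop in Step 5.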

The honest conclusion

The ROI problem with AI content tools isn't going to be solved by better tools alone. When 42% of AI projects show zero ROI, the cause is measurement and strategy, not technology.

Teams that are winning right now have done two things: they've connected their content output to revenue attribution, and they've expanded their definition of "ranking" to include AI search visibility. Teams that are struggling are still measuring activity instead of outcomes, and they're optimizing for a search landscape that's shifting under their feet.

The tools exist to fix this. The question is whether your team is using them as a system or as a collection of productivity shortcuts.