Key takeaways

- Traditional SEO rank tracking measures URL positions in Google's organic results -- it tells you nothing about how AI models like ChatGPT or Perplexity represent your brand.

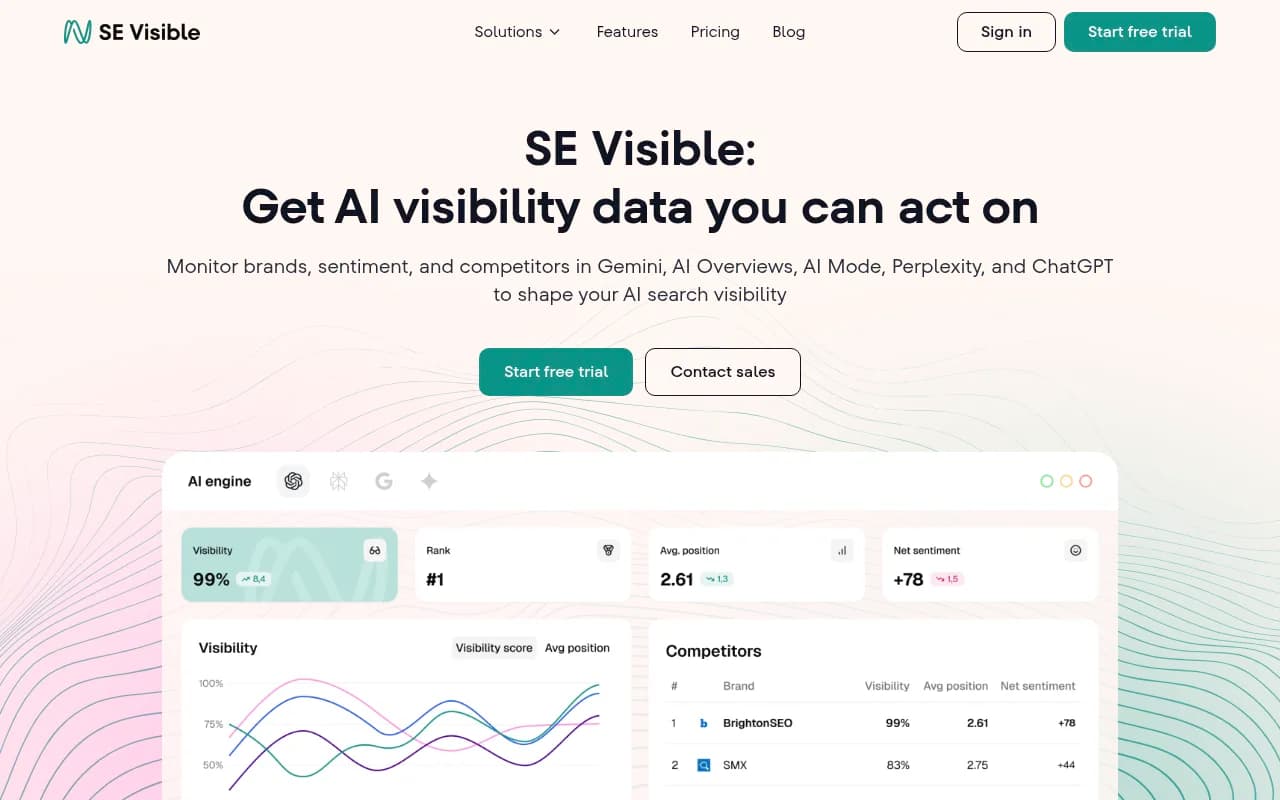

- AI brand mention monitoring tracks whether, how often, and in what context your brand appears inside AI-generated answers across multiple LLMs.

- Brands appear in roughly 90% of Google AI Mode responses, making AI visibility a core metric -- not an optional add-on.

- The two approaches measure fundamentally different things: one measures clicks, the other measures influence and recommendation share.

- Most traditional SEO tools (Semrush, Ahrefs) have added some AI tracking, but purpose-built AI visibility platforms go much deeper.

- If you want to both track and fix your AI visibility, you need a platform that closes the loop from gap analysis to content creation to result tracking.

AI search traffic grew 527% in a single year, according to Semrush's 2026 data. That's not a trend you can afford to monitor with the same tools you've been using since 2018.

For years, SEO rank tracking meant one thing: where does my URL appear in Google's blue links for a given keyword? It was a clean, measurable system. Position 1 was good. Position 11 was bad. You optimized, you tracked, you moved the needle.

That model still works -- for traditional search. But a growing share of search behavior now happens inside AI-generated answers, where there are no "positions" and no page 1. The only question is whether the AI mentions your brand or not.

These are two genuinely different problems, and they require different tools, different strategies, and different success metrics. Here's exactly how they differ.

1. What gets measured: URL position vs. brand mention

Traditional rank tracking is fundamentally about URLs. You enter a keyword, the tool checks where your page ranks in Google's organic results, and you get a number. Position 3. Position 14. That number tells you how visible your page is for that query.

AI brand monitoring is about something else entirely: whether your brand or product is named, and how it is described, inside a conversational AI response. When someone asks ChatGPT "what's the best project management tool for remote teams?", there's no ranking to track. The AI either mentions you or it doesn't. It might describe you accurately or inaccurately. It might recommend you first or bury you in a list of five alternatives.

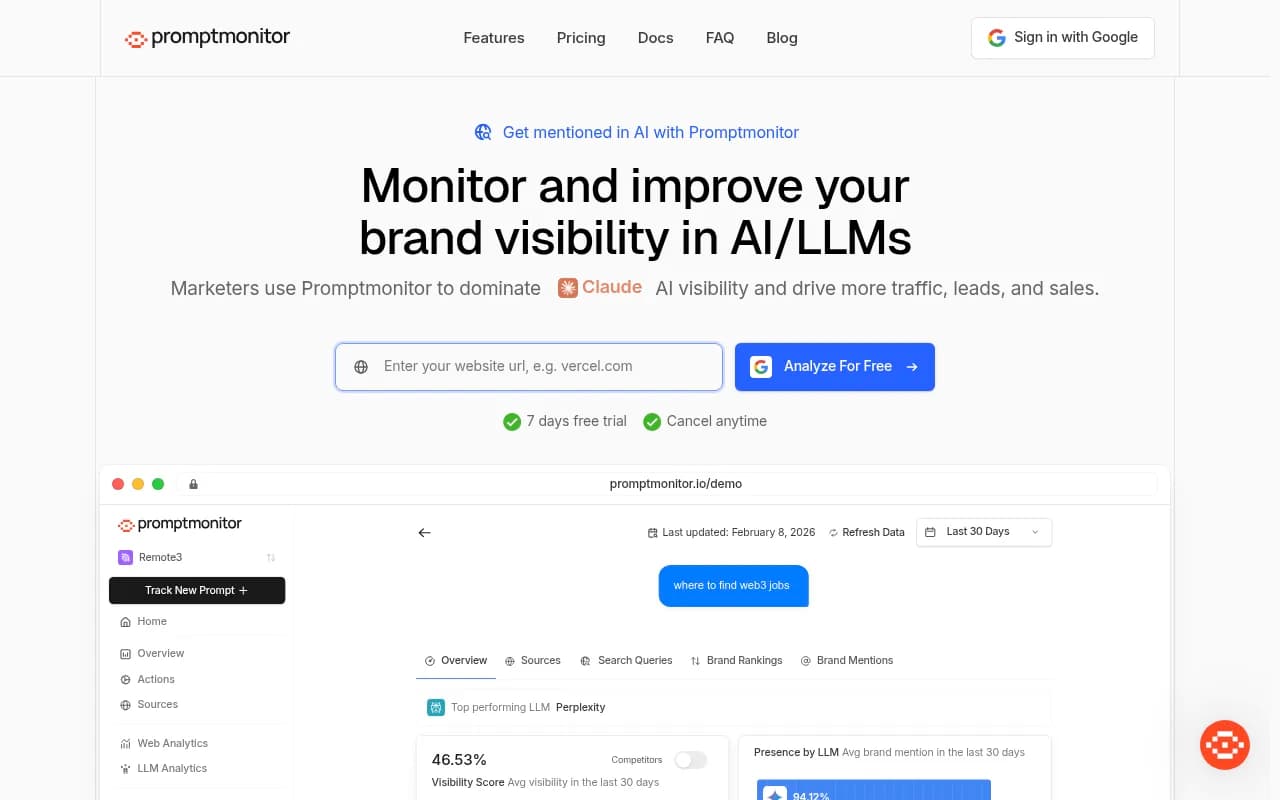

That's a completely different measurement problem. Tools like Promptwatch track brand mentions, sentiment, and placement across 10 AI models simultaneously -- not URL positions.
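To make the measurement concrete, here is a minimal sketch of checking one AI response for a brand and its placement relative to competitors. The function name `analyze_mention`, the brand names, and the sample answer are all hypothetical, and real platforms almost certainly do more than string matching (sentiment and accuracy checks are not covered here).

```python
import re
from typing import List, Optional


def analyze_mention(response: str, brand: str, competitors: List[str]) -> dict:
    """Report whether `brand` appears in one AI response and where it falls
    relative to the competitor names (placement 1 = named first)."""

    def first_index(name: str) -> Optional[int]:
        # Case-insensitive, whole-word match so "Acme" does not match "Acmeville".
        match = re.search(rf"\b{re.escape(name)}\b", response, flags=re.IGNORECASE)
        return match.start() if match else None

    positions = {name: first_index(name) for name in [brand, *competitors]}
    found = {name: idx for name, idx in positions.items() if idx is not None}
    order = sorted(found, key=found.get)  # brands in the order they first appear

    return {
        "mentioned": brand in found,
        "placement": order.index(brand) + 1 if brand in found else None,
        "brands_in_order": order,
    }


# Made-up answer text for illustration only.
answer = "For remote teams, Asana and Trello are popular. Acme is also worth a look."
print(analyze_mention(answer, "Acme", ["Asana", "Trello"]))
```

The output is a mention flag and a placement, not a SERP position, which is the whole point of the distinction.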

2. The data source: Google's index vs. LLM training and retrieval

Traditional rank trackers pull data from Google's search index. They simulate a search query, scrape the SERP, and record where your URL appears. The underlying data is Google's crawl of the web.

AI visibility tools work differently. They query AI models directly -- ChatGPT, Perplexity, Claude, Gemini, Grok, and others -- and analyze the responses. What they're measuring is a combination of what the LLM learned during training and what it retrieves in real time (for models with web access).

This matters because the two data sources can tell completely different stories. You might rank #1 in Google for "best CRM software" but never appear in ChatGPT's answer to that same question. Or vice versa. A brand with modest traditional SEO presence might be heavily cited by AI models because it's frequently mentioned in Reddit threads, review sites, and industry publications that LLMs weight heavily.

Tools like Otterly.AI and Peec AI query AI models directly to capture this data.
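As a rough illustration of "querying models directly", the sketch below runs every prompt against every model and records whether the brand shows up. It does not reflect how any named tool works internally. Each model is represented by a plain function that takes a prompt and returns text; the two stub functions are placeholders for real API clients, and every name here is an assumption.

```python
from typing import Callable, Dict, List

# Hypothetical: a "model" is any function that takes a prompt and returns answer text.
ModelFn = Callable[[str], str]


def collect_mentions(prompts: List[str], models: Dict[str, ModelFn], brand: str) -> List[dict]:
    """Ask every model every prompt and record whether the brand is mentioned."""
    rows = []
    for prompt in prompts:
        for model_name, ask in models.items():
            answer = ask(prompt)
            rows.append({
                "prompt": prompt,
                "model": model_name,
                # Simple case-insensitive substring check.
                "mentioned": brand.lower() in answer.lower(),
            })
    return rows


# Stub models stand in for real API calls; their answers are invented.
def stub_model_a(prompt: str) -> str:
    return "Popular options include Acme CRM and OtherCo."


def stub_model_b(prompt: str) -> str:
    return "Many teams choose OtherCo."


rows = collect_mentions(
    ["best CRM software"],
    {"model_a": stub_model_a, "model_b": stub_model_b},
    brand="Acme",
)
for row in rows:
    print(row)
```

Passing the models in as functions keeps the collection logic independent of any one vendor's client library.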

3. The competitive landscape: SERPs vs. share of model

In traditional SEO, competition is about the top 10 results. You're fighting for one of ten slots on page one. The metric is your position relative to competitors.

In AI search, the competitive frame is "share of model" -- how often does your brand get recommended compared to competitors when AI models answer relevant questions? If ChatGPT mentions your competitor in 80% of responses to your target queries and mentions you in 20%, that's a visibility gap that no amount of traditional rank tracking will surface.

This is why AI visibility platforms show competitor heatmaps and share-of-voice metrics across LLMs. It's a fundamentally different competitive picture.
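Share of model is simple arithmetic once you have the responses. Here is a minimal sketch, assuming you have already collected answer texts for your target prompts; `share_of_model`, the brand names, and the sample answers are illustrative, and substring matching is a deliberate simplification.

```python
from collections import Counter
from typing import Dict, Iterable, List


def share_of_model(responses: Iterable[str], brands: List[str]) -> Dict[str, float]:
    """Fraction of responses that mention each brand at least once."""
    responses = list(responses)
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1  # count each response once per brand
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Five invented answers: the competitor appears in four, your brand in one.
sample_responses = [
    "RivalCo is a strong pick.",
    "RivalCo and Acme both work well.",
    "Consider RivalCo.",
    "RivalCo leads here.",
    "There is no clear winner.",
]
print(share_of_model(sample_responses, ["Acme", "RivalCo"]))
```

With this sample, the competitor lands at 80% and your brand at 20%, the same kind of gap described above.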

4. Keyword intent vs. prompt intent

Traditional SEO is built around keywords. You research search volume, difficulty, and intent for specific keyword phrases. You optimize pages to rank for those keywords.

AI monitoring is built around prompts -- full questions and conversational queries that people type into ChatGPT or Perplexity. "What's the best accounting software for freelancers?" is a prompt, not a keyword. The intent is richer, the context is broader, and the optimization strategy is different.

Prompt intelligence tools can show you volume estimates for specific prompts, difficulty scores, and how a single prompt "fans out" into related sub-queries. This helps you prioritize which prompts to target -- not which keywords to stuff into a page.

5. Traffic attribution: clicks vs. influence

Traditional SEO has a clear attribution model. Someone searches, clicks your link, lands on your site. Google Search Console shows you impressions, clicks, and click-through rate. The connection between rank and traffic is direct.

AI search breaks this model. When ChatGPT recommends your brand in a response, the user might not click anything. They might just act on the recommendation -- visit your site directly, search your brand name, or make a purchase decision based on what the AI said. That influence doesn't show up in your GSC data as a referral from ChatGPT.

This is one of the hardest problems in AI visibility measurement. Some platforms are starting to address it through server log analysis, on-site tracking snippets, and GSC integration to detect AI-influenced traffic patterns. But the attribution gap is real, and it means AI brand mentions have business impact that traditional analytics tools simply don't capture.
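One small piece of this can be sketched: tagging visits whose referrer points at an AI assistant. The hostname list below is an illustrative assumption, not a complete or authoritative mapping. It also only catches users who actually clicked a link, so the direct visits and branded searches described above stay invisible to it.

```python
from typing import Optional
from urllib.parse import urlparse

# Illustrative, incomplete mapping of referrer hostnames to assistants (assumption).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_ai_referrer(referrer: str) -> Optional[str]:
    """Return the assistant name if the referrer URL matches a known host, else None."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for known_host, assistant in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it.
        if host == known_host or host.endswith("." + known_host):
            return assistant
    return None


for ref in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=crm", "https://www.google.com/"]:
    print(ref, "->", classify_ai_referrer(ref))
```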

6. Optimization levers: on-page SEO vs. content engineering for AI

When you want to improve a traditional ranking, you know what to do: improve the page's relevance, build backlinks, fix technical issues, improve Core Web Vitals. The playbook is well-established.

Improving AI brand visibility requires a different approach. AI models cite sources that comprehensively answer questions, appear in authoritative third-party contexts (Reddit, review sites, industry publications), and use clear, factual language that LLMs can extract and summarize. You can't just optimize a meta title.

The content strategy for AI visibility involves identifying which questions AI models are answering without mentioning you (answer gap analysis), then creating content specifically designed to be cited -- articles, comparisons, listicles grounded in the kind of factual, structured information that LLMs prefer.

This is where the gap between monitoring-only tools and full optimization platforms becomes obvious. Most AI tracking tools will show you that you're invisible for certain prompts. Fewer will help you fix it.
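Answer gap analysis boils down to a set comparison: find the prompts where at least one competitor is mentioned and you are not. A minimal sketch follows, assuming you already have the set of brands mentioned per prompt. The data shape, the `answer_gaps` name, and the sample prompts and brands are hypothetical.

```python
from typing import Dict, List, Set


def answer_gaps(
    mentions_by_prompt: Dict[str, Set[str]],
    brand: str,
    competitors: List[str],
) -> Dict[str, List[str]]:
    """Map each gap prompt to the competitors mentioned where `brand` is absent."""
    gaps = {}
    for prompt, mentioned in mentions_by_prompt.items():
        rivals = sorted(c for c in competitors if c in mentioned)
        if brand not in mentioned and rivals:
            gaps[prompt] = rivals
    return gaps


# Invented observations: which brands appeared in answers to each prompt.
observed = {
    "best accounting software for freelancers": {"RivalCo", "OtherCo"},
    "best invoicing tool for small teams": {"Acme", "RivalCo"},
    "how to automate expense reports": set(),
}
print(answer_gaps(observed, "Acme", ["RivalCo", "OtherCo"]))
```

Prompts where nobody is mentioned are left out on purpose, since there is no competitor to displace.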

7. Platform coverage: one engine vs. many models

Traditional rank tracking is almost entirely Google-focused. Some tools track Bing, but Google's ~90% market share makes it the only one that really matters for most businesses.

AI brand monitoring has to cover a much wider surface. ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Claude, Gemini, Grok, DeepSeek, Meta AI, Copilot -- each has different training data, different retrieval mechanisms, and different tendencies to recommend brands. Your visibility can vary wildly across these models.

A brand might be well-represented in Perplexity (which does real-time web retrieval) but largely absent from Claude (which relies more heavily on training data). Monitoring only one model gives you an incomplete picture.

8. The feedback loop: periodic rank checks vs. continuous citation tracking

Traditional rank tracking runs on a schedule. You check rankings daily or weekly, see the trend, and adjust your strategy over time. The feedback loop is slow but predictable.

AI visibility monitoring needs to be more dynamic. AI models update their training data, change their retrieval behavior, and shift their recommendations in ways that aren't tied to a predictable schedule. A piece of content that gets cited heavily today might drop out of AI responses next month. A competitor's new article might suddenly start appearing in responses where you used to dominate.

Page-level citation tracking -- seeing exactly which of your pages are being cited by which AI models, how often, and for which prompts -- is the AI equivalent of rank tracking. But it needs to be paired with crawler log monitoring (which AI bots are actually visiting your site and reading your content?) to close the loop properly.
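Crawler log monitoring can be approximated with a few lines of log parsing. The sketch below assumes access logs in the common "combined" format and counts hits per AI bot and page. The token list is a small illustrative subset, the sample log lines are made up, and user-agent strings can be spoofed, so treat the counts as a signal and not as proof.

```python
import re
from collections import Counter
from typing import Iterable

# Illustrative subset of AI crawler user-agent tokens (assumption, not exhaustive).
AI_BOT_TOKENS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Combined log format: ... "METHOD /path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_bot_hits(lines: Iterable[str]) -> Counter:
    """Count requests per (bot token, path) for lines whose user agent names an AI bot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Made-up log lines using documentation-reserved IP ranges.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:10:02:11 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:05:42 +0000] "GET /blog/crm-guide HTTP/1.1" 200 20480 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - [10/Jan/2026:10:06:03 +0000] "GET /pricing HTTP/1.1" 200 5120 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
for (bot, path), count in ai_bot_hits(SAMPLE_LOG).items():
    print(bot, path, count)
```

Pairing these counts with page-level citation data shows whether the pages AI bots read are also the pages that end up cited.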

How the two approaches compare side by side

| Dimension | Traditional rank tracking | AI brand mention monitoring |
|---|---|---|
| What it measures | URL position in Google SERPs | Brand mentions in AI-generated answers |
| Data source | Google's search index | Direct LLM queries (ChatGPT, Perplexity, etc.) |
| Competitive metric | Position vs. competitors | Share of model / recommendation frequency |
| Query type | Keywords | Conversational prompts |
| Traffic attribution | Direct click tracking via GSC | Indirect influence, harder to attribute |
| Optimization levers | On-page SEO, backlinks, technical | Content engineering, citation building, entity authority |
| Platform coverage | Primarily Google (+ Bing) | 10+ AI models |
| Feedback loop | Daily/weekly rank checks | Continuous citation and crawler monitoring |

Do you still need traditional rank tracking?

Yes. Traditional SEO isn't dead -- it's just no longer the whole picture. Google still processes billions of searches daily, and organic rankings still drive significant traffic. The question isn't "which approach do I use?" but "how do I cover both?"

The practical challenge is that most tools are built for one or the other. Traditional platforms like Semrush and Ahrefs have added some AI tracking features, but they were designed for keyword-based SEO and the AI features are often bolted on rather than native.

Purpose-built AI visibility platforms go much deeper on the AI side but may lack the breadth of traditional SEO features. The right answer for most teams in 2026 is probably a combination: keep your existing rank tracker for Google performance, and add a dedicated AI visibility tool for the LLM side.

What to look for in an AI brand monitoring tool

Not all AI visibility tools are equal. Some are monitoring-only dashboards that show you data but leave you to figure out what to do with it. Others are built around the full cycle: find gaps, create content, track results.

When evaluating tools, ask these questions:

- Does it cover all the major AI models, or just one or two?

- Can it show competitor visibility alongside your own?

- Does it track which specific pages are being cited, not just overall brand mentions?

- Does it have crawler log monitoring to show which AI bots are visiting your site?

- Does it help you identify content gaps -- prompts where competitors appear but you don't?

- Can it help you create content designed to get cited, or does it just show you the problem?

- Does it connect AI visibility to actual traffic and revenue?

The last point matters more than most tools acknowledge. AI brand mentions that never translate to business outcomes aren't worth optimizing for. Traffic attribution -- whether through a code snippet, GSC integration, or server log analysis -- is what separates a vanity metric from a business metric.

Platforms like Promptwatch are built around this full loop: answer gap analysis to find what you're missing, built-in content generation to fix it, and page-level tracking plus traffic attribution to measure the result. That's a meaningfully different proposition from a tool that just shows you a dashboard of mentions.

The bottom line

Traditional rank tracking and AI brand monitoring measure different things, use different data sources, and require different optimization strategies. They're not competing approaches -- they're complementary ones that cover different parts of how people find brands in 2026.

The mistake is treating AI visibility as a future problem. With AI search traffic up 527% year-over-year and brands appearing in roughly 90% of Google AI Mode responses, the brands building AI visibility now are the ones that will be embedded in AI recommendations when the market matures. The brands that wait are building a gap that gets harder to close every month.