Key takeaways

- Generating AI content is easy. Getting that content cited by ChatGPT, Perplexity, or Google AI Overviews requires a different approach entirely.

- The most effective workflows in 2026 follow a three-stage loop: identify what AI models want to cite, create content that fits, then measure whether citations actually increase.

- Human judgment still matters at every stage -- for strategy, for fact-checking, and for adding the specificity that makes content worth citing.

- Tracking AI visibility separately from traditional SEO is now a requirement, not a nice-to-have.

- Tools exist for every stage of this workflow, but most only cover one or two steps. The gap between "we published content" and "we can prove it's working" is where most teams get stuck.

There's a version of an AI writing workflow that a lot of teams are running right now. It goes like this: someone writes a prompt, ChatGPT or Claude generates a draft, a human does a light edit, and the article goes live. Repeat until the content calendar is full.

That's not a bad start. But it's also not a strategy. And in 2026, it's increasingly not enough -- because the goal isn't just to publish content. The goal is to get cited.

AI search engines like ChatGPT, Perplexity, and Google AI Overviews don't just index pages. They pull from sources they trust, and they cite those sources in their answers. If your content isn't structured, authoritative, and aimed at the right questions, it won't make the cut. You'll be invisible in the places where more and more of your potential customers are looking.

This guide is about building a workflow that closes that loop -- from the first prompt to proof that your content is actually working.

Why "generate and publish" isn't enough anymore

The content volume problem is solved. Any team with access to a decent AI writing tool can produce 50 articles a month. The quality floor has risen too -- AI-generated drafts are coherent, reasonably well-structured, and passable on the first read.

The problem is that everyone else can do the same thing. Volume is no longer a differentiator. What matters now is whether your content gets surfaced when someone asks an AI model a question in your category.

That requires something more deliberate: understanding which prompts and questions AI models are actually answering, identifying where your content is missing or weak, creating content that directly addresses those gaps, and then tracking whether AI models start citing you.

Most teams skip the first and last of those steps entirely. They generate content based on gut instinct or keyword lists, publish it, and then have no idea whether it's moving the needle in AI search. That's the gap this workflow is designed to close.

Stage 1: Find the gaps before you write anything

The biggest mistake in AI content strategy is starting with a blank page. Before you write a word, you need to know what AI models are being asked in your category -- and where your competitors are showing up but you're not.

This is called answer gap analysis, and it's the foundation of everything else.

What to look for

You're trying to answer three questions:

- Which prompts and questions are people asking AI models in your niche?

- Which of those prompts are your competitors being cited for?

- Which of those prompts return zero citations from your domain?

The intersection of "high-value prompt" and "you're not cited" is your content priority list. It's more useful than any keyword research tool because it shows you exactly what AI models want to answer -- and what they can't find on your site.
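
The intersection described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular tool computes it: the `citations` mapping, the domain names, and the `find_answer_gaps` helper are all hypothetical, and it assumes you already have, for each prompt, the set of domains that AI answers cited.

```python
from typing import Dict, Set


def find_answer_gaps(
    citations: Dict[str, Set[str]], own_domain: str
) -> Dict[str, Set[str]]:
    """Return prompts where at least one other domain is cited but own_domain is not."""
    gaps: Dict[str, Set[str]] = {}
    for prompt, domains in citations.items():
        competitors = domains - {own_domain}
        if own_domain not in domains and competitors:
            gaps[prompt] = competitors
    return gaps


# Hypothetical data: prompt -> domains cited in AI answers for that prompt.
citations = {
    "best ai writing workflow": {"competitor-a.com", "competitor-b.com"},
    "how to track ai citations": {"example.com", "competitor-a.com"},
    "what is answer gap analysis": set(),
}

gaps = find_answer_gaps(citations, "example.com")
for prompt, competitors in gaps.items():
    print(prompt, "->", sorted(competitors))
```

Prompts with no cited domains at all are left out on purpose here. They may still be worth writing for, but they are a different kind of opportunity than a prompt where a competitor is already winning the citation.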

Tools like Promptwatch are built specifically for this. The Answer Gap Analysis feature shows you which prompts competitors rank for that you don't, so you're not guessing at topics -- you're working from actual citation data.

For traditional keyword research that feeds into this process, tools like Frase and MarketMuse help you understand what questions exist in a topic area before you start writing.

Prioritizing what to write

Not every gap is worth filling. Before you start generating content, score each opportunity by:

- Prompt volume (how often is this question asked?)

- Difficulty (how many authoritative sources are already being cited?)

- Business relevance (does ranking for this actually bring in the right audience?)

This is where prompt intelligence data becomes genuinely useful. Knowing that a prompt gets asked frequently but has few strong sources is a green light. Knowing that a prompt is dominated by Wikipedia and major news outlets is a signal to look elsewhere.
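
One way to turn those three criteria into a ranked list is a simple weighted score. The sketch below is an assumption-heavy illustration: the 0-to-1 scales, the weights, and the `Opportunity` class are invented for the example, and you would tune them to your own data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Opportunity:
    prompt: str
    volume: float      # 0..1, how often the prompt is asked (normalized)
    difficulty: float  # 0..1, how entrenched the currently cited sources are
    relevance: float   # 0..1, how well the audience matches your business


def score(opp: Opportunity, w_volume: float = 0.4,
          w_difficulty: float = 0.3, w_relevance: float = 0.3) -> float:
    """Higher is better: frequent, winnable, relevant prompts score highest."""
    return (w_volume * opp.volume
            + w_difficulty * (1.0 - opp.difficulty)
            + w_relevance * opp.relevance)


def prioritize(opps: List[Opportunity]) -> List[Opportunity]:
    """Sort opportunities from most to least promising."""
    return sorted(opps, key=score, reverse=True)


# Hypothetical opportunities for illustration only.
backlog = [
    Opportunity("niche question, few strong sources", 0.8, 0.2, 0.9),
    Opportunity("broad question dominated by major outlets", 0.9, 0.9, 0.5),
    Opportunity("rarely asked question", 0.3, 0.1, 0.4),
]

for opp in prioritize(backlog):
    print(f"{score(opp):.2f}  {opp.prompt}")
```

Difficulty is inverted so that a prompt dominated by Wikipedia and major news outlets drags the score down, which matches the "look elsewhere" signal described above.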

Stage 2: Build a content brief that's actually useful

AI writing tools are only as good as the instructions you give them. A vague prompt produces a vague article. A detailed brief produces something worth publishing.

Here's what a useful content brief looks like in 2026:

- The specific question or prompt you're targeting

- The audience persona (who's asking this, and what do they already know?)

- The angle that differentiates your take from what's already being cited

- Specific data points, statistics, or examples to include

- Structural requirements (headers, lists, FAQs, tables -- formats that AI models tend to cite)

- Word count and depth expectations

- Internal links and related topics to reference

The brief is where human strategy lives. The AI handles execution. If you skip the brief and just prompt the AI to "write an article about X," you'll get something generic -- and generic content doesn't get cited.

Content brief tools like Content Harmony can help you build briefs grounded in real SERP and question data.
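
If your team passes briefs to an AI writing tool programmatically, the brief fields listed above can be captured as a small data structure. This is a sketch under stated assumptions: the `ContentBrief` class, its field names, and the prompt wording are hypothetical, not a format any specific tool requires.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContentBrief:
    target_prompt: str
    audience: str
    angle: str
    data_points: List[str] = field(default_factory=list)
    structure: List[str] = field(default_factory=list)
    word_count: int = 1500
    internal_links: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of required fields that are still empty."""
        required = {
            "target_prompt": self.target_prompt,
            "audience": self.audience,
            "angle": self.angle,
            "data_points": self.data_points,
            "structure": self.structure,
        }
        return [name for name, value in required.items() if not value]

    def to_prompt(self) -> str:
        """Render the brief as instructions for a drafting model."""
        missing = self.missing_fields()
        if missing:
            raise ValueError(f"Brief is incomplete: {', '.join(missing)}")
        lines = [
            f"Target question: {self.target_prompt}",
            f"Audience: {self.audience}",
            f"Angle: {self.angle}",
            f"Target length: about {self.word_count} words",
            "Include these data points: " + "; ".join(self.data_points),
            "Use these structural elements: " + ", ".join(self.structure),
        ]
        if self.internal_links:
            lines.append("Link to: " + ", ".join(self.internal_links))
        return "\n".join(lines)
```

Refusing to render an incomplete brief is a deliberate choice: it makes "write an article about X" impossible to send by accident.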

Stage 3: Generate the draft -- with the right tool for the job

Once you have a solid brief, the actual generation step is relatively fast. The key is choosing a tool that fits the type of content you're producing.

| Tool | Best for | AI search optimization | Content volume |
|---|---|---|---|
| Jasper | Marketing copy, campaigns | Moderate | High |
| Claude | Long-form, nuanced writing | Good | Medium |
| ChatGPT | Versatile drafting | Good | High |
| Surfer SEO | SEO-optimized articles | Strong | Medium |
| Writesonic | Blog automation | Moderate | High |
| AirOps | Content engineered for AI citation | Strong | Medium |

A few things to keep in mind during generation:

Structure matters more than you think. AI models are more likely to cite content that's clearly organized -- with headers, bullet points, numbered lists, and direct answers near the top of sections. Write for a reader who's skimming, because that's also how AI models parse content.

Specificity beats generality. "Studies show that..." is weak. "According to Gartner's 2025 Digital Markets report..." is citable. Include real data, named sources, and concrete examples. AI models prefer content they can attribute.

FAQ sections are underrated. A well-structured FAQ at the end of an article directly mirrors how AI models respond to queries. If your FAQ answers the exact question someone is asking, you're a natural citation candidate.

Stage 4: The human edit -- where the real work happens

This is the step most teams rush, and it's the most important one.

AI-generated drafts are starting points, not finished products. The human edit isn't just about fixing grammar -- it's about adding the things AI can't generate on its own:

- Your actual experience and perspective

- Proprietary data or internal case studies

- Nuance that comes from knowing your industry

- Fact-checking (AI models hallucinate; your editor shouldn't)

A useful editing checklist:

- Does every factual claim have a source you can verify?

- Is there at least one specific example or data point per major section?

- Does the article answer the target prompt directly and early?

- Is the structure scannable -- headers, lists, short paragraphs?

- Does it sound like a person wrote it, or like a template?

Tools like Grammarly and Hemingway Editor help with the mechanical side of editing, but the substantive review has to be human.
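
Two items on the checklist above (a data point per section, scannable paragraphs) can be pre-screened mechanically before the human pass. The sketch below is a rough heuristic, not a quality measure: it assumes the draft is Markdown with `## ` section headings, treats any digit as a possible data point, and uses an arbitrary 120-word paragraph limit. All function names are hypothetical.

```python
import re
from typing import Dict, List


def split_sections(markdown: str) -> Dict[str, str]:
    """Split a Markdown draft into {heading: body} using '## ' headings."""
    sections: Dict[str, str] = {}
    title, lines = "(intro)", []
    for line in markdown.splitlines():
        if line.startswith("## "):
            sections[title] = "\n".join(lines)
            title, lines = line[3:].strip(), []
        else:
            lines.append(line)
    sections[title] = "\n".join(lines)
    return sections


def flag_sections(markdown: str, max_paragraph_words: int = 120) -> Dict[str, List[str]]:
    """Return sections that may need an editor's attention, with reasons."""
    flags: Dict[str, List[str]] = {}
    for title, body in split_sections(markdown).items():
        if not body.strip():
            continue  # nothing to check in an empty section
        issues: List[str] = []
        if not re.search(r"\d", body):
            issues.append("no number or data point")
        paragraphs = [p for p in body.split("\n\n") if p.strip()]
        if any(len(p.split()) > max_paragraph_words for p in paragraphs):
            issues.append("paragraph too long to scan")
        if issues:
            flags[title] = issues
    return flags
```

A flag only tells the editor where to look first. Whether a number is real, sourced, and relevant is still a human call.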

Stage 5: Optimize for AI retrieval, not just traditional SEO

Traditional SEO and AI search optimization overlap, but they're not the same thing. Here's where they diverge:

Traditional SEO prioritizes keyword targeting, backlinks, and page authority. AI search optimization prioritizes direct answers, source credibility, and structured content that's easy to extract.

Some specific things to optimize for AI citation:

Answer the question in the first paragraph. Don't bury the lede. AI models often pull from the opening section of an article.

Use schema markup. Schema.org types such as FAQPage, HowTo, and Article can help AI crawlers understand your content's structure and purpose.

Build topical authority. A single article rarely gets cited in isolation. AI models tend to trust domains that cover a topic comprehensively. If you're writing about AI writing workflows, you should also have content about prompt engineering, content strategy, and AI search visibility.

Get cited elsewhere. Reddit threads, YouTube videos, and third-party articles that reference your content increase the signal that you're a credible source. AI models pull from these secondary sources too.

Tools like Clearscope and NeuronWriter help with content optimization at the article level.

For structured data and entity optimization, WordLift is worth looking at.
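
To make the schema markup point concrete, here is a minimal sketch that builds FAQPage structured data as JSON-LD from a list of question-and-answer pairs. The `faq_jsonld` and `script_tag` helpers and the sample question are hypothetical; the property names (`mainEntity`, `acceptedAnswer`, and so on) follow the Schema.org vocabulary.

```python
import json
from typing import List, Tuple


def faq_jsonld(qa_pairs: List[Tuple[str, str]]) -> str:
    """Build a Schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Hypothetical FAQ content for illustration.
markup = faq_jsonld([
    ("What is answer gap analysis?",
     "Finding prompts where competitors are cited by AI models and you are not."),
])
print(script_tag(markup))
```

The questions and answers in the markup should match what is visibly on the page; structured data that describes content readers can't see tends to be ignored or penalized.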

Stage 6: Publish and distribute strategically

Where you publish matters. AI models don't just crawl your website -- they pull from Reddit, YouTube, LinkedIn, industry publications, and other high-authority sources.

A smart distribution strategy means:

- Publishing on your own domain (foundational)

- Syndicating or contributing to industry publications

- Creating Reddit posts or comments that reference your content

- Building YouTube content that covers the same topics

- Getting your content linked from other credible sources

This isn't about gaming the system. It's about being genuinely present in the places where AI models look for authoritative information.

For managing content across channels, platforms like Narrato AI help teams coordinate production and distribution without losing track of what's where.

Stage 7: Track whether it's actually working

This is where most workflows fall apart. Teams publish content and then check Google Analytics to see if traffic went up. But AI search traffic doesn't always show up cleanly in traditional analytics -- and citation visibility requires a completely different measurement approach.

You need to know:

- Which AI models are citing your content, and for which prompts?

- Which pages are being cited most frequently?

- How does your citation rate compare to competitors?

- Is AI visibility translating into actual traffic and conversions?

This is where dedicated AI visibility tracking becomes necessary. Promptwatch tracks citations across 10 AI models -- ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, and more -- and shows you page-level data on what's being cited and how often. The traffic attribution features (via code snippet, Google Search Console integration, or server log analysis) connect AI visibility to actual revenue, which is the number most stakeholders care about.
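
Whatever tool collects the data, the first and third questions above reduce to a simple ratio: of the answers a model gave to your tracked prompts, how many cited your domain? The sketch below assumes you can export observations as (model, prompt, cited domains) records; the `Observation` type and the sample data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple


class Observation(NamedTuple):
    model: str                      # e.g. "chatgpt", "perplexity"
    prompt: str                     # the tracked prompt that was asked
    cited_domains: Tuple[str, ...]  # domains cited in that answer


def citation_rate_by_model(
    observations: Iterable[Observation], domain: str
) -> Dict[str, float]:
    """Share of observed answers, per model, that cite the given domain."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for obs in observations:
        totals[obs.model] += 1
        if domain in obs.cited_domains:
            hits[obs.model] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Hypothetical observations for illustration.
observations = [
    Observation("chatgpt", "best ai writing workflow", ("example.com", "rival.com")),
    Observation("chatgpt", "how to track ai citations", ("rival.com",)),
    Observation("perplexity", "best ai writing workflow", ("example.com",)),
]

ours = citation_rate_by_model(observations, "example.com")
theirs = citation_rate_by_model(observations, "rival.com")
```

Running the same function for a competitor's domain gives the comparison directly, and rerunning it after each publishing cycle shows whether the rate is moving.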

The AI Crawler Logs feature is particularly useful here. You can see exactly which pages AI crawlers are visiting, how often they return, and whether they're encountering errors. If ChatGPT's crawler is hitting your site but not citing your content, that's a signal to investigate the content quality or structure.
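
If you have raw server access logs, a rough version of the same check can be done by hand. The sketch below assumes the common "combined" log format and matches a short, non-exhaustive list of user-agent tokens; verify the current tokens against each vendor's crawler documentation before relying on it. The function name and sample log lines are hypothetical.

```python
import re
from collections import Counter
from typing import Iterable, Tuple

# Non-exhaustive list of AI crawler user-agent tokens (an assumption to verify).
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Request, status, size, referrer, and user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines: Iterable[str]) -> Tuple[Counter, Counter]:
    """Count visits and error responses (status >= 400) per (crawler, path)."""
    visits: Counter = Counter()
    errors: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        bot = next((t for t in AI_CRAWLER_TOKENS if t in match["agent"]), None)
        if bot is None:
            continue  # not a crawler on our list
        key = (bot, match["path"])
        visits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return visits, errors


# Hypothetical log lines for illustration.
sample = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [11/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [11/Jan/2026:11:00:00 +0000] "GET /old HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.9 - - [11/Jan/2026:12:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

visits, errors = summarize_ai_crawls(sample)
```

Pages that crawlers visit often but that never show up in your citation data are the ones to review first for content quality or structure.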

Otterly.AI is another tool worth knowing for tracking.

Putting it all together: the full workflow

Here's the complete loop, condensed:

- Identify gaps -- Use answer gap analysis to find high-value prompts where competitors are cited but you're not

- Build a brief -- Define the target prompt, audience, angle, required data, and structure

- Generate the draft -- Use an AI writing tool suited to the content type

- Edit for substance -- Add real data, verify facts, inject your perspective

- Optimize for AI retrieval -- Structure for scannability, add schema, build topical depth

- Distribute strategically -- Publish on your domain and in the secondary sources AI models trust

- Track citations -- Monitor which AI models cite your content and for which prompts

- Close the loop -- Use citation data to identify the next round of gaps and repeat

The loop doesn't end at publication. Every round of tracking feeds back into the gap analysis, giving you a clearer picture of what's working and what to write next.

Common mistakes that break the workflow

Skipping the brief. Prompting an AI to "write a 1500-word article about content marketing" produces something forgettable. The brief is where strategy happens.

Publishing without fact-checking. AI models hallucinate. If your published content contains a fabricated statistic, it will eventually be noticed -- and it undermines the credibility that makes you citable.

Treating AI search and traditional SEO as identical. They overlap, but they're not the same. A page that ranks well on Google may not get cited by Perplexity, and vice versa. You need to optimize for both.

Measuring only traffic. Citation visibility and organic traffic are related but distinct. A page can be cited frequently by AI models without driving significant direct traffic -- and that citation still has value for brand awareness and downstream conversions.

Writing for volume instead of depth. Fifty shallow articles will underperform five genuinely comprehensive ones in AI citation. AI models prefer sources that cover a topic thoroughly.

The tools that matter most

To summarize the stack by workflow stage:

| Stage | What you need | Tools to consider |

|---|---|---|

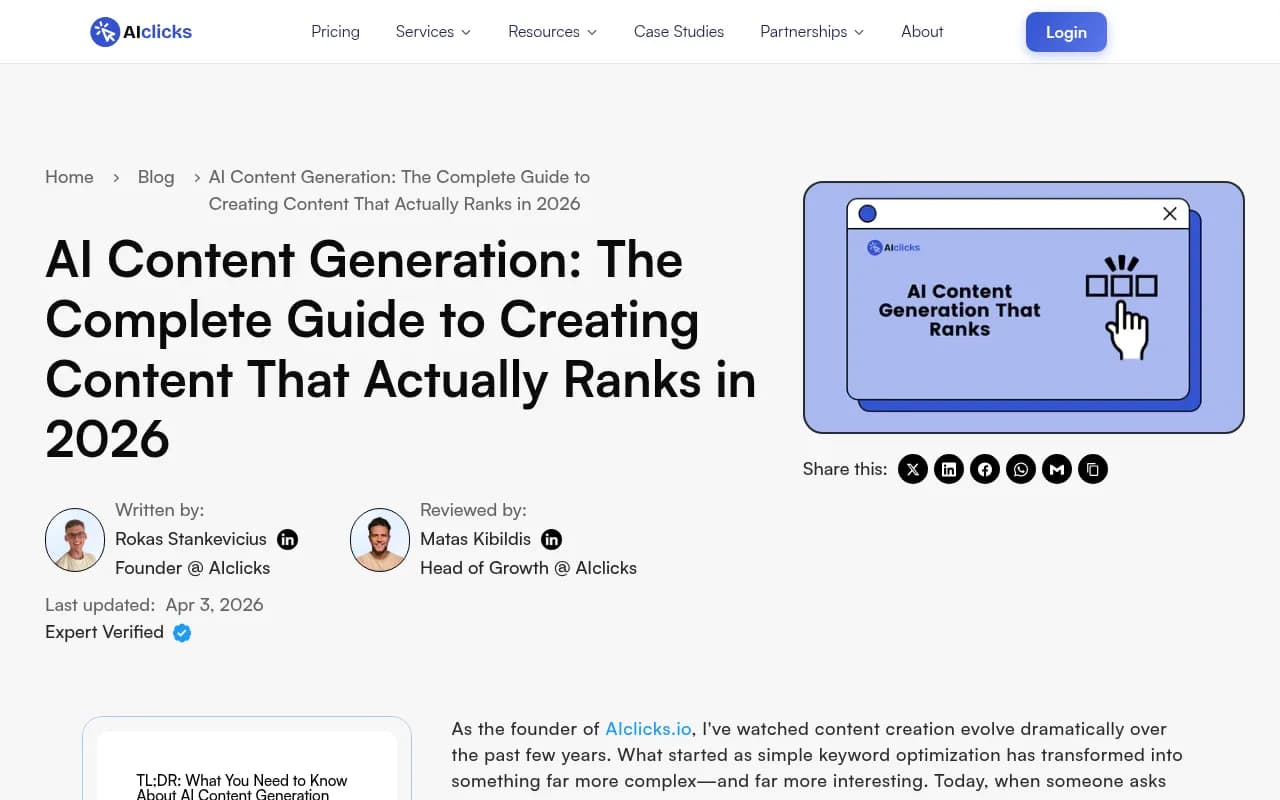

| Gap analysis | AI visibility data, competitor citation tracking | Promptwatch, AIclicks |

| Brief building | Question research, SERP analysis | Content Harmony, Frase, MarketMuse |

| Content generation | AI writing with structure | Jasper, Claude, ChatGPT, Writesonic |

| Editing | Grammar, readability, clarity | Grammarly, Hemingway Editor |

| Optimization | Content scoring, schema, entity optimization | Clearscope, NeuronWriter, WordLift, Surfer SEO |

| Distribution | Content workflow management | Narrato AI |

| Tracking | AI citation monitoring, traffic attribution | Promptwatch, AIclicks, Otterly.AI |

The honest reality is that most tools cover one or two stages well. The gap between "we generated content" and "we can prove it's being cited and driving results" is where most teams get stuck -- and it's where having an end-to-end platform matters.

Promptwatch is the only platform in this space that covers the full loop: gap analysis, content generation grounded in citation data, and citation tracking with traffic attribution. That's not a small thing. Most competitors stop at monitoring and leave you to figure out the rest yourself.

One last thing

The workflow described here isn't complicated. But it does require discipline -- specifically, the discipline to not skip the gap analysis at the start or the tracking at the end. Those two steps are what separate teams that are guessing from teams that are learning.

Content generation is a commodity now. The competitive advantage is in knowing what to write, writing it well, and being able to prove it's working. Build the loop, run it consistently, and the results will follow.