Key takeaways

- Nearly 60% of U.S. Google searches now end without a click (58.5%, per Averi) -- AI models answer the question directly, so being cited matters more than ranking

- AI-referred visitors convert at 14.2% vs. traditional organic's 2.8% -- the quality premium is real

- The 60-day path breaks into three phases: audit and baseline (days 1-14), content creation (days 15-45), and tracking and iteration (days 46-60)

- Most brands fail because they monitor their AI visibility but never fix it -- the action loop (find gaps, create content, track results) is what actually moves the needle

- You don't need a massive site or domain authority to get cited -- you need the right content structure, the right topics, and consistent entity signals

Let's be honest about what's happening. Your Google Analytics traffic is flat or declining. Your rankings look fine. But something is off.

What's off is that the search landscape shifted while most marketing teams were still optimizing title tags. According to data from Averi, 58.5% of U.S. Google searches now end without a single click to any website. When AI Overviews appear -- which they do for 50-60% of searches -- that number jumps to 83%. And it's not just Google. ChatGPT, Perplexity, Claude, Gemini, and Grok are all answering questions directly, pulling from sources they've decided to trust.

The brands getting cited are winning. The ones that aren't are effectively invisible, even if they technically rank on page one.

This playbook is a practical, week-by-week guide to getting your brand from zero AI visibility to consistently cited -- in 60 days. No fluff, no vague advice about "creating quality content." Just the specific moves that work.

Why AI citation is different from traditional SEO

Before the tactics, it's worth understanding why the game changed -- because the mental model shift matters.

Traditional SEO was about ranking. You optimized a page, it climbed to position one, people clicked, you got traffic. The metric was clear: rank higher, get more clicks.

AI citation doesn't work that way. When someone asks ChatGPT "what's the best project management tool for remote teams," ChatGPT doesn't return a list of ranked URLs. It synthesizes an answer and cites the sources it found most credible and relevant. Your page might be ranking #1 on Google and still never appear in that answer -- because the content isn't structured in a way the model can extract and trust.

The new game is about being a citable source. That means:

- Writing content that directly answers specific questions (not just broadly covers topics)

- Building entity authority so AI models recognize your brand as a known, trustworthy entity

- Getting mentioned in the places AI models pull from -- not just your own site, but Reddit, YouTube, third-party publications, and review sites

- Structuring content so models can extract clean, quotable answers

The good news: you don't need a 10-year-old domain or a massive backlink profile to get cited. New sites with well-structured, authoritative content are getting cited regularly. The barrier is lower than traditional SEO -- but the approach is different.

Phase 1: Audit and baseline (days 1-14)

Step 1: Establish your baseline (days 1-3)

You can't improve what you can't measure. Before doing anything else, find out where you actually stand.

Manually test 10-15 prompts that your target customers would realistically ask. Go into ChatGPT, Perplexity, Claude, and Google AI Overviews and ask questions like:

- "What's the best [your category] tool for [your use case]?"

- "How do I [problem your product solves]?"

- "What are the top [your category] options for [specific persona]?"

Write down whether your brand appears, which competitors do appear, and what sources get cited. This is your baseline.

For anything beyond manual spot-checking, a dedicated AI visibility tracker saves a lot of time. Promptwatch tracks your brand across 10 AI models simultaneously and shows you exactly which prompts you're appearing in (and which you're not).

Another option worth knowing about is Otterly.AI.

Step 2: Map your prompt universe (days 4-7)

Most brands make the mistake of tracking 5-10 generic prompts. That's not enough signal. You need a map of the full question space your customers are navigating.

Think in terms of:

- Awareness prompts ("what is [category]", "how does [process] work")

- Consideration prompts ("best [category] tools", "[your brand] vs [competitor]")

- Decision prompts ("is [your brand] worth it", "[your brand] pricing", "[your brand] reviews")

- Use-case prompts ("best [category] for [specific use case]", "[category] for [industry]")

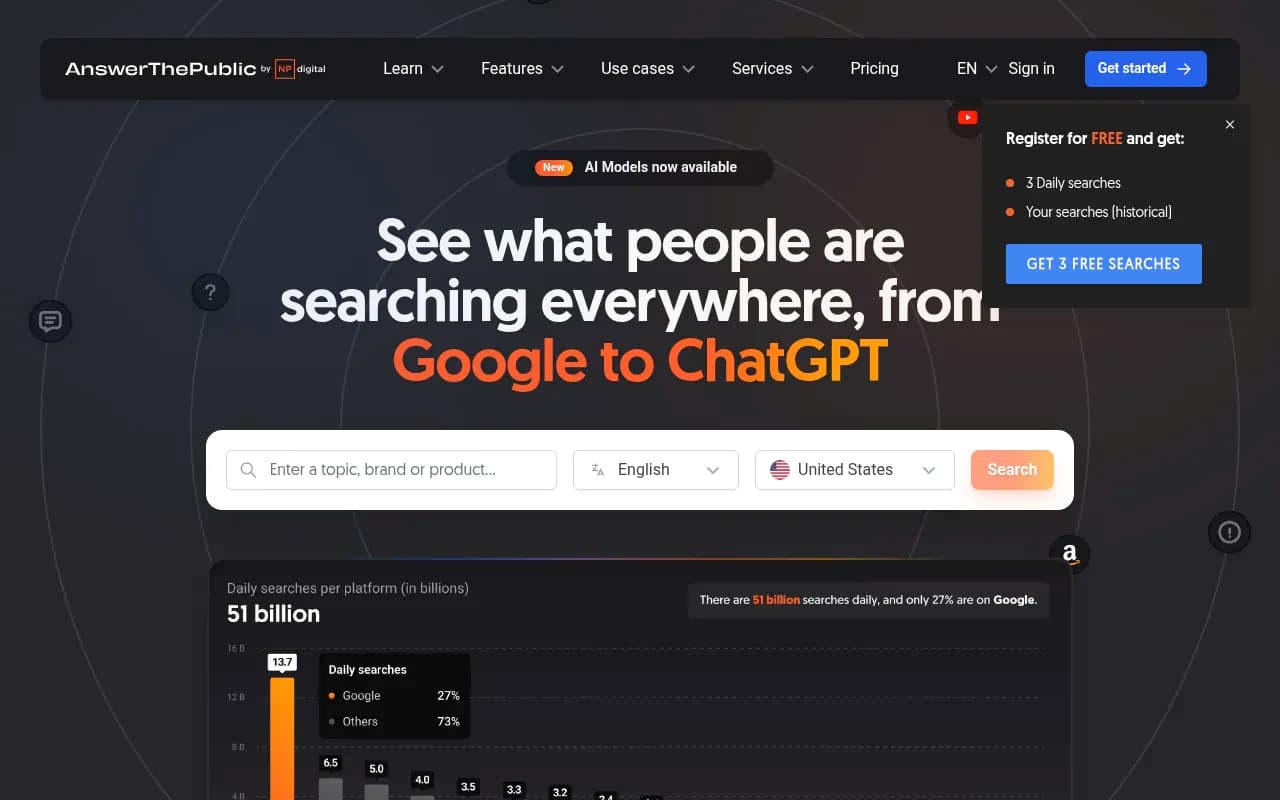

Tools like AnswerThePublic and AlsoAsked are useful for surfacing the actual questions people ask.

Aim for 50-100 prompts across all four stages. You won't track all of them manually -- that's what monitoring tools are for -- but you need the full map to prioritize.
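One way to reach 50-100 prompts without writing each by hand is to expand the bracketed templates above against short lists of your own values. The sketch below is a minimal illustration, not a prescribed method: the brand, competitor, and category values are hypothetical placeholders, and the function names are invented for this example.

```python
from itertools import product
from string import Formatter

# Templates mirror the four stages above; {fields} are filled from VALUES.
TEMPLATES = {
    "awareness": ["what is {category}", "how does {process} work"],
    "consideration": ["best {category} tools", "{brand} vs {competitor}"],
    "decision": ["is {brand} worth it", "{brand} pricing", "{brand} reviews"],
    "use_case": ["best {category} for {use_case}", "{category} for {industry}"],
}

# Hypothetical values; replace with your own brand, competitors, and segments.
VALUES = {
    "category": ["CRM"],
    "process": ["lead scoring"],
    "brand": ["Acme"],
    "competitor": ["Rival", "Other"],
    "use_case": ["startups"],
    "industry": ["healthcare"],
}


def expand(template, values):
    """Fill one template with every combination of its placeholder values."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    fields = list(dict.fromkeys(fields))  # drop repeats, keep order
    combos = product(*(values[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


def build_prompt_map(templates, values):
    """Return {stage: [prompt, ...]} for all templates in each stage."""
    return {
        stage: [p for t in stage_templates for p in expand(t, values)]
        for stage, stage_templates in templates.items()
    }


prompt_map = build_prompt_map(TEMPLATES, VALUES)
```

Adding a second category or a few more competitors multiplies the output quickly, so it is worth pruning the result by hand before tracking it.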

Step 3: Run a content gap analysis (days 8-14)

Now compare your prompt map against what's actually on your site. For each prompt category, ask: does my site have a page that directly and specifically answers this question?

Not "does my site broadly cover this topic." Does it have a page that answers this specific question, with a clear answer in the first 100 words, structured so a model can extract it?

Most sites have huge gaps here. A SaaS company might have a features page and a blog, but nothing that directly answers "what's the difference between [their tool] and [competitor]" or "how do I [specific use case] with [their tool]."

The gaps you find here become your content roadmap for Phase 2.
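A rough first pass at this comparison can be scripted. The sketch below flags a prompt as a gap when no page mentions most of the prompt's key terms in its first 100 words. The keyword-overlap heuristic, the stopword list, and the 0.8 threshold are all assumptions made for illustration; they are a crude stand-in for reading the pages yourself.

```python
import re

# Small, hand-picked stopword list (an assumption; extend as needed).
STOPWORDS = {"a", "an", "and", "are", "do", "does", "for", "how", "i", "in",
             "is", "of", "s", "the", "to", "what", "with"}


def key_terms(text):
    """Lowercase word set with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def opening(text, limit=100):
    """First `limit` whitespace-separated words of a page body."""
    return " ".join(text.split()[:limit])


def find_gaps(prompts, pages, threshold=0.8):
    """Return prompts that no page covers in its opening words.

    pages: {url: body_text}. A prompt counts as covered when at least
    `threshold` of its key terms appear in some page's first 100 words.
    """
    gaps = []
    for prompt in prompts:
        terms = key_terms(prompt)
        covered = any(
            terms and len(terms & key_terms(opening(body))) / len(terms) >= threshold
            for body in pages.values()
        )
        if not covered:
            gaps.append(prompt)
    return gaps
```

Term overlap says nothing about whether the answer is clear or correct, so treat the output as a shortlist to review, not a verdict.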

Promptwatch's Answer Gap Analysis automates this -- it shows you the specific prompts your competitors are visible for that you're not, with the actual content your site is missing. That's a much faster starting point than doing it manually.

Phase 2: Create content that gets cited (days 15-45)

This is where most of the work happens. You have your gaps. Now you need to fill them with content that AI models will actually cite.

What makes content citable

AI models prefer sources that:

- Give direct, specific answers (not hedged, vague, or padded)

- Have clear structure (headers, short paragraphs, lists where appropriate)

- Demonstrate expertise through specificity (numbers, named examples, concrete claims)

- Are consistent with what the model has seen cited elsewhere (entity reinforcement)

The worst thing you can write is a 2,000-word article that takes 1,800 words to get to the actual answer. Models are extracting the answer block -- if it's buried, they'll find it somewhere else.

Content types that get cited

Based on citation patterns across AI models, these formats consistently perform well:

- Direct comparison pages ("X vs Y: which is better for [use case]")

- Definitive how-to guides with numbered steps

- "Best [category] for [specific use case]" listicles with clear criteria

- FAQ pages that answer specific questions with concise, quotable answers

- Original data or research (models love citing primary sources)

Week-by-week content plan

Week 3 (days 15-21): Write 3-4 comparison pages targeting your highest-priority consideration prompts. These are often the most valuable -- when someone asks ChatGPT to compare your brand to a competitor, you want your own page to be the cited source.

Week 4 (days 22-28): Write 4-5 use-case pages targeting specific audience segments. "Best [your category] for [industry]" or "how to use [your tool] for [specific workflow]." These capture long-tail prompts that are often easier to win.

Week 5 (days 29-35): Create an FAQ hub. Take the 20-30 most common questions from your prompt map and write clean, direct answers. This is one of the highest-leverage content investments for AI citation -- models frequently pull from FAQ-style content.

Week 6 (days 36-42): Publish on third-party platforms. AI models don't only cite your own site. They cite Reddit discussions, YouTube videos, LinkedIn posts, and publications like Medium. Write a detailed answer on Reddit in relevant subreddits. Publish a summary on LinkedIn. Get a guest post on a relevant publication. This is how you build the off-site citation footprint that reinforces your entity.

Week 7 (days 43-45): Schema markup and technical cleanup. Add FAQ schema, HowTo schema, and Article schema to your new pages. This isn't magic, but it helps models parse your content structure. Also check that your pages are actually being crawled by AI bots -- GPTBot, ClaudeBot, PerplexityBot. If they're blocked in your robots.txt, nothing else matters.
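For the FAQ schema step, the markup is a JSON-LD object using schema.org's `FAQPage`, `Question`, and `Answer` types. The sketch below builds one from question/answer pairs and wraps it in a script tag. The helper names are invented for this example, and how the tag gets into your page template depends on your CMS.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def as_script_tag(data):
    """Serialize to a JSON-LD script tag for the page's HTML."""
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Keep the answer text in the markup identical to the visible answer on the page, so the structured data and the content say the same thing.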

For content creation at scale, a few tools worth considering are AirOps, Surfer SEO, and Frase.

Phase 3: Track, iterate, and close the loop (days 46-60)

What to measure

By day 46, you should have 15-20 new pages live. Now you need to know if they're working.

The metrics that matter for AI visibility:

- Citation rate: how often your brand appears when the relevant prompts are queried

- Share of voice: your citations vs. competitors' citations across your prompt set

- Which pages are being cited: page-level tracking tells you which specific URLs are getting pulled

- AI-referred traffic: visitors coming from ChatGPT, Perplexity, and other AI models (check your analytics for referrals from chatgpt.com, the older chat.openai.com, perplexity.ai, etc.)
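The first two metrics are simple ratios, and it helps to pin the definitions down before comparing numbers across tools. The sketch below assumes each tracked prompt run is recorded as the set of brands cited in the answer. That input shape and the function names are assumptions for illustration, not any tracker's export format.

```python
from collections import Counter


def citation_rate(results, brand):
    """Fraction of prompt runs in which `brand` was cited.

    results: list of sets, one per prompt run, each holding the brands cited.
    """
    if not results:
        return 0.0
    return sum(brand in cited for cited in results) / len(results)


def share_of_voice(results):
    """Each brand's share of all brand citations across the prompt set."""
    counts = Counter(brand for cited in results for brand in cited)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}
```

Citation rate is measured against the number of prompts, while share of voice is measured against the number of citations, so the two can move in different directions when competitors gain or lose mentions.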

Closing the loop

Here's where most teams stop short. They track visibility scores going up and call it a win. But the real question is: does AI visibility translate to actual business outcomes?

To answer that, you need to connect AI citations to traffic, and traffic to conversions. This means:

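- Adding UTM parameters to the links you control in content that AI models cite (for example, links back to your site from Reddit answers, LinkedIn posts, and guest posts)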

- Monitoring referral traffic from AI platforms in Google Analytics

- Checking server logs for AI crawler activity (which pages are being crawled, how often, any errors)
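If you export raw referrer URLs from your analytics, the referral check can be reduced to a hostname lookup. In the sketch below, `chat.openai.com` and `perplexity.ai` come from this guide. The other hostnames are assumptions about where each platform sends traffic from, so verify them against your own referral reports.

```python
from urllib.parse import urlsplit

# Referrer hostname -> platform label. Only the chat.openai.com and
# perplexity.ai entries come from the guide; the rest are assumptions.
AI_REFERRER_HOSTS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer):
    """Return the AI platform for a full referrer URL, or None if not matched."""
    host = (urlsplit(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)
```

Visits that arrive with no referrer at all cannot be classified this way, so this count is a lower bound on AI-referred traffic.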

Promptwatch handles this with a traffic attribution module -- it connects visibility data to actual site traffic through a code snippet, Google Search Console integration, or server log analysis. That closes the loop from "we got cited" to "that citation drove X visitors who converted at Y%."

Iteration: what to do when something isn't working

Not every page will get cited immediately. When a page isn't getting traction after 2-3 weeks, run through this checklist:

- Is the answer too buried? Move the key answer to the first paragraph.

- Is the content too generic? Add specific numbers, named examples, or original data.

- Is the page being crawled? Check crawler logs for GPTBot and ClaudeBot activity.

- Is your entity reinforced? Make sure your brand name, description, and key claims are consistent across your site, your Google Business Profile, your LinkedIn, and any third-party mentions.
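For the crawl check, a short script over your access logs shows which pages the AI bots requested and what status they received. The sketch below assumes logs in the common "combined" format, with the user agent as the last quoted field, and matches the bot names mentioned in this guide as substrings of the user agent. Adjust the pattern if your server logs a different layout.

```python
import re
from collections import Counter

AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches: "METHOD /path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_hits(lines):
    """Count requests per (bot, path, status) for known AI crawlers."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        for bot in AI_BOTS:
            if bot.lower() in agent:
                hits[(bot, match["path"], int(match["status"]))] += 1
                break
    return hits
```

A new page with no hits at all, or with only 4xx and 5xx statuses, points to a crawl problem and not a content problem.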

The 60-day snapshot: what success looks like

| Metric | Day 1 | Day 60 target |
|---|---|---|
| Prompts tracked | 0 | 50-100 |
| Citation rate (tracked prompts) | 0-5% | 20-40% |
| Pages optimized for AI citation | 0 | 15-25 |
| Off-site mentions (Reddit, LinkedIn, etc.) | Baseline | +10-20 new mentions |
| AI-referred traffic (monthly) | Near zero | Measurable and growing |
| Competitor visibility gap | Unknown | Mapped and shrinking |

These aren't guaranteed numbers -- they depend on your category, competition, and how aggressively you execute. But brands that follow this playbook consistently see meaningful citation gains within 60 days.

Common mistakes that kill your progress

Tracking too few prompts. If you're only monitoring 5 prompts, you'll miss most of your visibility. The brands winning in AI search are tracking 50-200 prompts across the full customer journey.

Writing for Google, not for AI models. Long-form content padded with keywords works for traditional SEO. AI models want direct answers. A 300-word page that answers one question clearly will often outperform a 3,000-word article that buries the answer.

Ignoring off-site presence. Your own website is one input. Reddit, YouTube, LinkedIn, and third-party publications are others. AI models synthesize from all of them. If you're only publishing on your own site, you're missing half the game.

Blocking AI crawlers. This sounds obvious, but a surprising number of sites have GPTBot or ClaudeBot blocked in their robots.txt -- sometimes because a developer added a blanket block years ago. Check this first.
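This check is easy to script with Python's standard-library `urllib.robotparser`. The sketch below takes the text of a robots.txt file and reports which of the crawlers named in this guide are disallowed for a given URL. The function name and the example.com default are placeholders, and fetching the file from your own domain is left to you.

```python
from urllib.robotparser import RobotFileParser

AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_bots(robots_txt, url="https://example.com/"):
    """Return the AI crawlers that robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


# A blanket rule blocks every crawler, including the AI bots.
blanket = "User-agent: *\nDisallow: /\n"
print(blocked_bots(blanket))
```

Run it against a few representative URLs and not only the homepage, since a rule can block a single directory such as your blog or docs.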

Monitoring without acting. Knowing you have zero visibility doesn't help unless you do something about it. The monitoring-only approach is where most brands get stuck. The action loop -- find gaps, create content, track results -- is what actually moves the number.

Tools to support the 60-day plan

Here's a quick reference for the tools mentioned in this guide, organized by phase:

| Phase | Task | Tool options |
|---|---|---|
| Audit | AI visibility baseline | Promptwatch, Otterly.AI, Peec AI |
| Audit | Prompt/question research | AnswerThePublic, AlsoAsked |
| Content | Content gap analysis | Promptwatch (Answer Gap Analysis) |
| Content | Content creation and optimization | AirOps, Surfer SEO, Frase |
| Content | Schema and technical SEO | Screaming Frog, Ahrefs |
| Tracking | Citation monitoring | Promptwatch, LLM Pulse |
| Tracking | Traffic attribution | Promptwatch, Google Analytics |

The 60-day mindset

The brands that will dominate AI search over the next two years aren't necessarily the ones with the biggest budgets or the most domain authority. They're the ones that treat AI visibility as an active discipline -- not a passive hope.

That means building a prompt map, finding the gaps, creating content specifically engineered to be cited, and tracking whether it's working. The cycle repeats. You get cited for more prompts, you find new gaps, you fill them.

Sixty days is enough to go from zero to a measurable presence in AI search results. But the brands that really win are the ones that keep the loop running after day 60.

Start with the audit. Everything else follows from knowing where you actually stand.